{

"cells": [

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# 07 - Ensemble Methods\n",

"\n",

"by [Alejandro Correa Bahnsen](http://www.albahnsen.com/) & [Iván Torroledo](http://www.ivantorroledo.com/)\n",

"\n",

"version 1.3, June 2018\n",

"\n",

"## Part of the class [Applied Deep Learning](https://github.com/albahnsen/AppliedDeepLearningClass)\n",

"\n",

"\n",

"This notebook is licensed under a [Creative Commons Attribution-ShareAlike 3.0 Unported License](http://creativecommons.org/licenses/by-sa/3.0/deed.en_US). Special thanks go to [Kevin Markham](https://github.com/justmarkham)"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Why are we learning about ensembling?\n",

"\n",

"- Very popular method for improving the predictive performance of machine learning models\n",

"- Provides a foundation for understanding more sophisticated models"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Lesson objectives\n",

"\n",

"Students will be able to:\n",

"\n",

"- Define ensembling and its requirements\n",

"- Identify the two basic methods of ensembling\n",

"- Decide whether manual ensembling is a useful approach for a given problem\n",

"- Explain bagging and how it can be applied to decision trees\n",

"- Explain how out-of-bag error and feature importances are calculated from bagged trees\n",

"- Explain the difference between bagged trees and Random Forests\n",

"- Build and tune a Random Forest model in scikit-learn\n",

"- Decide whether a decision tree or a Random Forest is a better model for a given problem"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# Part 1: Introduction"

]

},

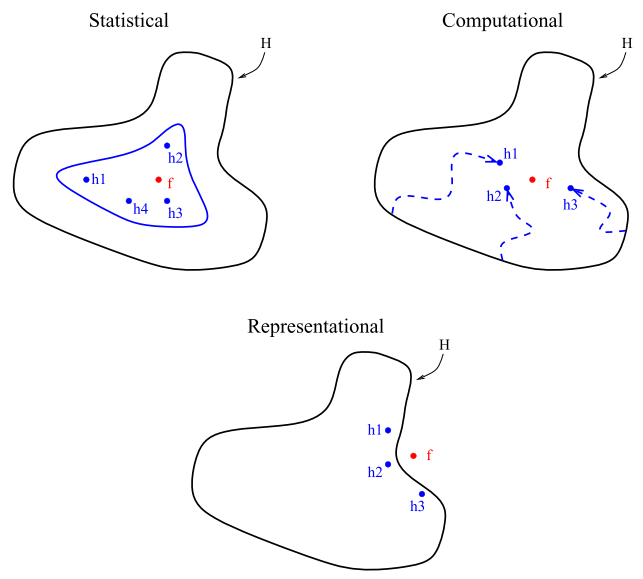

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Ensemble learning is a widely studied topic in the machine learning community. The main idea behind \n",

"the ensemble methodology is to combine several individual base classifiers in order to have a \n",

"classifier that outperforms each of them.\n",

"\n",

"Nowadays, ensemble methods are one \n",

"of the most popular and well studied machine learning techniques, and it can be \n",

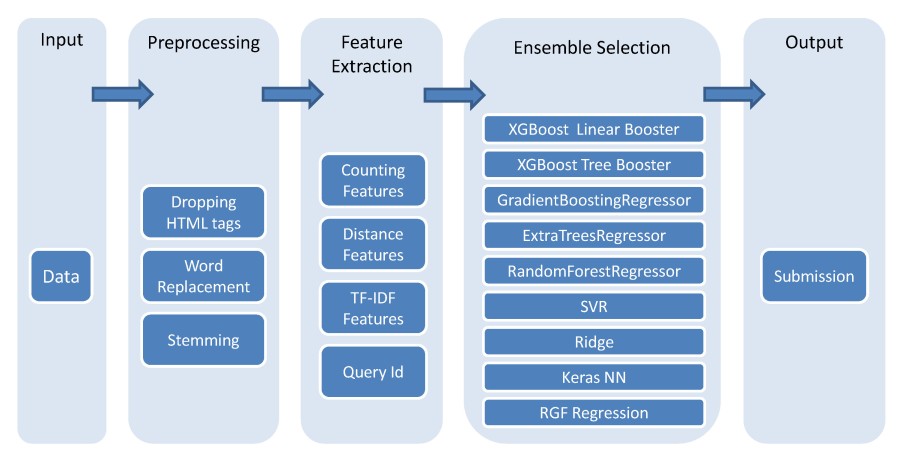

"noted that since 2009 all the first-place and second-place winners of the [KDD-Cup](https://www.sigkdd.org/kddcup/) used ensemble methods. The core \n",

"principle in ensemble learning is to induce random perturbations into the learning procedure in \n",

"order to produce several different base classifiers from a single training set, and then to combine the \n",

"base classifiers to make the final prediction. To induce the random perturbations \n",

"and thereby create the different base classifiers, several methods have been proposed, in \n",

"particular: \n",

"* bagging\n",

"* pasting\n",

"* random forests \n",

"* random patches \n",

"\n",

"Finally, after the base classifiers \n",

"are trained, they are typically combined using either:\n",

"* majority voting\n",

"* weighted voting \n",

"* stacking\n"

]

},
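The resampling schemes listed above differ mainly in how the training subset for each base classifier is drawn. The following is a minimal sketch under the usual definitions (bagging draws observations with replacement, pasting draws them without replacement, and random patches subsample both observations and features). The array sizes and variable names are illustrative assumptions, not part of the course data.

```python
import numpy as np

rng = np.random.default_rng(1234)
n_samples, n_features = 10, 4  # illustrative sizes only

# bagging: draw n_samples row indices WITH replacement (a bootstrap sample)
bagging_rows = rng.choice(n_samples, size=n_samples, replace=True)

# pasting: draw a subset of row indices WITHOUT replacement
pasting_rows = rng.choice(n_samples, size=n_samples // 2, replace=False)

# random patches: subsample both the rows and the feature columns
# (drawn without replacement in this sketch)
patch_rows = rng.choice(n_samples, size=n_samples // 2, replace=False)
patch_cols = rng.choice(n_features, size=2, replace=False)

print('bagging rows:', np.sort(bagging_rows))
print('pasting rows:', np.sort(pasting_rows))
print('patch rows:', np.sort(patch_rows), 'patch columns:', np.sort(patch_cols))
```

Because bagging samples with replacement, some rows typically appear more than once and others not at all, which is what makes each base classifier see a slightly different training set.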

{

"cell_type": "markdown",

"metadata": {},

"source": [

"There are three main reasons why ensemble \n",

"methods perform better than single models: statistical, computational and representational. First, from a statistical point of view, when the learning set is too \n",

"small, an algorithm can find several good models within the search space that achieve the same \n",

"performance on the training set $\\mathcal{S}$. Nevertheless, without a validation set, there is \n",

"a risk of choosing the wrong model; combining the models reduces that risk. The second reason is computational; in general, algorithms \n",

"rely on some local search optimization and may get stuck in a local optimum. An ensemble may \n",

"mitigate this by having different base models explore different regions of the search space. The last \n",

"reason is representational. In most cases, for a learning set of finite size, the true function \n",

"$f$ cannot be represented by any of the candidate models. By combining several models in an \n",

"ensemble, it may be possible to obtain a model with a larger coverage across the space of \n",

"representable functions."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

""

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Example\n",

"\n",

"Let's pretend that instead of building a single model to solve a binary classification problem, you created **five independent models**, and each model was correct about 70% of the time. If you combined these models into an \"ensemble\" and used their majority vote as a prediction, how often would the ensemble be correct?"

]

},

{

"cell_type": "code",

"execution_count": 1,

"metadata": {},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"[0 1 1 1 1 0 0 1 1 1 1 1 1 1 1 1 1 0 1 1]\n",

"[1 1 1 1 1 1 1 0 1 0 0 0 1 1 1 0 1 0 0 0]\n",

"[1 1 1 1 0 1 1 0 0 1 1 1 1 1 1 1 1 0 1 1]\n",

"[1 1 0 0 0 0 1 1 0 1 1 1 1 1 1 0 1 1 1 0]\n",

"[0 0 1 0 0 0 1 0 1 0 0 0 1 1 1 1 1 1 1 1]\n"

]

}

],

"source": [

"import numpy as np\n",

"\n",

"# set a seed for reproducibility\n",

"np.random.seed(1234)\n",

"\n",

"# generate 1000 random numbers (between 0 and 1) for each model, representing 1000 observations\n",

"mod1 = np.random.rand(1000)\n",

"mod2 = np.random.rand(1000)\n",

"mod3 = np.random.rand(1000)\n",

"mod4 = np.random.rand(1000)\n",

"mod5 = np.random.rand(1000)\n",

"\n",

"# each model independently predicts 1 (the \"correct response\") if its random number was greater than 0.3\n",

"preds1 = np.where(mod1 > 0.3, 1, 0)\n",

"preds2 = np.where(mod2 > 0.3, 1, 0)\n",

"preds3 = np.where(mod3 > 0.3, 1, 0)\n",

"preds4 = np.where(mod4 > 0.3, 1, 0)\n",

"preds5 = np.where(mod5 > 0.3, 1, 0)\n",

"\n",

"# print the first 20 predictions from each model\n",

"print(preds1[:20])\n",

"print(preds2[:20])\n",

"print(preds3[:20])\n",

"print(preds4[:20])\n",

"print(preds5[:20])"

]

},

{

"cell_type": "code",

"execution_count": 2,

"metadata": {},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"[1 1 1 1 0 0 1 0 1 1 1 1 1 1 1 1 1 0 1 1]\n"

]

}

],

"source": [

"# average the predictions and then round to 0 or 1\n",

"ensemble_preds = np.round((preds1 + preds2 + preds3 + preds4 + preds5)/5.0).astype(int)\n",

"\n",

"# print the ensemble's first 20 predictions\n",

"print(ensemble_preds[:20])"

]

},

{

"cell_type": "code",

"execution_count": 3,

"metadata": {},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"0.713\n",

"0.665\n",

"0.717\n",

"0.712\n",

"0.687\n"

]

}

],

"source": [

"# how accurate was each individual model?\n",

"print(preds1.mean())\n",

"print(preds2.mean())\n",

"print(preds3.mean())\n",

"print(preds4.mean())\n",

"print(preds5.mean())"

]

},

{

"cell_type": "code",

"execution_count": 4,

"metadata": {},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"0.841\n"

]

}

],

"source": [

"# how accurate was the ensemble?\n",

"print(ensemble_preds.mean())"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"**Note:** As you add more models to the voting process, the probability of error decreases, provided the models are independent and each one is correct more than half of the time. This result is known as [Condorcet's Jury Theorem](http://en.wikipedia.org/wiki/Condorcet%27s_jury_theorem)."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## What is ensembling?\n",

"\n",

"**Ensemble learning (or \"ensembling\")** is the process of combining several predictive models in order to produce a combined model that is more accurate than any individual model.\n",

"\n",

"- **Regression:** take the average of the predictions\n",

"- **Classification:** take a vote and use the most common prediction, or take the average of the predicted probabilities\n",

"\n",

"For ensembling to work well, the models must have the following characteristics:\n",

"\n",

"- **Accurate:** they outperform the null model\n",

"- **Independent:** their predictions are generated using different processes\n",

"\n",

"**The big idea:** If you have a collection of individually imperfect (and independent) models, the \"one-off\" mistakes made by each model are probably not going to be made by the rest of the models, and thus the mistakes will be discarded when averaging the models.\n",

"\n",

"There are two basic **methods for ensembling:**\n",

"\n",

"- Manually ensemble your individual models\n",

"- Use a model that ensembles for you"

]

},
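The combination rules described above (averaging for regression, majority vote or averaged probabilities for classification) can be sketched with a few small arrays. All numbers below are made-up predictions from three hypothetical models on four observations, used only to show the mechanics.

```python
import numpy as np

# regression: each row holds one model's predictions for four observations
reg_preds = np.array([[10.0, 12.0, 11.0, 9.0],
                      [11.0, 13.0, 10.0, 8.0],
                      [12.0, 11.0, 12.0, 10.0]])
reg_ensemble = reg_preds.mean(axis=0)  # average across models

# classification, option 1: majority vote over predicted classes (0 or 1)
class_preds = np.array([[1, 0, 1, 1],
                        [1, 1, 0, 1],
                        [0, 0, 1, 1]])
n_models = class_preds.shape[0]
majority_vote = (class_preds.sum(axis=0) > n_models / 2).astype(int)

# classification, option 2: average the predicted probabilities of class 1,
# then threshold the average at 0.5
proba = np.array([[0.9, 0.4, 0.6, 0.8],
                  [0.6, 0.7, 0.3, 0.9],
                  [0.4, 0.2, 0.7, 0.6]])
soft_vote = (proba.mean(axis=0) > 0.5).astype(int)

print(reg_ensemble)
print(majority_vote)
print(soft_vote)
```

With an odd number of binary classifiers the majority vote can never tie, which is one reason odd ensemble sizes are convenient.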

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Theoretical performance of an ensemble\n",

" If we assume that each one of the $T$ base classifiers has a probability $\\rho$ of \n",

" being correct, the probability of an ensemble making the correct decision, assuming independence, \n",

" denoted by $P_c$, can be calculated using the binomial distribution\n",

"\n",

"$$P_c = \\sum_{j>T/2}^{T} {{T}\\choose{j}} \\rho^j(1-\\rho)^{T-j}.$$\n",

"\n",

" Furthermore, as the number of classifiers grows:\n",

"\n",

"$$\n",

" \\lim_{T \\to \\infty} P_c= \\begin{cases} \n",

" 1 &\\mbox{if } \\rho>0.5 \\\\ \n",

" 0 &\\mbox{if } \\rho<0.5 \\\\ \n",

" 0.5 &\\mbox{if } \\rho=0.5 ,\n",

" \\end{cases}\n",

"$$\n",

"\tleading to the conclusion that, when $T$ is odd (so that ties cannot occur),\n",

"$$\n",

" \\rho \\ge 0.5 \\quad \\text{and} \\quad T\\ge3 \\quad \\Rightarrow \\quad P_c\\ge \\rho.\n",

"$$"

]

},
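The binomial expression for $P_c$ above can be evaluated directly. The sketch below uses only the Python standard library; the function name `ensemble_accuracy` is a hypothetical helper introduced here for illustration.

```python
from math import comb

def ensemble_accuracy(T, rho):
    """Probability that a strict majority of T independent classifiers,
    each correct with probability rho, is correct (P_c in the text)."""
    return sum(comb(T, j) * rho**j * (1 - rho)**(T - j)
               for j in range(T // 2 + 1, T + 1))

# P_c for a growing, odd number of classifiers that are each 70% accurate
for T in (1, 5, 25, 101):
    print(T, round(ensemble_accuracy(T, 0.7), 4))
```

For $T=5$ and $\rho=0.7$ this gives about 0.837, close to the 0.841 observed in the simulated five-model example earlier in the notebook.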

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# Part 2: Manual ensembling\n",

"\n",

"What makes a good manual ensemble?\n",

"\n",

"- Different types of **models**\n",

"- Different combinations of **features**\n",

"- Different **tuning parameters**"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"\n",

"\n",

"*Machine learning flowchart created by the [winner](https://github.com/ChenglongChen/Kaggle_CrowdFlower) of Kaggle's [CrowdFlower competition](https://www.kaggle.com/c/crowdflower-search-relevance)*"

]

},
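As an illustration of combining different types of models, the sketch below uses scikit-learn's `VotingClassifier` on synthetic data. The dataset, the three model choices and their parameters are assumptions made for this example; they are not the models used later in this notebook.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import VotingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# synthetic binary classification data, used only for illustration
X, y = make_classification(n_samples=500, n_features=10, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=1)

# three different model types, combined by hard (majority) voting
ensemble = VotingClassifier(estimators=[
    ('logreg', LogisticRegression(max_iter=1000)),
    ('tree', DecisionTreeClassifier(max_depth=3, random_state=1)),
    ('knn', KNeighborsClassifier(n_neighbors=5)),
], voting='hard')
ensemble.fit(X_train, y_train)

# accuracy of the majority vote on the held-out data
voting_accuracy = ensemble.score(X_test, y_test)
print(voting_accuracy)
```

Whether the vote beats its best member depends on how accurate and how independent the individual models are, so it is worth comparing `voting_accuracy` against each model's own test accuracy.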

{

"cell_type": "code",

"execution_count": 5,

"metadata": {

"collapsed": true

},

"outputs": [],

"source": [

"# read in the vehicle training and testing data\n",

"import zipfile\n",

"import pandas as pd\n",

"\n",

"with zipfile.ZipFile('../datasets/vehicles_train.csv.zip', 'r') as z:\n",

"    with z.open('vehicles_train.csv') as f:\n",

"        train = pd.read_csv(f, index_col=False)\n",

"with zipfile.ZipFile('../datasets/vehicles_test.csv.zip', 'r') as z:\n",

"    with z.open('vehicles_test.csv') as f:\n",

"        test = pd.read_csv(f, index_col=False)\n",

"\n",

"# encode the vehicle type as a binary target: car = 0, truck = 1\n",

"train['vtype'] = train.vtype.map({'car':0, 'truck':1})\n",

"test['vtype'] = test.vtype.map({'car':0, 'truck':1})"

]

},

{

"cell_type": "code",

"execution_count": 6,

"metadata": {},

"outputs": [

{

"data": {

"text/html": [

"<table border=\"1\" class=\"dataframe\">\n",
"  <thead>\n",
"    <tr><th></th><th>price</th><th>year</th><th>miles</th><th>doors</th><th>vtype</th></tr>\n",
"  </thead>\n",
"  <tbody>\n",
"    <tr><th>0</th><td>22000</td><td>2012</td><td>13000</td><td>2</td><td>0</td></tr>\n",
"    <tr><th>1</th><td>14000</td><td>2010</td><td>30000</td><td>2</td><td>0</td></tr>\n",
"    <tr><th>2</th><td>13000</td><td>2010</td><td>73500</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>3</th><td>9500</td><td>2009</td><td>78000</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>4</th><td>9000</td><td>2007</td><td>47000</td><td>4</td><td>0</td></tr>\n",
"  </tbody>\n",
"</table>\n",
"<table border=\"1\" class=\"dataframe\">\n",
"  <thead>\n",
"    <tr><th></th><th>price</th><th>year</th><th>miles</th><th>doors</th><th>vtype</th></tr>\n",
"  </thead>\n",
"  <tbody>\n",
"    <tr><th>13</th><td>1300</td><td>1997</td><td>138000</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>2</th><td>13000</td><td>2010</td><td>73500</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>12</th><td>1800</td><td>1999</td><td>163000</td><td>2</td><td>1</td></tr>\n",
"    <tr><th>2</th><td>13000</td><td>2010</td><td>73500</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>6</th><td>3000</td><td>2004</td><td>177000</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>1</th><td>14000</td><td>2010</td><td>30000</td><td>2</td><td>0</td></tr>\n",
"    <tr><th>3</th><td>9500</td><td>2009</td><td>78000</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>10</th><td>2500</td><td>2003</td><td>190000</td><td>2</td><td>1</td></tr>\n",
"    <tr><th>11</th><td>5000</td><td>2001</td><td>62000</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>9</th><td>1900</td><td>2003</td><td>160000</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>6</th><td>3000</td><td>2004</td><td>177000</td><td>4</td><td>0</td></tr>\n",
"    <tr><th>1</th><td>14000</td><td>2010</td><td>30000</td><td>2</td><td>0</td></tr>\n",
"    <tr><th>0</th><td>22000</td><td>2012</td><td>13000</td><td>2</td><td>0</td></tr>\n",
"    <tr><th>1</th><td>14000</td><td>2010</td><td>30000</td><td>2</td><td>0</td></tr>\n",
"  </tbody>\n",
"</table>\n",
"<table border=\"1\" class=\"dataframe\">\n",
"  <thead>\n",
"    <tr><th></th><th>0</th><th>1</th><th>2</th><th>3</th><th>4</th><th>5</th><th>6</th><th>7</th><th>8</th><th>9</th></tr>\n",
"  </thead>\n",
"  <tbody>\n",
"    <tr><th>0</th><td>1300.0</td><td>1300.0</td><td>3000.0</td><td>4000.0</td><td>1300.0</td><td>4000.0</td><td>4000.0</td><td>4000.0</td><td>3000.0</td><td>4000.0</td></tr>\n",
"    <tr><th>1</th><td>5000.0</td><td>1300.0</td><td>3000.0</td><td>5000.0</td><td>5000.0</td><td>5000.0</td><td>4000.0</td><td>5000.0</td><td>5000.0</td><td>5000.0</td></tr>\n",
"    <tr><th>2</th><td>14000.0</td><td>13000.0</td><td>13000.0</td><td>13000.0</td><td>13000.0</td><td>14000.0</td><td>13000.0</td><td>13000.0</td><td>9500.0</td><td>9000.0</td></tr>\n",
"  </tbody>\n",
"</table>\n",
"<table border=\"1\" class=\"dataframe\">\n",
"  <thead>\n",
"    <tr><th></th><th>AtBat</th><th>Hits</th><th>HmRun</th><th>Runs</th><th>RBI</th><th>Walks</th><th>Years</th><th>CAtBat</th><th>CHits</th><th>CHmRun</th><th>CRuns</th><th>CRBI</th><th>CWalks</th><th>League</th><th>Division</th><th>PutOuts</th><th>Assists</th><th>Errors</th><th>Salary</th><th>NewLeague</th></tr>\n",
"  </thead>\n",
"  <tbody>\n",
"    <tr><th>1</th><td>315</td><td>81</td><td>7</td><td>24</td><td>38</td><td>39</td><td>14</td><td>3449</td><td>835</td><td>69</td><td>321</td><td>414</td><td>375</td><td>N</td><td>W</td><td>632</td><td>43</td><td>10</td><td>475.0</td><td>N</td></tr>\n",
"    <tr><th>2</th><td>479</td><td>130</td><td>18</td><td>66</td><td>72</td><td>76</td><td>3</td><td>1624</td><td>457</td><td>63</td><td>224</td><td>266</td><td>263</td><td>A</td><td>W</td><td>880</td><td>82</td><td>14</td><td>480.0</td><td>A</td></tr>\n",
"    <tr><th>3</th><td>496</td><td>141</td><td>20</td><td>65</td><td>78</td><td>37</td><td>11</td><td>5628</td><td>1575</td><td>225</td><td>828</td><td>838</td><td>354</td><td>N</td><td>E</td><td>200</td><td>11</td><td>3</td><td>500.0</td><td>N</td></tr>\n",
"    <tr><th>4</th><td>321</td><td>87</td><td>10</td><td>39</td><td>42</td><td>30</td><td>2</td><td>396</td><td>101</td><td>12</td><td>48</td><td>46</td><td>33</td><td>N</td><td>E</td><td>805</td><td>40</td><td>4</td><td>91.5</td><td>N</td></tr>\n",
"    <tr><th>5</th><td>594</td><td>169</td><td>4</td><td>74</td><td>51</td><td>35</td><td>11</td><td>4408</td><td>1133</td><td>19</td><td>501</td><td>336</td><td>194</td><td>A</td><td>W</td><td>282</td><td>421</td><td>25</td><td>750.0</td><td>A</td></tr>\n",
"  </tbody>\n",
"</table>\n",
"<table border=\"1\" class=\"dataframe\">\n",
"  <thead>\n",
"    <tr><th></th><th>AtBat</th><th>Hits</th><th>HmRun</th><th>Runs</th><th>RBI</th><th>Walks</th><th>Years</th><th>CAtBat</th><th>CHits</th><th>CHmRun</th><th>CRuns</th><th>CRBI</th><th>CWalks</th><th>League</th><th>Division</th><th>PutOuts</th><th>Assists</th><th>Errors</th><th>Salary</th><th>NewLeague</th></tr>\n",
"  </thead>\n",
"  <tbody>\n",
"    <tr><th>1</th><td>315</td><td>81</td><td>7</td><td>24</td><td>38</td><td>39</td><td>14</td><td>3449</td><td>835</td><td>69</td><td>321</td><td>414</td><td>375</td><td>0</td><td>0</td><td>632</td><td>43</td><td>10</td><td>475.0</td><td>0</td></tr>\n",
"    <tr><th>2</th><td>479</td><td>130</td><td>18</td><td>66</td><td>72</td><td>76</td><td>3</td><td>1624</td><td>457</td><td>63</td><td>224</td><td>266</td><td>263</td><td>1</td><td>0</td><td>880</td><td>82</td><td>14</td><td>480.0</td><td>1</td></tr>\n",
"    <tr><th>3</th><td>496</td><td>141</td><td>20</td><td>65</td><td>78</td><td>37</td><td>11</td><td>5628</td><td>1575</td><td>225</td><td>828</td><td>838</td><td>354</td><td>0</td><td>1</td><td>200</td><td>11</td><td>3</td><td>500.0</td><td>0</td></tr>\n",
"    <tr><th>4</th><td>321</td><td>87</td><td>10</td><td>39</td><td>42</td><td>30</td><td>2</td><td>396</td><td>101</td><td>12</td><td>48</td><td>46</td><td>33</td><td>0</td><td>1</td><td>805</td><td>40</td><td>4</td><td>91.5</td><td>0</td></tr>\n",
"    <tr><th>5</th><td>594</td><td>169</td><td>4</td><td>74</td><td>51</td><td>35</td><td>11</td><td>4408</td><td>1133</td><td>19</td><td>501</td><td>336</td><td>194</td><td>1</td><td>0</td><td>282</td><td>421</td><td>25</td><td>750.0</td><td>1</td></tr>\n",
"  </tbody>\n",
"</table>\n",
"<table border=\"1\" class=\"dataframe\">\n",
"  <thead>\n",
"    <tr><th></th><th>feature</th><th>importance</th></tr>\n",
"  </thead>\n",
"  <tbody>\n",
"    <tr><th>0</th><td>AtBat</td><td>0.000000</td></tr>\n",
"    <tr><th>2</th><td>HmRun</td><td>0.000000</td></tr>\n",
"    <tr><th>3</th><td>Runs</td><td>0.000000</td></tr>\n",
"    <tr><th>4</th><td>RBI</td><td>0.000000</td></tr>\n",
"    <tr><th>5</th><td>Walks</td><td>0.000000</td></tr>\n",
"    <tr><th>7</th><td>League</td><td>0.000000</td></tr>\n",
"    <tr><th>8</th><td>Division</td><td>0.000000</td></tr>\n",
"    <tr><th>9</th><td>PutOuts</td><td>0.000000</td></tr>\n",
"    <tr><th>10</th><td>Assists</td><td>0.000000</td></tr>\n",
"    <tr><th>11</th><td>Errors</td><td>0.000000</td></tr>\n",
"    <tr><th>12</th><td>NewLeague</td><td>0.000000</td></tr>\n",
"    <tr><th>6</th><td>Years</td><td>0.488391</td></tr>\n",
"    <tr><th>1</th><td>Hits</td><td>0.511609</td></tr>\n",
"  </tbody>\n",
"</table>\n",
"<table border=\"1\" class=\"dataframe\">\n",
"  <thead>\n",
"    <tr><th></th><th>feature</th><th>importance</th></tr>\n",
"  </thead>\n",
"  <tbody>\n",
"    <tr><th>7</th><td>League</td><td>0.003603</td></tr>\n",
"    <tr><th>12</th><td>NewLeague</td><td>0.004290</td></tr>\n",
"    <tr><th>8</th><td>Division</td><td>0.005477</td></tr>\n",
"    <tr><th>10</th><td>Assists</td><td>0.023842</td></tr>\n",
"    <tr><th>11</th><td>Errors</td><td>0.028618</td></tr>\n",
"    <tr><th>2</th><td>HmRun</td><td>0.044607</td></tr>\n",
"    <tr><th>9</th><td>PutOuts</td><td>0.060063</td></tr>\n",
"    <tr><th>3</th><td>Runs</td><td>0.071800</td></tr>\n",
"    <tr><th>0</th><td>AtBat</td><td>0.094592</td></tr>\n",
"    <tr><th>4</th><td>RBI</td><td>0.130965</td></tr>\n",
"    <tr><th>5</th><td>Walks</td><td>0.139899</td></tr>\n",
"    <tr><th>1</th><td>Hits</td><td>0.145264</td></tr>\n",
"    <tr><th>6</th><td>Years</td><td>0.246980</td></tr>\n",
"  </tbody>\n",
"</table>"