[](https://mxnet.apache.org)

Apache MXNet for Deep Learning

===========================================

[](https://github.com/apache/mxnet/releases) [](https://github.com/apache/mxnet/stargazers) [](https://github.com/apache/mxnet/network) [](https://github.com/apache/mxnet/graphs/contributors) [](https://github.com/apache/mxnet/issues) [](https://github.com/apache/mxnet/labels/good%20first%20issue) [](https://github.com/apache/mxnet/labels/pr-awaiting-review) [](https://github.com/apache/mxnet/blob/master/LICENSE) [](https://twitter.com/intent/tweet?text=Wow:%20https%3A%2F%2Fgithub.com%2Fapache%2Fmxnet%20@ApacheMXNet) [](https://twitter.com/ApacheMXNet)

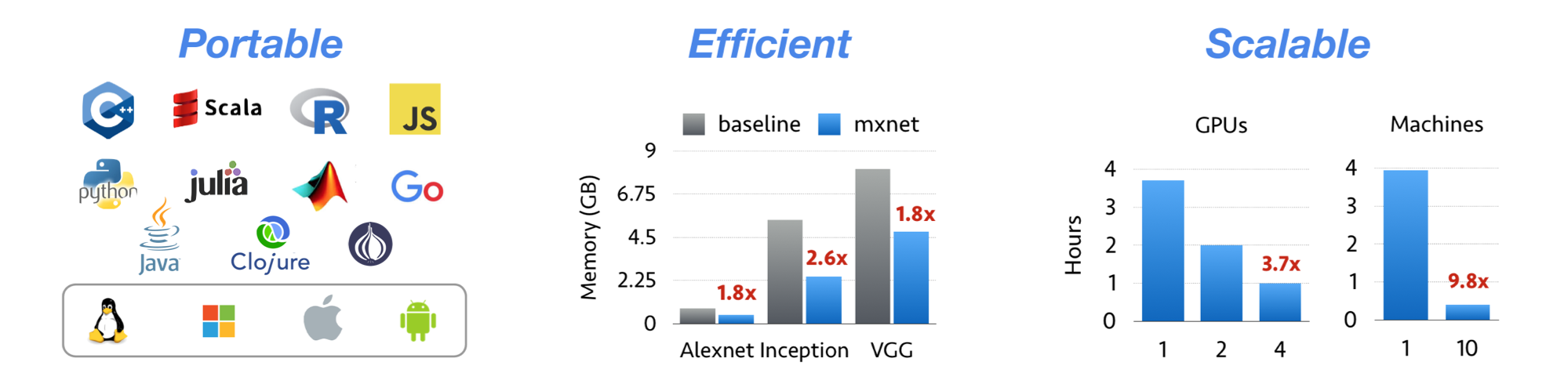

Apache MXNet is a deep learning framework designed for both *efficiency* and *flexibility*.

It allows you to ***mix*** [symbolic and imperative programming](https://mxnet.apache.org/api/architecture/program_model)

to ***maximize*** efficiency and productivity.

At its core, MXNet contains a dynamic dependency scheduler that automatically parallelizes both symbolic and imperative operations on the fly.

A graph optimization layer on top of that makes symbolic execution fast and memory efficient.

MXNet is portable and lightweight, scalable to many GPUs and machines.

Apache MXNet is more than a deep learning project. It is a [community](https://mxnet.apache.org/versions/master/community)

on a mission of democratizing AI. It is a collection of [blue prints and guidelines](https://mxnet.apache.org/api/architecture/overview)

for building deep learning systems, and interesting insights of DL systems for hackers.

Licensed under an [Apache-2.0](https://github.com/apache/mxnet/blob/master/LICENSE) license.

| Branch | Build Status |

|:-------:|:-------------:|

| [master](https://github.com/apache/mxnet/tree/master) | [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/centos-cpu/job/master/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/centos-gpu/job/master/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/clang/job/master/)

[](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/edge/job/master/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/miscellaneous/job/master/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/sanity/job/master/)

[](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/unix-cpu/job/master/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/unix-gpu/job/master/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/website/job/master/)

[](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/windows-cpu/job/master/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/windows-gpu/job/master/) [](https://mxnet.apache.org/) |

| [v1.x](https://github.com/apache/mxnet/tree/v1.x) | [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/centos-cpu/job/v1.x/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/centos-gpu/job/v1.x/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/clang/job/v1.x/)

[](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/edge/job/v1.x/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/miscellaneous/job/v1.x/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/sanity/job/v1.x/)

[](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/unix-cpu/job/v1.x/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/unix-gpu/job/v1.x/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/website/job/v1.x/)

[](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/windows-cpu/job/v1.x/) [](http://jenkins.mxnet-ci.com/job/mxnet-validation/job/windows-gpu/job/v1.x/) [](https://mxnet.apache.org/) |

Features

--------

* NumPy-like programming interface, and is integrated with the new, easy-to-use Gluon 2.0 interface. NumPy users can easily adopt MXNet and start in deep learning.

* Automatic hybridization provides imperative programming with the performance of traditional symbolic programming.

* Lightweight, memory-efficient, and portable to smart devices through native cross-compilation support on ARM, and through ecosystem projects such as [TVM](https://tvm.ai), [TensorRT](https://docs.nvidia.com/deeplearning/tensorrt/developer-guide/index.html), [OpenVINO](https://software.intel.com/content/www/us/en/develop/tools/openvino-toolkit.html).

* Scales up to multi GPUs and distributed setting with auto parallelism through [ps-lite](https://github.com/dmlc/ps-lite), [Horovod](https://github.com/horovod/horovod), and [BytePS](https://github.com/bytedance/byteps).

* Extensible backend that supports full customization, allowing integration with custom accelerator libraries and in-house hardware without the need to maintain a fork.

* Support for [Python](https://mxnet.apache.org/api/python), [Java](https://mxnet.apache.org/api/java), [C++](https://mxnet.apache.org/api/cpp), [R](https://mxnet.apache.org/api/r), [Scala](https://mxnet.apache.org/api/scala), [Clojure](https://mxnet.apache.org/api/clojure), [Go](https://github.com/jdeng/gomxnet/), [Javascript](https://github.com/dmlc/mxnet.js/), [Perl](https://mxnet.apache.org/api/perl), and [Julia](https://mxnet.apache.org/api/julia).

* Cloud-friendly and directly compatible with AWS and Azure.

Contents

--------

* [Installation](https://mxnet.apache.org/get_started)

* [Tutorials](https://mxnet.apache.org/api/python/docs/tutorials/)

* [Ecosystem](https://mxnet.apache.org/ecosystem)

* [API Documentation](https://mxnet.apache.org/api)

* [Examples](https://github.com/apache/mxnet-examples)

* [Stay Connected](#stay-connected)

* [Social Media](#social-media)

What's New

----------

* [1.9.1 Release](https://github.com/apache/mxnet/releases/tag/1.9.1) - MXNet 1.9.1 Release.

* [1.8.0 Release](https://github.com/apache/mxnet/releases/tag/1.8.0) - MXNet 1.8.0 Release.

* [1.7.0 Release](https://github.com/apache/mxnet/releases/tag/1.7.0) - MXNet 1.7.0 Release.

* [1.6.0 Release](https://github.com/apache/mxnet/releases/tag/1.6.0) - MXNet 1.6.0 Release.

* [1.5.1 Release](https://github.com/apache/mxnet/releases/tag/1.5.1) - MXNet 1.5.1 Patch Release.

* [1.5.0 Release](https://github.com/apache/mxnet/releases/tag/1.5.0) - MXNet 1.5.0 Release.

* [1.4.1 Release](https://github.com/apache/mxnet/releases/tag/1.4.1) - MXNet 1.4.1 Patch Release.

* [1.4.0 Release](https://github.com/apache/mxnet/releases/tag/1.4.0) - MXNet 1.4.0 Release.

* [1.3.1 Release](https://github.com/apache/mxnet/releases/tag/1.3.1) - MXNet 1.3.1 Patch Release.

* [1.3.0 Release](https://github.com/apache/mxnet/releases/tag/1.3.0) - MXNet 1.3.0 Release.

* [1.2.0 Release](https://github.com/apache/mxnet/releases/tag/1.2.0) - MXNet 1.2.0 Release.

* [1.1.0 Release](https://github.com/apache/mxnet/releases/tag/1.1.0) - MXNet 1.1.0 Release.

* [1.0.0 Release](https://github.com/apache/mxnet/releases/tag/1.0.0) - MXNet 1.0.0 Release.

* [0.12.1 Release](https://github.com/apache/mxnet/releases/tag/0.12.1) - MXNet 0.12.1 Patch Release.

* [0.12.0 Release](https://github.com/apache/mxnet/releases/tag/0.12.0) - MXNet 0.12.0 Release.

* [0.11.0 Release](https://github.com/apache/mxnet/releases/tag/0.11.0) - MXNet 0.11.0 Release.

* [Apache Incubator](http://incubator.apache.org/projects/mxnet.html) - We are now an Apache Incubator project.

* [0.10.0 Release](https://github.com/apache/mxnet/releases/tag/v0.10.0) - MXNet 0.10.0 Release.

* [0.9.3 Release](./docs/architecture/release_note_0_9.md) - First 0.9 official release.

* [0.9.1 Release (NNVM refactor)](./docs/architecture/release_note_0_9.md) - NNVM branch is merged into master now. An official release will be made soon.

* [0.8.0 Release](https://github.com/apache/mxnet/releases/tag/v0.8.0)

### Ecosystem News

* [oneDNN for Faster CPU Performance](docs/python_docs/python/tutorials/performance/backend/dnnl/dnnl_readme.md)

* [MXNet Memory Monger, Training Deeper Nets with Sublinear Memory Cost](https://github.com/dmlc/mxnet-memonger)

* [Tutorial for NVidia GTC 2016](https://github.com/dmlc/mxnet-gtc-tutorial)

* [MXNet.js: Javascript Package for Deep Learning in Browser (without server)](https://github.com/dmlc/mxnet.js/)

* [Guide to Creating New Operators (Layers)](https://mxnet.apache.org/api/faq/new_op)

* [Go binding for inference](https://github.com/songtianyi/go-mxnet-predictor)

Stay Connected

--------------

| Channel | Purpose |

|---|---|

| [Follow MXNet Development on Github](https://github.com/apache/mxnet/issues) | See what's going on in the MXNet project. |

| [MXNet Confluence Wiki for Developers](https://cwiki.apache.org/confluence/display/MXNET/Apache+MXNet+Home) | MXNet developer wiki for information related to project development, maintained by contributors and developers. To request write access, send an email to [send request to the dev list](mailto:dev@mxnet.apache.org?subject=Requesting%20CWiki%20write%20access) . |

| [dev@mxnet.apache.org mailing list](https://lists.apache.org/list.html?dev@mxnet.apache.org) | The "dev list". Discussions about the development of MXNet. To subscribe, send an email to [dev-subscribe@mxnet.apache.org](mailto:dev-subscribe@mxnet.apache.org) . |

| [discuss.mxnet.io](https://discuss.mxnet.io) | Asking & answering MXNet usage questions. |

| [Apache Slack #mxnet Channel](https://the-asf.slack.com/archives/C7FN4FCP9) | Connect with MXNet and other Apache developers. To join the MXNet slack channel [send request to the dev list](mailto:dev@mxnet.apache.org?subject=Requesting%20slack%20access) . |

| [Follow MXNet on Social Media](#social-media) | Get updates about new features and events. |

### Social Media

Keep connected with the latest MXNet news and updates.

Apache MXNet on Twitter

Apache MXNet on Twitter

Contributor and user blogs about MXNet

Contributor and user blogs about MXNet

Discuss MXNet on r/mxnet

Discuss MXNet on r/mxnet

Apache MXNet YouTube channel

Apache MXNet YouTube channel

Apache MXNet on LinkedIn

Apache MXNet on LinkedIn

History

-------

MXNet emerged from a collaboration by the authors of [cxxnet](https://github.com/dmlc/cxxnet), [minerva](https://github.com/dmlc/minerva), and [purine2](https://github.com/purine/purine2). The project reflects what we have learned from the past projects. MXNet combines aspects of each of these projects to achieve flexibility, speed, and memory efficiency.

Tianqi Chen, Mu Li, Yutian Li, Min Lin, Naiyan Wang, Minjie Wang, Tianjun Xiao,

Bing Xu, Chiyuan Zhang, and Zheng Zhang.

[MXNet: A Flexible and Efficient Machine Learning Library for Heterogeneous Distributed Systems](https://github.com/dmlc/web-data/raw/master/mxnet/paper/mxnet-learningsys.pdf).

In Neural Information Processing Systems, Workshop on Machine Learning Systems, 2015