"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## An Introduction to Bayesian Statistical Analysis"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Before we jump in to model-building and using MCMC to do wonderful things, it is useful to understand a few of the theoretical underpinnings of the Bayesian statistical paradigm. A little theory (and I do mean a *little*) goes a long way towards being able to apply the methods correctly and effectively.\n",

"\n",

"There are several introductory references to Bayesian statistics that go well beyond what we will cover here. Some suggestions:\n",

"\n",

"[Chapter 11 of Schaum's Outline of Probability and Statistics](https://www.kobo.com/us/en/ebook/schaum-s-outline-of-probability-and-statistics-4th-edition)\n",

"\n",

"[Introduction to Bayesian Statistics](https://www.stat.auckland.ac.nz/~brewer/stats331.pdf)\n",

"\n",

"[Bayesian Statistics](https://www.york.ac.uk/depts/maths/histstat/pml1/bayes/book.htm)\n",

"\n",

"\n",

"## What *is* Bayesian Statistical Analysis?\n",

"\n",

"Though many of you will have taken a statistics course or two during your undergraduate (or graduate) education, most of those who have will likely not have had a course in *Bayesian* statistics. Most introductory courses, particularly for non-statisticians, still do not cover Bayesian methods at all, except perhaps to derive Bayes' formula as a trivial rearrangement of the definition of conditional probability. Even today, Bayesian courses are typically tacked onto the curriculum, rather than being integrated into the program.\n",

"\n",

"In fact, Bayesian statistics is not just a particular method, or even a class of methods; it is an entirely different paradigm for doing statistical analysis.\n",

"\n",

"> Practical methods for making inferences from data using probability models for quantities we observe and about which we wish to learn.\n",

"*-- Gelman et al. 2013*\n",

"\n",

"A Bayesian model is described by parameters, uncertainty in those parameters is described using probability distributions."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

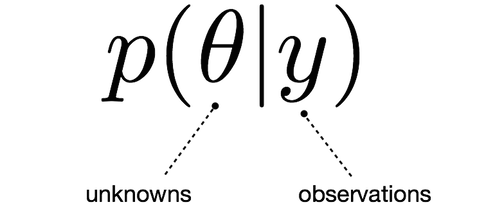

"All conclusions from Bayesian statistical procedures are stated in terms of *probability statements*\n",

"\n",

"\n",

"\n",

"This confers several benefits to the analyst, including:\n",

"\n",

"- ease of interpretation, summarization of uncertainty\n",

"- can incorporate uncertainty in parent parameters\n",

"- easy to calculate summary statistics"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## What is Probability?\n",

"\n",

"> *Misunderstanding of probability may be the greatest of all impediments to scientific literacy.*\n",

"> — Stephen Jay Gould\n",

"\n",

"It is useful to start with defining what probability is. There are three main categories:\n",

"\n",

"### 1. Classical probability\n",

"\n",

"

\n",

"$Pr(X=x) = \\frac{\\text{# x outcomes}}{\\text{# possible outcomes}}$\n",

"

\n",

"\n",

"Classical probability is an assessment of **possible** outcomes of elementary events. Elementary events are assumed to be equally likely.\n",

"\n",

"### 2. Frequentist probability\n",

"\n",

"

\n",

"$Pr(X=x) = \\lim_{n \\rightarrow \\infty} \\frac{\\text{# times x has occurred}}{\\text{# independent and identical trials}}$\n",

"

\n",

"\n",

"This interpretation considers probability to be the relative frequency \"in the long run\" of outcomes.\n",

"\n",

"### 3. Subjective probability\n",

"\n",

"

\n",

"$Pr(X=x)$\n",

"

\n",

"\n",

"Subjective probability is a measure of one's uncertainty in the value of \\\\(X\\\\). It characterizes the state of knowledge regarding some unknown quantity using probability.\n",

"\n",

"It is not associated with long-term frequencies nor with equal-probability events.\n",

"\n",

"For example:\n",

"\n",

"- X = the true prevalence of diabetes in Austin is < 15%\n",

"- X = the blood type of the person sitting next to you is type A\n",

"- X = the Nashville Predators will win next year's Stanley Cup\n",

"- X = it is raining in Nashville\n"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Bayesian vs Frequentist Statistics: What's the difference?\n",

"\n",

"See the [VanderPlas paper and video](http://conference.scipy.org/proceedings/scipy2014/pdfs/vanderplas.pdf).\n",

"\n",

"\n",

"\n",

"Any statistical paradigm, Bayesian or otherwise, involves at least the following: \n",

"\n",

"1. Some **unknown quantities** about which we are interested in learning or testing. We call these *parameters*.\n",

"2. Some **data** which have been observed, and hopefully contain information about (1).\n",

"3. One or more **models** that relate the data to the parameters, and is the instrument that is used to learn.\n"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### The Frequentist World View\n",

"\n",

"\n",

"\n",

"- The data that have been observed are considered **random**, because they are realizations of random processes, and hence will vary each time one goes to observe the system.\n",

"- Model parameters are considered **fixed**. The parameters' values are unknown, but they are fixed, and so we *condition* on them.\n",

"\n",

"In mathematical notation, this implies a (very) general model of the following form:\n",

"\n",

"

\n",

"$f(y | \\theta)$\n",

"

\n",

"\n",

"Here, the model \\\\(f\\\\) accepts data values \\\\(y\\\\) as an argument, conditional on particular values of \\\\(\\theta\\\\).\n",

"\n",

"Frequentist inference typically involves deriving **estimators** for the unknown parameters. Estimators are formulae that return estimates for particular estimands, as a function of data. They are selected based on some chosen optimality criterion, such as *unbiasedness*, *variance minimization*, or *efficiency*.\n",

"\n",

 For example, lets say">
"> For example, let's say that we have collected some data on the prevalence of autism spectrum disorder (ASD) in some defined population. Our sample includes \\\\(n\\\\) sampled children, \\\\(y\\\\) of them having been diagnosed with autism. A frequentist estimator of the prevalence \\\\(p\\\\) is:\n",

"\n",

">
">\n",

"> $\\hat{p} = \\frac{y}{n}$\n",

">\n",

"\n",

"> Why this particular function? Because it can be shown to be unbiased and minimum-variance.\n",

"\n",

"It is important to note that new estimators need to be derived for every estimand that is introduced.\n",

"\n",

"### The Bayesian World View\n",

"\n",

"\n",

"\n",

"- Data are considered **fixed**. They used to be random, but once they were written into your lab notebook/spreadsheet/IPython notebook they do not change.\n",

"- Model parameters themselves may not be random, but Bayesians use probability distribtutions to describe their uncertainty in parameter values, and are therefore treated as **random**. In some cases, it is useful to consider parameters as having been sampled from probability distributions.\n",

"\n",

"This implies the following form:\n",

"\n",

"

\n",

"$p(\\theta | y)$\n",

"

\n",

"\n",

"This formulation used to be referred to as ***inverse probability***, because it infers from observations to parameters, or from effects to causes.\n",

"\n",

"Bayesians do not seek new estimators for every estimation problem they encounter. There is only one estimator for Bayesian inference: **Bayes' Formula**."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

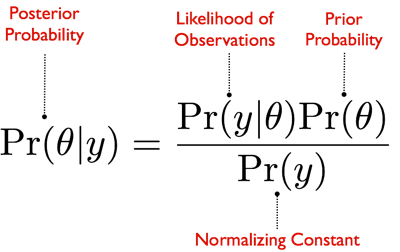

"## Bayes' Formula\n",

"\n",

"Given two events A and B, the conditional probability of A given that B is true is expressed as follows:\n",

"\n",

"$Pr(A|B) = \\frac{Pr(B|A)Pr(A)}{Pr(B)}$\n",

"\n",

"where P(B)>0. Although Bayes' theorem is a fundamental result of probability theory, it has a specific interpretation in Bayesian statistics. \n",

"\n",

"In the above equation, A usually represents a proposition (such as the statement that a coin lands on heads fifty percent of the time) and B represents the evidence, or new data that is to be taken into account (such as the result of a series of coin flips). P(A) is the **prior** probability of A which expresses one's beliefs about A before evidence is taken into account. The prior probability may also quantify prior knowledge or information about A. \n",

"\n",

"P(B|A) is the **likelihood**, which can be interpreted as the probability of the evidence B given that A is true. The likelihood quantifies the extent to which the evidence B supports the proposition A. \n",

"\n",

"P(A|B) is the **posterior** probability, the probability of the proposition A after taking the evidence B into account. Essentially, Bayes' theorem updates one's prior beliefs P(A) after considering the new evidence B.\n",

"\n",

"P(B) is the **marginal likelihood**, which can be interpreted as the sum of the conditional probability of B under all possible events $A_i$\n",

"in the sample\n",

"space \n",

"\n",

"- For two events $P(B) = P(B|A)P(A) + P(B|\\bar{A})P(\\bar{A})$\n"

]

},
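
{

"cell_type": "markdown",

"metadata": {},

"source": [

"As a minimal worked sketch of the two-event form above, take some purely hypothetical numbers (assumptions for illustration only, not data from any study): $P(A) = 0.01$, $P(B|A) = 0.95$ and $P(B|\\bar{A}) = 0.05$. The marginal likelihood and the posterior then work out to:\n",

"\n",

"\\\\[\\begin{aligned}\n",

"P(B) &= (0.95)(0.01) + (0.05)(0.99) = 0.059 \\cr\n",

"P(A|B) &= \\frac{(0.95)(0.01)}{0.059} \\approx 0.161\n",

"\\end{aligned}\\\\]\n",

"\n",

"Even though the likelihood strongly favors $A$, the small prior keeps the posterior probability modest under these assumed values."

]

},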

{

"cell_type": "markdown",

"metadata": {},

"source": [

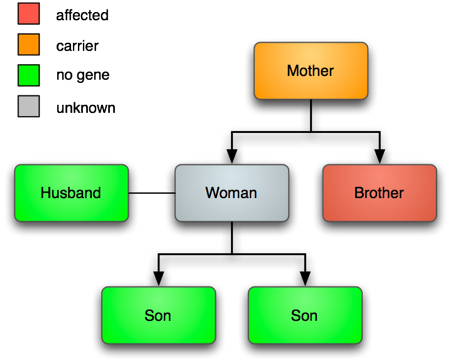

"### Example: Genetic probabilities\n",

"\n",

"Let's put Bayesian inference into action using a very simple example. I've chosen this example because it is one of the rare occasions where the posterior can be calculated by hand. We will show how data can be used to update our belief in competing hypotheses.\n",

"\n",

"Hemophilia is a rare genetic disorder that impairs the ability for the body's clotting factors to coagualate the blood in response to broken blood vessels. The disease is an **x-linked recessive** trait, meaning that there is only one copy of the gene in males but two in females, and the trait can be masked by the dominant allele of the gene. \n",

"\n",

"This implies that males with 1 gene are *affected*, while females with 1 gene are unaffected, but *carriers* of the disease. Having 2 copies of the disease is fatal, so this genotype does not exist in the population.\n",

"\n",

"In this example, consider a woman whose mother is a carrier (because her brother is affected) and who marries an unaffected man. Let's now observe some data: the woman has two consecutive (non-twin) sons who are unaffected. We are interested in determining **if the woman is a carrier**.\n",

"\n",

"\n",

"\n",

"To set up this problem, we need to set up our probability model. The unknown quantity of interest is simply an indicator variable \\\\(W\\\\) that equals 1 if the woman is affected, and zero if she is not. We are interested in the probability that the variable equals one, given what we have observed:\n",

"\n",

"\\\\[Pr(W=1 | s_1=0, s_2=0)\\\\]\n",

"\n",

"Our prior information is based on what we know about the woman's ancestry: her mother was a carrier. Hence, the prior is \\\\(Pr(W=1) = 0.5\\\\). Another way of expressing this is in terms of the **prior odds**, or:\n",

"\n",

"\\\\[O(W=1) = \\frac{Pr(W=1)}{Pr(W=0)} = 1\\\\]\n",

"\n",

"Now for the likelihood: The form of this function is:\n",

"\n",

"\\\\[L(W | s_1=0, s_2=0)\\\\]\n",

"\n",

"This can be calculated as the probability of observing the data for any passed value for the parameter. For this simple problem, the likelihood takes only two possible values:\n",

"\n",

"\\\\[\\begin{aligned}\n",

"L(W=1 &| s_1=0, s_2=0) = (0.5)(0.5) = 0.25 \\cr\n",

"L(W=0 &| s_1=0, s_2=0) = (1)(1) = 1\n",

"\\end{aligned}\\\\]\n",

"\n",

"With all the pieces in place, we can now apply Bayes' formula to calculate the posterior probability that the woman is a carrier:\n",

"\n",

"\\\\[\\begin{aligned}\n",

"Pr(W=1 | s_1=0, s_2=0) &= \\frac{L(W=1 | s_1=0, s_2=0) Pr(W=1)}{L(W=1 | s_1=0, s_2=0) Pr(W=1) + L(W=0 | s_1=0, s_2=0) Pr(W=0)} \\cr\n",

" &= \\frac{(0.25)(0.5)}{(0.25)(0.5) + (1)(0.5)} \\cr\n",

" &= 0.2\n",

"\\end{aligned}\\\\]\n",

"\n",

"Hence, there is a 0.2 probability of the woman being a carrier.\n",

"\n",

"Its a bit trivial, but we can code this in Python:"

]

},

{

"cell_type": "code",

"execution_count": 13,

"metadata": {},

"outputs": [],

"source": [

"prior = 0.5\n",

"p = 0.5\n",

"\n",

"L = lambda w, s: np.prod([(1-i, p**i * (1-p)**(1-i))[w] for i in s])"

]

},

{

"cell_type": "code",

"execution_count": 14,

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"0.2"

]

},

"execution_count": 14,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"s = [0,0]\n",

"\n",

"post = L(1, s) * prior / (L(1, s) * prior + L(0, s) * (1 - prior))\n",

"post"

]

},
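
{

"cell_type": "markdown",

"metadata": {},

"source": [

"The same result can be reached through the odds form introduced earlier. As a sketch of the arithmetic, using only the prior odds and likelihood values from the example, the posterior odds are the prior odds multiplied by the likelihood ratio:\n",

"\n",

"\\\\[O(W=1 | s_1=0, s_2=0) = O(W=1) \\times \\frac{L(W=1 | s_1=0, s_2=0)}{L(W=0 | s_1=0, s_2=0)} = 1 \\times \\frac{0.25}{1} = 0.25\\\\]\n",

"\n",

"Converting the odds back to a probability gives $0.25 / (1 + 0.25) = 0.2$, in agreement with the value computed above."

]

},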

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Now, what happens if the woman has a third unaffected child? What is our estimate of her probability of being a carrier then? \n",

"\n",

"Bayes' formula makes it easy to update analyses with new information, in a sequential fashion. We simply assign the posterior from the previous analysis to be the prior for the new analysis, and proceed as before:"

]

},

{

"cell_type": "code",

"execution_count": 15,

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"0.5"

]

},

"execution_count": 15,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"L(1, [0])"

]

},

{

"cell_type": "code",

"execution_count": 16,

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"0.11111111111111112"

]

},

"execution_count": 16,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"s = [0]\n",

"prior = post\n",

"\n",

"L(1, s) * prior / (L(1, s) * prior + L(0, s) * (1 - prior))"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Thus, observing a third unaffected child has further reduced our belief that the mother is a carrier."

]

},
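
{

"cell_type": "markdown",

"metadata": {},

"source": [

"Written out by hand as a check on the sequential update (with \\\\(s_3=0\\\\) denoting the third unaffected son, a label introduced here only for illustration), the previous posterior of 0.2 serves as the new prior:\n",

"\n",

"\\\\[Pr(W=1 | s_3=0) = \\frac{(0.5)(0.2)}{(0.5)(0.2) + (1)(0.8)} = \\frac{0.1}{0.9} \\approx 0.111\\\\]\n",

"\n",

"Processing all three sons at once from the original prior of 0.5 gives the same answer, since $(0.125)(0.5) / [(0.125)(0.5) + (1)(0.5)] = 0.0625 / 0.5625 \\approx 0.111$."

]

},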

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## More on Bayesian Terminology\n",

"\n",

"Replacing Bayes' Formula with conventional Bayes terms:\n",

"\n",

"\n",

"\n",

"The equation expresses how our belief about the value of \\\\(\\theta\\\\), as expressed by the **prior distribution** \\\\(P(\\theta)\\\\) is reallocated following the observation of the data \\\\(y\\\\), as expressed by the posterior distribution the posterior distribution.\n",

"\n",

"### Marginal\n",

"\n",

"The denominator \\\\(P(y)\\\\) is the likelihood integrated over all \\\\(\\theta\\\\):\n",

"\n",

"

\n",

"\n",

"This usually cannot be calculated directly. However it is just a normalization constant which doesn't depend on the parameter; the act of summation washes out whatever info we had about the parameter. Hence it can often be ignored;The normalising constant makes sure that the resulting posterior distribution is a true probability distribution by ensuring that the sum of the distribution is equal to 1.\n",

"\n",

"In some cases we don’t care about this property of the distribution. We only care about where the peak of the distribution occurs, regardless of whether the distribution is normalised or not\n",

"\n",

"Unfortunately sometimes we are obliged to calculate it. The intractability of this integral is one of the factors that has contributed to the under-utilization of Bayesian methods by statisticians.\n",

"\n",

"### Prior\n",

"\n",

"Once considered a controversial aspect of Bayesian analysis, the prior distribution characterizes what is known about an unknown quantity before observing the data from the present study. Thus, it represents the information state of that parameter. It can be used to reflect the information obtained in previous studies, to constrain the parameter to plausible values, or to represent the population of possible parameter values, of which the current study's parameter value can be considered a sample.\n",

"\n",

"### Likelihood\n",

"\n",

"The likelihood represents the information in the observed data, and is used to update prior distributions to posterior distributions. This updating of belief is justified becuase of the **likelihood principle**, which states:\n",

"\n",

"> Following observation of \\\\(y\\\\), the likelihood \\\\(L(\\theta|y)\\\\) contains all experimental information from \\\\(y\\\\) about the unknown \\\\(\\theta\\\\).\n",

"\n",

"Bayesian analysis satisfies the likelihood principle because the posterior distribution's dependence on the data is only through the likelihood. In comparison, most frequentist inference procedures violate the likelihood principle, because inference will depend on the design of the trial or experiment.\n",

"\n",

"What is a likelihood function? It is closely related to the probability density (or mass) function. Taking a common example, consider some data that are binomially distributed (that is, they describe the outcomes of \\\\(n\\\\) binary events). Here is the binomial sampling distribution:\n",

"\n",

"$p(Y|\\theta) = {n \\choose y} \\theta^{y} (1-\\theta)^{n-y}$\n",

"\n",

"We can code this easily in Python:"

]

},

{

"cell_type": "code",

"execution_count": 8,

"metadata": {},

"outputs": [],

"source": [

"from scipy.special import comb\n",

"\n",

"pbinom = lambda y, n, p: comb(n, y) * p**y * (1-p)**(n-y)"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"This function returns the probability of observing \\\\(y\\\\) events from \\\\(n\\\\) trials, where events occur independently with probability \\\\(p\\\\)."

]

},

{

"cell_type": "code",

"execution_count": 9,

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"0.1171875"

]

},

"execution_count": 9,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"pbinom(3, 10, 0.5)"

]

},
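
{

"cell_type": "markdown",

"metadata": {},

"source": [

"As a hand check of the value returned above, substituting \\\\(y=3\\\\), \\\\(n=10\\\\) and \\\\(\\theta=0.5\\\\) into the binomial sampling distribution gives:\n",

"\n",

"\\\\[{10 \\choose 3} (0.5)^{3} (0.5)^{7} = \\frac{120}{1024} = 0.1171875\\\\]"

]

},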

{

"cell_type": "code",

"execution_count": 10,

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"7.450580596923828e-07"

]

},

"execution_count": 10,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"pbinom(1, 25, 0.5)"

]

},

{

"cell_type": "code",

"execution_count": 11,

"metadata": {},

"outputs": [

{

"data": {

"image/png": "iVBORw0KGgoAAAANSUhEUgAAAX0AAAD8CAYAAACb4nSYAAAABHNCSVQICAgIfAhkiAAAAAlwSFlzAAALEgAACxIB0t1+/AAAADl0RVh0U29mdHdhcmUAbWF0cGxvdGxpYiB2ZXJzaW9uIDMuMC4yLCBodHRwOi8vbWF0cGxvdGxpYi5vcmcvOIA7rQAAEcpJREFUeJzt3X+MHOd93/H3h2Rl52IkkaNr0Yg6Hp0widWmtYqNktaoUdS/6CQQDcSG6Z4LpRFwQFG1ad2iUCqgAhgQcJKicP8QWh1SpUZ6ieIoAXIJ7CiK7SR/BHZ4tFwnkiKYZkjqSrdiKtctSsMqrW//mFWzPB19c+TeLm+f9ws4zM4zz+x+H+7uZ4czszupKiRJbdg37QIkSZNj6EtSQwx9SWqIoS9JDTH0Jakhhr4kNcTQl6SGGPqS1BBDX5IacmDaBWx222231eLi4rTLkKQ95fTp039WVfPb9bvpQn9xcZH19fVplyFJe0qS8336uXtHkhrSK/STHE3yXJIzSR7YYvkHkzyT5PNJPpHk0Miyryf53PBvbZzFS5J2ZtvdO0n2Aw8Dbwc2gFNJ1qrqmZFuTwGDqrqc5B8CPwO8b7jsq1X1pjHXLUm6Dn229O8GzlTV2ap6CXgMODbaoao+VVWXh7OfBg6Ot0xJ0jj0Cf3bgedH5jeGbddyH/DxkfnXJllP8ukk776OGiVJY9In9LNF25ZXXknyAWAA/OxI80JVDYC/B3w4yXdusd7y8INh/dKlSz1KkiZsdRUWF2Hfvm66ujrtiqTr0if0N4A7RuYPAhc3d0ryNuBB4J6q+tor7VV1cTg9C/wucNfmdatqpaoGVTWYn9/2NFNpslZXYXkZzp+Hqm66vGzwa0/qE/qngCNJDie5BTgOXHUWTpK7gEfoAv+FkfZbk7xmePs24M3A6AFg6eb34INw+fLVbZcvd+3SHrPt2TtVdSXJ/cATwH7g0ap6OskJYL2q1uh257wO+JUkABeq6h7gjcAjSV6m+4D50KazfqSb34ULO2uXbmK9vpFbVR8DPrap7V+P3H7bNdb7A+D7bqRAaeoWFrpdOlu1S3uM38iVtnPyJMzNXd02N9e1S3uMoS9tZ2kJVlbg0CFIuunKStcu7TE33Q+uSTelpSVDXjPBLX1JaoihL0kNMfQlqSGGviQ1xNCXpIYY+pLUEENfkhpi6EtSQwx9SWqIoS9JDTH0Jakhhr4kNcTQl6SGGPqS1BBDX5IaYuhLUkMMfUlqiKEvSQ0x9CWpIYa+JDXE0Jekhhj6ktQQQ1+SGmLoS1JDDH1Jaoihr71jdRUWF2Hfvm66ujrtinZfi2PWrjow7QKkXlZXYXkZLl/u5s+f7+YBlpamV9duanHM2nWpqmnXcJXBYFDr6+vTLkM3m8XFLvQ2O3QIzp2bdDWT0eKYdd2SnK6qwXb93L2jveHChZ21z4IWx6xd1yv0kxxN8lySM0ke2GL5B5M8k+TzST6R5NDIsnuTfGH4d+84i1dDFhZ21j4LWhyzdt22oZ9kP/Aw8C7gTuD9Se7c1O0pYFBVfw14HPiZ4bqvBx4CfgC4G3goya3jK1/NOHkS5uaubpub69pnVYtj1q7rs6V/N3Cmqs5W1UvAY8Cx0Q5V9amqGh5t4tPAweHtdwJPVtWLVfVl4Eng6HhKV1OWlmBlpdufnXTTlZXZPqDZ4pi16/qcvXM78PzI/Abdlvu13Ad8/Buse/vmFZIsA8sAC/7XVdeytNRe4LU4Zu2qPlv62aJty1N+knwAGAA/u5N1q2qlqgZVNZifn+9RkiTpevQJ/Q3gjpH5g8DFzZ2SvA14ELinqr62k3UlSZPRJ/RPAUeSHE5yC3AcWBvtkOQu4BG6wH9hZNETwDuS3Do8gPuOYZskaQq23adfVVeS3E8X1vuBR6vq6SQngPWqWqPbnfM64FeSAFyoqnuq6sUkP0X3wQFwoqpe3JWRSJK25TdyJW
kG+I1cSdKrGPqS1BBDX5IaYuhLUkMMfUlqiKEvSQ0x9CWpIYa+JDXE0Jekhhj6ktQQQ1+SGmLoS1JDDH1JaoihL0kNMfQlqSGGviQ1xNCXpIYY+pLUEENfkhpi6EtSQwx9SWqIoS9JDTH0Jakhhr4kNcTQl6SGGPqS1BBDX5IaYuhLUkMMfUlqiKEvSQ0x9CWpIb1CP8nRJM8lOZPkgS2WvyXJZ5NcSfKeTcu+nuRzw7+1cRUuSdq5A9t1SLIfeBh4O7ABnEqyVlXPjHS7APwY8C+2uIuvVtWbxlCrJOkGbRv6wN3Amao6C5DkMeAY8P9Dv6rODZe9vAs1SpLGpM/unduB50fmN4Ztfb02yXqSTyd5946qkySNVZ8t/WzRVjt4jIWqupjkDcAnk/xRVX3xqgdIloFlgIWFhR3ctSRpJ/ps6W8Ad4zMHwQu9n2Aqro4nJ4Ffhe4a4s+K1U1qKrB/Px837uWJO1Qn9A/BRxJcjjJLcBxoNdZOEluTfKa4e3bgDczcixAkjRZ24Z+VV0B7geeAJ4FPlpVTyc5keQegCTfn2QDeC/wSJKnh6u/EVhP8l+ATwEf2nTWjyRpglK1k93zu28wGNT6+vq0y5CkPSXJ6aoabNfPb+RKUkMMfUlqiKEvSQ0x9CWpIYa+JDXE0Jekhhj6ktQQQ1+SGmLoS1JDDH1JaoihL0kNMfQlqSGGviQ1xNCXpIYY+pLUEENfkhpi6EtSQwx9SWqIoa+dW12FxUXYt6+brq5OuyLtBp/nmXRg2gVoj1ldheVluHy5mz9/vpsHWFqaXl0aL5/nmeWF0bUzi4tdAGx26BCcOzfparRbfJ73HC+Mrt1x4cLO2rU3+TzPLENfO7OwsLN27U0+zzPL0NfOnDwJc3NXt83Nde2aHT7PM8vQ184sLcHKSrdvN+mmKyse3Js1Ps8zywO5kjQDPJArSXoVQ1+SGmLoS1JDDH1JaoihL0kN6RX6SY4meS7JmSQPbLH8LUk+m+RKkvdsWnZvki8M/+4dV+GSpJ3bNvST7AceBt4F3Am8P8mdm7pdAH4M+MVN674eeAj4AeBu4KEkt9542ZKk69FnS/9u4ExVna2ql4DHgGOjHarqXFV9Hnh507rvBJ6sqher6svAk8DRMdQtSboOfUL/duD5kfmNYVsfN7KuJGnM+oR+tmjr+zXeXusmWU6ynmT90qVLPe9akrRTfUJ/A7hjZP4gcLHn/fdat6pWqmpQVYP5+fmedy1J2qk+oX8KOJLkcJJbgOPAWs/7fwJ4R5Jbhwdw3zFskyRNwbahX1VXgPvpwvpZ4KNV9XSSE0nuAUjy/Uk2gPcCjyR5erjui8BP0X1wnAJODNskSVPgr2xK0gzwVzYlSa9i6EtSQwx9SWqIoS9JDTH0Jakhhr4kNcTQl6SGGPqS1BBDX5IaYuhLUkMMfUlqiKEvSQ0x9CWpIYa+JDXE0Jekhhj6ktQQQ1+SGmLoS1JDDH1JaoihL0kNMfQlqSGGviQ1xNCXpIYY+pLUEENfkhpi6EtSQwx9SWqIoS9JDTH0Jakhhr4kNcTQl6SGGPqS1JBeoZ/kaJLnkpxJ8sAWy1+T5JeHyz+TZHHYvpjkq0k+N/z7D+MtX5K0Ewe265BkP/Aw8HZgAziVZK2qnhnpdh/w5ar6riTHgZ8G3jdc9sWqetOY65YkXYc+W/p3A2eq6mxVvQQ8Bhzb1OcY8JHh7ceBtybJ+MqUJI1Dn9C/HXh+ZH5j2LZln6q6AnwF+PbhssNJnkrye0n+9lYPkGQ5yXqS9UuXLu1oAJKk/vqE/lZb7NWzz5eAhaq6C/gg8ItJvuVVHatWqmpQVYP5+fkeJUmSrkef0N8A7hiZPwhcvFafJAeAbwVerKqvVdX/AKiq08AXge++0aI1tLoKi4uwb183XV2ddkXSjfN1vav6hP4p4EiSw0luAY4Da5v6rAH3Dm+/B/hkVVWS+eGBYJK8ATgCnB1P6Y1bXYXlZTh/Hqq66fKybx
Dtbb6ud12qNu+p2aJT8kPAh4H9wKNVdTLJCWC9qtaSvBb4BeAu4EXgeFWdTfKjwAngCvB14KGq+o1v9FiDwaDW19dvaFBNWFzs3hCbHToE585NuhppPHxdX7ckp6tqsG2/PqE/SYZ+T/v2dVtCmyXw8suTr0caB1/X161v6PuN3L1qYWFn7dJe4Ot61xn6e9XJkzA3d3Xb3FzXLu1Vvq53naG/Vy0twcpKt68z6aYrK127tFf5ut517tOXpBngPn1J0qsY+pLUEENfkhpi6EtSQwx9SWqIoS9JDTH0Jakhhr4kNcTQl6SGGPqS1BBDX5IaYuhLUkMMfUlqiKEvSQ0x9CWpIYa+JDXE0Jekhhj6ktQQQ1+SGmLoS1JDDP0btboKi4uwb183XV2ddkWSrlcD7+cD0y5gT1tdheVluHy5mz9/vpsHWFqaXl2Sdq6R93Oqato1XGUwGNT6+vq0y+hncbF7YWx26BCcOzfpaiTdiD3+fk5yuqoG2/Vz986NuHBhZ+2Sbl6NvJ8N/RuxsLCzdkk3r0bez4b+jTh5Eubmrm6bm+vaJe0tjbyfDf0bsbQEKyvdPr+km66szNRBH6kZjbyfe4V+kqNJnktyJskDWyx/TZJfHi7/TJLFkWU/OWx/Lsk7x1f6JtM61WppqTvI8/LL3XTGXiBSU6b1fp5gfm17ymaS/cDDwNuBDeBUkrWqemak233Al6vqu5IcB34aeF+SO4HjwF8BvgP4nSTfXVVfH+soGjnVStIMmnB+9dnSvxs4U1Vnq+ol4DHg2KY+x4CPDG8/Drw1SYbtj1XV16rqT4Ezw/sbrwcf/PN/sFdcvty1S9LNbML51Sf0bweeH5nfGLZt2aeqrgBfAb6957o3rpFTrSTNoAnnV5/QzxZtm7/Rda0+fdYlyXKS9STrly5d6lHSJo2caiVpBk04v/qE/gZwx8j8QeDitfokOQB8K/Biz3WpqpWqGlTVYH5+vn/1r2jkVCtJM2jC+dUn9E8BR5IcTnIL3YHZtU191oB7h7ffA3yyut93WAOOD8/uOQwcAf5wPKWPaORUK0kzaML51eu3d5L8EPBhYD/waFWdTHICWK+qtSSvBX4BuItuC/94VZ0drvsg8OPAFeCfVtXHv9Fj7anf3pGkm0Tf397xB9ckaQb4g2uSpFcx9CWpIYa+JDXE0Jekhtx0B3KTXAK2uHxNb7cBfzamcvaK1sbc2njBMbfiRsZ8qKq2/aLTTRf6NyrJep8j2LOktTG3Nl5wzK2YxJjdvSNJDTH0Jakhsxj6K9MuYApaG3Nr4wXH3IpdH/PM7dOXJF3bLG7pS5KuYWZCf7vr+M6aJHck+VSSZ5M8neQnpl3TpCTZn+SpJL857VomIcm3JXk8yZ8Mn++/Oe2adluSfzZ8Xf9xkl8a/qjjTEnyaJIXkvzxSNvrkzyZ5AvD6a3jftyZCP2R6/i+C7gTeP/w+ryz7Arwz6vqjcAPAv+ogTG/4ieAZ6ddxAT9O+C3qup7gb/OjI89ye3APwEGVfVX6X7d9/h0q9oV/wk4uqntAeATVXUE+MRwfqxmIvTpdx3fmVJVX6qqzw5v/2+6IBj/pShvMkkOAj8M/Ny0a5mEJN8CvAX4jwBV9VJV/c/pVjURB4BvGl6UaY4tLr6011XV79P9FP2o0euNfwR497gfd1ZCfzLX4r1JJVmku5bBZ6ZbyUR8GPiXwMvTLmRC3gBcAn5+uEvr55J887SL2k1V9V+BfwNcAL4EfKWqfnu6VU3MX6qqL0G3YQf8xXE/wKyEfq9r8c6iJK8DfpXuAjX/a9r17KYkPwK8UFWnp13LBB0A/gbw76vqLuD/sAv/5b+ZDPdjHwMOA98BfHOSD0y3qtkxK6Hf61q8sybJX6AL/NWq+rVp1zMBbwbuSXKObhfe303yn6db0q7bADaq6pX/xT1O9yEwy94G/GlVXaqq/wv8GvC3plzTpPz3JH8ZYDh9YdwPMCuh3+c6vjMlSej28z5bVf
922vVMQlX9ZFUdrKpFuuf4k1U101uAVfXfgOeTfM+w6a3AM1MsaRIuAD+YZG74On8rM37wesTo9cbvBX593A9wYNx3OA1VdSXJ/cAT/Pl1fJ+eclm77c3A3wf+KMnnhm3/qqo+NsWatDv+MbA63KA5C/yDKdezq6rqM0keBz5Ld5baU8zgt3OT/BLwd4DbkmwADwEfAj6a5D66D7/3jv1x/UauJLVjVnbvSJJ6MPQlqSGGviQ1xNCXpIYY+pLUEENfkhpi6EtSQwx9SWrI/wMPxnMEu8DVOAAAAABJRU5ErkJggg==\n",

"text/plain": [

""

]

},

"metadata": {

"needs_background": "light"

},

"output_type": "display_data"

}

],

"source": [

"yvals = range(10+1)\n",

"plt.plot(yvals, [pbinom(y, 10, 0.5) for y in yvals], 'ro');"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"What about the likelihood function? \n",

"\n",

"The likelihood function is the exact same form as the sampling distribution, except that we are now interested in varying the parameter for a given dataset."

]

},
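
{

"cell_type": "markdown",

"metadata": {},

"source": [

"As a small worked sketch, take hypothetical data of \\\\(y=3\\\\) events in \\\\(n=10\\\\) trials (values assumed here purely for illustration). Viewed as a function of \\\\(\\theta\\\\), the binomial likelihood and the derivative of its logarithm are:\n",

"\n",

"\\\\[L(\\theta | y=3) = {10 \\choose 3} \\theta^{3} (1-\\theta)^{7}, \\qquad \\frac{d}{d\\theta} \\log L(\\theta | y=3) = \\frac{3}{\\theta} - \\frac{7}{1-\\theta}\\\\]\n",

"\n",

"Setting the derivative to zero gives \\\\(\\theta = 3/10 = 0.3\\\\), so for these assumed data the likelihood peaks at the observed proportion \\\\(y/n\\\\)."

]

},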

{

"cell_type": "code",

"execution_count": 12,

"metadata": {},

"outputs": [

{

"data": {

"image/png": "iVBORw0KGgoAAAANSUhEUgAAAX0AAAD8CAYAAACb4nSYAAAABHNCSVQICAgIfAhkiAAAAAlwSFlzAAALEgAACxIB0t1+/AAAADl0RVh0U29mdHdhcmUAbWF0cGxvdGxpYiB2ZXJzaW9uIDMuMC4yLCBodHRwOi8vbWF0cGxvdGxpYi5vcmcvOIA7rQAAIABJREFUeJzt3Xl8lOW99/HPb2ayEJIQIIFAFhJ2wo4hLG4oLriBu4BrS8tRa9fz9Kk9PdXWnu7H03Nal4rVU21VtFoV2dQqi1VZwhYICCQBsgIhgQDZZ+Z6/sjgE2MgEzIz9yy/9+uVF5OZe2a+N0m+uXPdyyXGGJRSSkUGm9UBlFJKBY6WvlJKRRAtfaWUiiBa+kopFUG09JVSKoJo6SulVATR0ldKqQiipa+UUhFES18ppSKIw+oAHSUnJ5usrCyrYyilVEjZsmXLMWNMSlfLBV3pZ2VlkZ+fb3UMpZQKKSJyyJvldHhHKaUiiJa+UkpFEC19pZSKIFr6SikVQbT0lVIqgnhV+iIyR0T2ikiRiDzcyePfE5HdIlIgIh+IyJB2j7lEZLvnY5kvwyullOqeLg/ZFBE78CRwJVAObBaRZcaY3e0W2wbkGmMaROQB4DfAHZ7HGo0xk3ycWyml1Hnw5jj9PKDIGFMCICJLgXnA56VvjFnTbvkNwF2+DKki29GTTVTVNXG62cmpplZONTk53ezkdJOTsWmJXDZqACJidUylQoI3pZ8GlLX7vByYdo7lFwGr2n0eKyL5gBP4lTHmrW6nVBHpVFMrj7+3jxc/PYj7HFM5jxmUyDcvH86csanYbFr+Sp2LN6Xf2U9Rpz+CInIXkAtc2u7uTGNMpYgMBT4UkZ3GmOIOz1sMLAbIzMz0KrgKX8YYVuys4rF3dlN9upkFeZnMHj2A+BgHCbFRJMQ6iI9x0CvazvKCKp5aU8SDL21l+IB4HrpsONdPGITDrscoKNUZMeYcm1CAiMwAfmKMudrz+Q8BjDG/7LDcFcAfgEuNMUfP8lp/BpYbY14/2/vl5uYavQxD5Dp4rJ5HlhWyfl81Ywcn8vObxjMpI+mcz3G5235JPPlhEXuPnGJI/zi+f/Uorp8wOECplbKeiGwxxuR2tZw3W/qbgREikg1UAPOBhR3ebDLwDDCnfeGLSF+gwRjTLCLJwIW07eRV6gucLjdPrS3miTVFRNttPHpDDndPH+LVFrvdJsydOJjrxw/i/T1H+P0H+3no5W3U1rdwz4ws/4dXKoR0WfrGGKeIPAS8C9iB540xhSLyGJBvjFkG/BaIB/7m2aFWaoyZC4wBnhERN22Hh/6qw1E/SmGM4ZFlhby8sZTrJwzix9fnMDAxttuvY7MJV49N5bJRA/jGy1t55O1CXG7DVy7M9kNqpUJTl8M7gabDO5Hn2fUl/HzlHh6YNYwfzBntk9dscbr55itbebfwCD++PodFF2nxq/Dm7fCO7u1Sllq9q4pfrNrDdeMH8f2rRvnsdaMdNp5YOIVrxqXys+W7+dNHJT57baVCmZa+ssyOshN859XtTMpI4vHbJ/r8cMsou43fL5jMdeMH8R8r9rBkfXHXT1IqzAXdJCoqMpQfb2DRC/mkJMTw7D25xEbZ/fI+UXYb/zN/Ejab8IuVn+FywwOzhvnlvZQKBVr6KuBONrXy1T9vptnpYuniaSTHx/j1/Rx2G7+7fSIC/Hr1Z4wcGM/sMQP9+p5KBSsd3lEB1epy842XtlJSXc8zd13A8AEJAXlfh93Gb2+bwOjUBH7wxk5qTjcH5H2VCjZa+iqg/vsf+/ho/zF+cdN4Zg5PDuh7xzjs/Pf8SZxsbOWHf99JsB25plQgaOmrgCmpPs2S9SXcPDmN26dmWJJhdGoi3796FO/tPsLrW8otyaCUlbT0VUAYY3hs+W5iHHYevtY3x+Kfr0UXZTMtux8/fWc3ZbUNlmZRKtC09FVAfLDnKGv3VvOdK0YwIK
H7Z9v6ks0mPH77RAD+9bUduM51CU+lwoyWvvK7plYXP11eyIgB8dw7M8vqOACk943jp3PHsulgrZ64pSKKlr7yuyXrSyirbeQnc8cSFUSXPL55Shpzxqby+Hv72FN10uo4SgVE8PwEqrBUVtvAk2uKuG78IC4M8NE6XRERfnHzeBJ7RfHdV7fT7HRZHUkpv9PSV3718xV7sInwb9eNsTpKp/r1juY3t47ns8OneP6fB62Oo5Tfaekrv/lofzWrCw/z0OXDSUvqZXWcs7p89EBmjx7AU2uLOF7fYnUcpfxKS1/5RYvTzU+WFZLVP46vXRz8lzX+wTWjqW928vsP91sdRSm/0tJXfvHCJwcprq7n0RvGEuPwz8XUfGnkwARuz83grxsOcaim3uo4SvmNlr7yucYWF0+vK+aSkSlcNnqA1XG89t0rR+Kw2fjNu3utjqKU32jpK5/725YyautbeOiy4VZH6ZaBibF8/eJsVhRUsa30uNVxlPILLX3lU06XmyXrS5iSmcTUrL5Wx+m2xZcOIzk+ml+u/EwvyKbCkpa+8qkVO6soP97I/ZcOQ8S3M2EFQnyMg29fMZJNB2v5x56jVsdRyue09JXPGGP447oShg+I54oQnqRk/tQMhqb05ler9uB0ua2Oo5RPaekrn1m//xh7qk6y+JKhPp/vNpCi7DZ+MGc0xdX1vJpfZnUcpXxKS1/5zB/XFpOaGMuNk9KsjtJjV+UMZGpWX373/n5ONzutjqOUz2jpK5/YUXaCT0tqWHRRNtGO0P+2EhF+eO0Yjp1u5n//ecDqOEr5TOj/dKqg8Md1xSTGOlgwLdPqKD4zJbMvl48ewPMfH6ChRbf2VXjQ0lc9VlJ9mtWFh7l7xhDiYxxWx/GpB2cN43hDK69u1rF9FR609FWPPftRCVF2G/fNDP5r7HRXblY/pmb15dn1JbQ49UgeFfq09FWPHD3ZxBtbKrjtgnRSEmKsjuMXD84aTmVdE8t2VFodRake09JXPfL8xwdxut0svmSo1VH8ZtaoFEanJvDHdcW4dT5dFeK09NV5a2xx8dLGQ1wzbhBD+ve2Oo7fiAgPzBpG0dHTvL/niNVxlOoRr0pfROaIyF4RKRKRhzt5/HsisltECkTkAxEZ0u6xe0Vkv+fjXl+GV9ZaubOKU01O7p4xpOuFQ9x14weR2S+Op9YW6zV5VEjrsvRFxA48CVwD5AALRCSnw2LbgFxjzATgdeA3nuf2Ax4FpgF5wKMiEnpX4VKdWrq5lKHJvZmW3c/qKH7nsNtYfMnQz89HUCpUebOlnwcUGWNKjDEtwFJgXvsFjDFrjDENnk83AOme21cD7xtjao0xx4H3gTm+ia6sVHT0FJsPHueOqRkheWG183HrBekkx8fw9Npiq6Modd68Kf00oP1ByuWe+85mEbDqPJ+rQsTSTWVE2YVbLkjveuEwERtlZ9FF2Xy0/xg7y+usjqPUefGm9DvbjOt0UFNE7gJygd9257kislhE8kUkv7q62otIykrNThdvbC3nypyBJMeH52GaZ3PX9EwSYh08va7I6ihKnRdvSr8cyGj3eTrwpQOWReQK4EfAXGNMc3eea4xZYozJNcbkpqSkeJtdWeS9wiMcb2hl/tTwueSCtxJio7h7+hBW7TpMcfVpq+Mo1W3elP5mYISIZItINDAfWNZ+ARGZDDxDW+G3n3niXeAqEenr2YF7lec+FcKWbi4lLakXFw1PtjqKJb5yYTbRdhtL1pVYHUWpbuuy9I0xTuAh2sp6D/CaMaZQRB4TkbmexX4LxAN/E5HtIrLM89xa4Ge0/eLYDDzmuU+FqEM19XxcVMMdUzNC+pr5PZGSEMMtF6Tz1vYKautbrI6jVLd4dXUsY8xKYGWH+x5pd/uKczz3eeD58w2ogsurm8uwCdyWGzk7cDtz74wsXt5YytLNpTw4K7QmgFeRTc/IVV5rdbn525ZyLhs1gEF9elkdx1KjUhOYOaw/f/30kE6pqEKKlr
7y2oefHaX6VDPz8yJvB25n7p2ZRWVdE//QSzOoEKKlr7y2dFMpAxNjuGyUHmEFcMWYgaQl9eJ/Pz5odRSlvKalr7xSeaKRdfuque2CDBx2/bYBsNuEe2YMYeOBWvZUnbQ6jlJe0Z9e5ZXX8stwG7hjakbXC0eQO6ZmEBtl48VPD1odRSmvaOmrLrnchr/ll3PxiGQy+sVZHSeoJMVFc9PkNN7cVsGJBj18UwU/LX3VpQ0lNVScaOT2XN3K78y9M7NoanXrPLoqJGjpqy69ta2C+BgHV+YMtDpKUBqdmsj0of148dNDuHRmLRXktPTVOTW1uli96zBXj00lNspudZygdd/MLCpONOrhmyroaemrc1rz2VFONTu5cfJgq6MEtTOHb77wyUGroyh1Tlr66pze2l5BcnwMM4b2tzpKUHPYbdw1fQifFNew9/Apq+ModVZa+uqs6hpbWfNZNTdMHKTH5nth/tQMYhw2Xvj0oNVRlDor/UlWZ7V6VxUtLjfzJulkZ97o2zuaeZMG8+bWCk42tVodR6lOaemrs3prWyVZ/eOYmN7H6igh4+7pWTS2unh7W4XVUZTqlJa+6tThuiY2HKhh3qS0iJn43BfGp/dhfFofXtpYijF6+KYKPlr6qlPv7KjEGJg3SY/a6a47p2Xy2eFTbC09bnUUpb5ES1916q3tFUxI78PQlHiro4ScGyYOJj7GwUsbS62OotSXaOmrLyk6eorCypPMnahb+eejd4yDmyansbygSq/Ho4KOlr76kre3V2ITtPR7YOG0TFqcbt7Yqjt0VXDR0ldfYIzh7e2VzByWzIDEWKvjhKwxgxKZkpnESxsP6Q5dFVS09NUXbCs7QWltA3N1B26PLZw2hJLqejYeqLU6ilKf09JXX/D2tgqiHTbmjEu1OkrIu37CIBJjdYeuCi5a+upzTpeb5QVVzB49gMTYKKvjhLzYKDu3XJDO6l1VHDvdbHUcpQAtfdXOx8U11NS36GUXfOjOaZm0ugyvbym3OopSgJa+amf5jkriYxzMGpVidZSwMXxAAnnZ/XhlUylunWBFBQEtfQVAq8vNe7uPcGXOQJ0sxcfunJbJoZoGPi4+ZnUUpbT0VZuPi45R19jKteMHWR0l7MwZl0q/3tG8rDt0VRDQ0lcArCioIj7GwcUjkq2OEnZiHHZuuyCd93Yf4ejJJqvjqAinpa90aCcA5udl4nIb/qY7dJXFtPSVDu0EQHZyb2YM7c/SzbpDV1lLS1+xoqCKBB3a8bv5eRmU1TbqDl1lKa9KX0TmiMheESkSkYc7efwSEdkqIk4RubXDYy4R2e75WOar4Mo3zgztXKFDO3539dhU+sZFsXRTmdVRVATrsvRFxA48CVwD5AALRCSnw2KlwH3Ay528RKMxZpLnY24P8yof06GdwImNsnPLlHTe231Yz9BVlvFmSz8PKDLGlBhjWoClwLz2CxhjDhpjCgC3HzIqP9KhncCan5dBq8vwhu7QVRbxpvTTgPZ/j5Z77vNWrIjki8gGEbmxW+mUX7U4dWgn0IYPSCAvqx9LN5fpJZeVJbwp/c5mxe7Od2umMSYXWAj8t4gM+9IbiCz2/GLIr66u7sZLq574uLhtaOc6HdoJqPl5GRw4Vs+GEr3ksgo8b0q/HMho93k6UOntGxhjKj3/lgBrgcmdLLPEGJNrjMlNSdHrvgTKyjNDOyN1aCeQrh3fdsnlVzbpGboq8Lwp/c3ACBHJFpFoYD7g1VE4ItJXRGI8t5OBC4Hd5xtW+c6ZoZ0rcwYS49ChnUCKjbJz85R0Vu86zPF6nUNXBVaXpW+McQIPAe8Ce4DXjDGFIvKYiMwFEJGpIlIO3AY8IyKFnqePAfJFZAewBviVMUZLPwicGdrRo3asMT8vgxaXmze26g5dFVgObxYyxqwEVna475F2tzfTNuzT8XmfAON7mFH5gQ7tWGt0aiKTM5N4ZVMpiy7KRqSzXWdK+Z6ekRuBdGgnOCzIy6S4up78Q8
etjqIiiJZ+BNKhneBw/YRBJMQ4eEUvuawCSEs/Aq3aqUM7wSAu2sG8yYNZsbOKuoZWq+OoCKGlH2HaX2tHh3astyAvk2anm79v0x26KjC09CPMhpIaTjS0cs24VKujKGDs4D5MTO/DK5tK9QxdFRBa+hFm5c7D9I62c8lIPQkuWCyclsm+I6fZojt0VQBo6UcQp8vNe4WHuXyMXmsnmNwwcTDxMQ6dQ1cFhJZ+BNl0sJaa+hau1aGdoBIX7eDGyYNZvrOKEw16hq7yLy39CLJq52F6RdmZNWqA1VFUBwvzhtDidPP3rRVWR1FhTks/QrjchtWFh7lsdAq9onVoJ9jkDE5kUkYSL+sOXeVnWvoRYsuh41SfauaacXpCVrBaOC2ToqOn2XxQd+gq/9HSjxArd1YR7bBx2Wgd2glWZ87QfXnjIaujqDCmpR8B3G7D6l2HuXRkCvExXl1jT1kgLtrBTVPSWKmXXFZ+pKUfAbaVneDwySauHa9H7QS7hdMyaXHqJZeV/2jpR4BVO6uIsguzxwy0OorqwujURKZk6g5d5T9a+mHOGMOqXYe5eEQKibFRVsdRXlg4bQgl1fVsPKBz6Crf09IPcwXldVScaNRr7YSQ68YPIiFWz9BV/qGlH+ZW7qrCYROuzNGhnVDRK9rOLZ45dGt1h67yMS39MGaMYdXOw8wcnkxSXLTVcVQ3LJyWSYvLzetbyqyOosKMln4YK6w8SWltg15rJwSNHJjA1Ky+vLSxFLdbd+gq39HSD2OrdlVhtwlXjdXSD0V3z8jiUE0D6/ZXWx1FhREt/TB1ZmhnWnY/+vXWoZ1QNGdsKsnxMfzlUz1DV/mOln6Y2lN1ipJj9Vw/YbDVUdR5inbYWJiXwZq9RymrbbA6jgoTWvphanlBJXabcPVYPWonlC2YlolNhL9u0K195Rta+mHIGMOKnVXMHNaf/vExVsdRPTCoTy+uHDOQV/PLaGp1WR1HhQEt/TBUWHmSQzUNXDdeL6McDu6ZMYQTDa28s6PS6igqDGjph6HlBVWeoR09aicczBjWn+ED4vmLDvEoH9DSDzNtQzuVXDg8mb561E5YEBHunj6EgvI6tpedsDqOCnFa+mFmZ0UdZbWNXK9DO2Hl5ilp9I628+KnB62OokKcln6YWVHQdq2dq/SonbCSEBvFTVPSWF5QpdfjUT3iVemLyBwR2SsiRSLycCePXyIiW0XEKSK3dnjsXhHZ7/m411fB1ZcZY1heUMVFI/RaO+HonhlZtDjdvJav1+NR56/L0hcRO/AkcA2QAywQkZwOi5UC9wEvd3huP+BRYBqQBzwqIn17Hlt1ZofnMsp61E54GjkwgWnZ/fjrhkO49Ho86jx5s6WfBxQZY0qMMS3AUmBe+wWMMQeNMQWAu8NzrwbeN8bUGmOOA+8Dc3yQW3ViRUElUXbhqhw9aidc3TMji/Ljjazde9TqKCpEeVP6aUD7vyfLPfd5oyfPVd1gjGFFQRUXj0ihT5zOkBWurho7kAEJMbyg1+NR58mb0pdO7vP2b0uvnisii0UkX0Tyq6v1ioLnY1vZCSrrmnRoJ8xF2W3cPX0I6/dVs+/IKavjqBDkTemXAxntPk8HvD010KvnGmOWGGNyjTG5KSkpXr60am9FQRXRdhtX6lE7Ye/O6UOIjbLx3EcHrI6iQpA3pb8ZGCEi2SISDcwHlnn5+u8CV4lIX88O3Ks89ykfcrsNK3dWcclInfw8EvTrHc0tU9J5c3sF1aearY6jQkyXpW+McQIP0VbWe4DXjDGFIvKYiMwFEJGpIlIO3AY8IyKFnufWAj+j7RfHZuAxz33Kh7aVHaeqronrJ+jQTqT46kXZtDjdevVN1W0ObxYyxqwEVna475F2tzfTNnTT2XOfB57vQUbVheUFVUQ7bMweM8DqKCpAhqXEM3v0AP6y4RAPzBpGbJTd6kgqROgZuSHO5RnamTUyhQQd2okoX7t4KLX1Lby5rcLqKCqEaOmHuI0lNR
w52cz1E3WGrEgzfWg/xg5O5Ll/HtDJ05XXtPRD3JvbKoiPcXDlGD1qJ9KICF+/eChFR0/r5OnKa1r6Iayp1cWqXYeZMy6VXtE6phuJrh0/iNTEWP70UYnVUVSI0NIPYf/Yc4TTzU5unqwnOUeqaIeNe2dm8XFRDbsrT1odR4UALf0Q9ta2ClITY5k2tL/VUZSFFuZlEhdt57l/6slaqmta+iGqtr6FtXurmTdpMHZbZ1e7UJGiT1wUt+dmsGxHBUdONlkdRwU5Lf0QtaKgEqfbcKMO7Sjgqxdm43QbnVlLdUlLP0S9ua2C0akJjBmUaHUUFQQy+8dxdU4qf91Qyulmp9VxVBDT0g9Bh2rq2Vp6Qrfy1RfcP2sYdY2temkGdU5a+iHorW2ViMBcPSFLtTMpI4lLRqbw7PoSGlp0a191Tks/xBhjeGt7BdOz+zM4qZfVcVSQ+fbs4dTUt/DyxlKro6ggpaUfYraXneDAsXpu0qEd1YkLhvRj5rD+PLO+hKZWl9VxVBDS0g8xb22rINphY854nQdXde6bl4+g+lQzr24u63phFXG09ENIq8vNOwVVXDlmoE6Wos5q+tB+5GX14+m1xTQ7dWtffZGWfgj5aH81tfUtetSOOicR4Zuzh3P4ZBOvbym3Oo4KMlr6IeTNbZX0jYvi0pE6j7A6t4uGJzM5M4mn1hTT6nJbHUcFES39EHGqqZX3dx/m+gmDiXbol02dm4jwrctHUHGikTe36iQr6v/T9ggRy3ZU0tTq5qYpOrSjvDNrVArj0/rwxJoinLq1rzy09EOAMYaXN5YyOjWByRlJVsdRIUJE+OblwymtbWDZjkqr46ggoaUfAgrK6yisPMmd0zIR0StqKu9dmTOQ0akJPPFhES6dUlGhpR8SXtp4iLhoux61o7pNRPjW7BGUHKvnja16JI/S0g96J5taeWdHFXMnDiZBj81X5+GacalMzEji8ff20tiix+1HOi39IPfWtgoaW10snJZpdRQVokSEH107hiMnm3nunzqXbqTT0g9iZ3bgjktLZEK67sBV5y8vux9X5Qzk6bXFVJ9qtjqOspCWfhDbWnqczw6fYmHeEKujqDDw8DWjaXa6+Z8P9lkdRVlISz+IvbSxlPgYB3Mn6XXzVc8NTYln4bRMXtlURtHR01bHURbR0g9SdQ2trCioYt6kwcTHOKyOo8LEt2ePoFeUnV+t+szqKMoiWvpB6o2t5TQ73boDV/lU//gYHpg1jH/sOcKGkhqr4ygLaOkHIWMML208xMSMJMYO7mN1HBVmFl2UzaA+sfxi5R7cesJWxNHSD0KbDtRSXF3PnbqVr/wgNsrO/7lqFAXldbxToJdniDRelb6IzBGRvSJSJCIPd/J4jIi86nl8o4hkee7PEpFGEdnu+fijb+OHp5c3lZIQ6+CGCboDV/nHTZPTyBmUyG9W79VpFSNMl6UvInbgSeAaIAdYICI5HRZbBBw3xgwHfgf8ut1jxcaYSZ6P+32UO2zV1rewaudhbp6cRq9ou9VxVJiy2YQfXTeGihONPLteT9iKJN5s6ecBRcaYEmNMC7AUmNdhmXnAC57brwOzRa8Mdl5e2VRKi8vNwml6bL7yrwuHJ3Pd+EH8YU0RxdV6CGek8Kb004D2MyyXe+7rdBljjBOoA/p7HssWkW0isk5ELu7sDURksYjki0h+dXV1t1YgnDS2uHj+nwe4dGQKo1ITrI6jIsCjc3OIddj44d936k7dCOFN6Xe2xd7xu+Nsy1QBmcaYycD3gJdFJPFLCxqzxBiTa4zJTUmJ3KkAX91cSk19C9+4bLjVUVSEGJAQy79fl8OmA7Us3VzW9RNUyPOm9MuBjHafpwMdd/l/voyIOIA+QK0xptkYUwNgjNkCFAMjexo6HLU43SxZX8LUrL7kZfezOo6KILflpjNjaH9+uWoPR042WR1H+Zk3pb8ZGCEi2SISDcwHlnVYZhlwr+f2rcCHxhgjIimeHcGIyFBgBKB7jT
rx9vYKKuuaeFC38lWAiQi/uHk8LU43j75daHUc5Wddlr5njP4h4F1gD/CaMaZQRB4TkbmexZ4D+otIEW3DOGcO67wEKBCRHbTt4L3fGFPr65UIdS634el1xeQMSmTWyMgd3lLWyU7uzXeuGMnqwsOs3lVldRzlR15d1MUYsxJY2eG+R9rdbgJu6+R5bwBv9DBj2Hu38DAl1fU8uXCKToeoLPO1i7NZtqOSR94uZMawZPr00kl7wpGekWsxYwxPriliaHJv5oxLtTqOimBRdhu/vmU8x0438+vVekG2cKWlb7F1+6oprDzJ/bOGYbfpVr6y1oT0JBZdlM3LG0vZqBdkC0ta+hZ7ak0xg/vEcuMknfRcBYfvXjmSzH5xfPfV7Ryvb7E6jvIxLX0LbTpQy6aDtSy+ZCjRDv1SqOAQF+3giYWTOXa6he++tl1P2goz2jQWemptEf17R3PHVL2apgouE9KT+PH1Y1i7t5qn1xVbHUf5kJa+RXZV1LF2bzVfvShbL6ymgtJd04cwd+JgHn9vL58UH7M6jvIRLX2L/Nf7+0iIcXD3DL2wmgpOIsIvbx5PdnJvvvXKdo7q2bphQUvfAv/YfYQPPzvKt2aPIDFWj4VWwat3jIOn77qA+mYnD72yDafLbXUk1UNa+gHW1OriJ+8UMmJAPPddmGV1HKW6NHJgAj+/aRybDtTy+Pv7rI6jekhLP8CeXltM+fFGfjpvLFF2/e9XoeHmKeksyMvg6bXFfLDniNVxVA9o6wTQoZp6nl5XzA0TBzNzWLLVcZTqlkdvGMvYwYl8e+l2CspPWB1HnSct/QD66Tu7ibIJP7p2jNVRlOq22Cg7z907laS4KO59fhP7j5yyOpI6D1r6AXJm5+13rhhJap9Yq+ModV5S+8Ty0tem4bDbuOu5jZTVNlgdSXWTln4A6M5bFU6G9O/NXxdNo6nVzZ1/2qiHcoYYLf0A0J23KtyMSk3gz1+ZyrHTzdz13Ea9Rk8I0QbyM915q8LV5My+/OmeXA7WNHDfnzdzutlpdSTlBS19P3K5Df/+1i7deavC1szhyTyxYDK7Kur42gubOdXUanUk1QUtfT/6z/f28tH+Y/zw2jG681aFravGpvJft08k/+BxbnlBzOQlAAAKDklEQVT6E925G+S09P3k7e0VPL22mAV5mdw5Ta+iqcLbvElpvPDVPA7XNTHvyY/JP6hTYQcrLX0/2Flex/99vYC8rH78dO5YnfdWRYQLhyfz5jcupE+vKBY+u5G/by23OpLqhJa+jx091cTXX8wnOT6Gp+6aopOjqIgyLCWeNx+cyQVD+vK913bwm9Wf6SQsQUYbyYeanS7u/8sW6hpbWXLPBSTHx1gdSamAS4qL5sVFeSzIy+CptcU8+NJWTuoO3qChpe8jxhh+/NYutpae4D9vm8jYwX2sjqSUZaLsNn5x03h+fH0O7+0+zBWPr2P1riqrYym09H3mz58c5LX8cr51+XCumzDI6jhKWU5EWHRRNm9940KS42O4/69bWfxiPofr9AxeK2np95DbbfjDB/t5bPlursoZyHeuGGl1JKWCyoT0JN5+6EJ+eM1o1u+v5or/WsdfPj2oY/0W0dLvgfpmJw++tJXH39/HvImD+f2CydhseqSOUh1F2W38y6XDePc7lzApI4kfv13Ibc98yrbS41ZHizhiTHD9ts3NzTX5+flWx+jSoZp6Fr+4hf1HT/Fv145h0UXZemimUl4wxvDmtgr+Y8UeautbyMvux/2XDmXWyAG60dQDIrLFGJPb5XJa+t23fl8133xlGyLwxIIpXDRCr6mjVHfVNztZurmM5z4qobKuiZED41l8yTDmThyshzqfBy19P3C5DX/6qIRfr/6MkQMTWHJ3Lpn946yOpVRIa3W5WV5QyTPrSvjs8ClSE2OZn5fBnHGpjBqYoH9Be0lL34caW1y8vrWc5z4q4WBNA9eOT+W3t06kd4zD6mhKhQ1jDOv2VbNkfQmfltRgDG
T1j+PqcalcPTaVSelJOvxzDj4tfRGZA/wPYAf+ZIz5VYfHY4AXgQuAGuAOY8xBz2M/BBYBLuBbxph3z/VewVT6NaebefHTQ/xlwyFq61uYmJHEv1wylGvGperWh1J+dPRUE+/vPsK7hUf4pOgYTrdhYGIMs0YOYFJmEhPS+zByYILOT9GOz0pfROzAPuBKoBzYDCwwxuxut8yDwARjzP0iMh+4yRhzh4jkAK8AecBg4B/ASGOM62zvZ3XpHzvdzM6KOt7ffYQ3tpTT7HRzxZiB/MulQ8kd0lfLXqkAq2tsZc1nR1m96zCfltRQ19h2dm+Mw8bYwYlMSE9iXFofhvSPI71vLwYkxGKPwL8IvC19b8Yn8oAiY0yJ54WXAvOA3e2WmQf8xHP7deAJaWvHecBSY0wzcEBEijyv96m3K+JrLrehvsVJfbOT001Oyo43sLP8JLsq69hVUUeV58SRaIeNW6ak87WLsxmWEm9VXKUiXp9eUdw4OY0bJ6dhjOFQTQM7yk9QUF5HQfkJXt1cxp8/Ofj58lF2YXBSL9L79iItqRf9esfQp1cUSXFRJPWKok+vKPrERREX7SA2ykaMw/75v5Hwy8Kb0k8Dytp9Xg5MO9syxhiniNQB/T33b+jw3LTzTnsOJxpauOXpTzAABkxbFs+/bdfFOd3kpL7ly39kiEB2cm/ysvsxPq0P4zwf8Tpmr1RQERGyknuTldybeZPaqsTpcnOotoHy442UHz/zb9vttXurOdHQSovL7dXrR9mFKLsNu01w2AS758NhsyECNhFEQDxZBMDz+dnydnr/WZYfPSiRPyyY7FXW8+VNq3WWr+OY0NmW8ea5iMhiYDFAZub5XXvebhNGpyZ+/gU48wU58wWKcdiJj3UQH+P58NwemBhLzuBELXilQpTDbmNYSvxZ/yI3xtDY6qKusZUTDW0fdY0tNLa6aGp109zqosnpprnVTZPTRavTjcsYXG6D021wt/vXAG5jMB02LDt/47PdffYh9Yy+vbqz6ufFm6YrBzLafZ4OVJ5lmXIRcQB9gFovn4sxZgmwBNrG9L0N315CbBRP3jnlfJ6qlApjIkJctIO4aAeD+vi/VIOdN7u+NwMjRCRbRKKB+cCyDsssA+713L4V+NC07SFeBswXkRgRyQZGAJt8E10ppVR3dbml7xmjfwh4l7ZDNp83xhSKyGNAvjFmGfAc8BfPjtpa2n4x4FnuNdp2+jqBb5zryB2llFL+pSdnKaVUGPD2kE09s0EppSKIlr5SSkUQLX2llIogWvpKKRVBtPSVUiqCBN3ROyJSDRzqwUskA8d8FCdURNo6R9r6gq5zpOjJOg8xxqR0tVDQlX5PiUi+N4cthZNIW+dIW1/QdY4UgVhnHd5RSqkIoqWvlFIRJBxLf4nVASwQaescaesLus6Rwu/rHHZj+koppc4uHLf0lVJKnUVIlr6IzBGRvSJSJCIPd/J4jIi86nl8o4hkBT6lb3mxzt8Tkd0iUiAiH4jIECty+lJX69xuuVtFxIhIyB/p4c06i8jtnq91oYi8HOiMvubF93amiKwRkW2e7+9rrcjpKyLyvIgcFZFdZ3lcROT3nv+PAhHx7UQhxpiQ+qDt8s7FwFAgGtgB5HRY5kHgj57b84FXrc4dgHW+DIjz3H4gEtbZs1wCsJ62aTlzrc4dgK/zCGAb0Nfz+QCrcwdgnZcAD3hu5wAHrc7dw3W+BJgC7DrL49cCq2ib9G86sNGX7x+KW/qfT9RujGkBzkzU3t484AXP7deB2XK2ySpDQ5frbIxZY4xp8Hy6gbZZykKZN19ngJ8BvwGaAhnOT7xZ568DTxpjjgMYY44GOKOvebPOBkj03O5DJ7PvhRJjzHra5h05m3nAi6bNBiBJRAb56v1DsfQ7m6i942TrX5ioHTgzUXuo8mad21tE25ZCKOtynUVkMpBhjFkeyGB+5M3XeSQwUkQ+FpENIjInYOn8w5t1/glwl4iUAyuBbwYmmmW6+/
PeLaE4G3hPJmoPVV6vj4jcBeQCl/o1kf+dc51FxAb8DrgvUIECwJuvs4O2IZ5ZtP0195GIjDPGnPBzNn/xZp0XAH82xjwuIjNom6VvnDHG7f94lvBrf4Xiln53Jmqnw0TtocqrCeZF5ArgR8BcY0xzgLL5S1frnACMA9aKyEHaxj6XhfjOXG+/t982xrQaYw4Ae2n7JRCqvFnnRcBrAMaYT4FY2q5RE668+nk/X6FY+j2ZqD1UdbnOnqGOZ2gr/FAf54Uu1tkYU2eMSTbGZBljsmjbjzHXGBPKc2168739Fm077RGRZNqGe0oCmtK3vFnnUmA2gIiMoa30qwOaMrCWAfd4juKZDtQZY6p89eIhN7xjejBRe6jycp1/C8QDf/Pssy41xsy1LHQPebnOYcXLdX4XuEpEdgMu4PvGmBrrUveMl+v8r8CzIvJd2oY57gvljTgReYW24blkz36KR4EoAGPMH2nbb3EtUAQ0AF/x6fuH8P+dUkqpbgrF4R2llFLnSUtfKaUiiJa+UkpFEC19pZSKIFr6SikVQbT0lVIqgmjpK6VUBNHSV0qpCPL/AKHVemphw/VLAAAAAElFTkSuQmCC\n",

"text/plain": [

""

]

},

"metadata": {

"needs_background": "light"

},

"output_type": "display_data"

}

],

"source": [

"pvals = np.linspace(0, 1)\n",

"y = 4\n",

"plt.plot(pvals, [pbinom(y, 10, p) for p in pvals]);"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"So, though we are dealing with the same equation, these are entirely different functions; the distribution is discrete, while the likelihood is continuous; the distribtion's range is from 0 to 10, while the likelihood's is 0 to 1; the distribution integrates (sums) to one, while the likelhood does not."

]

},
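{

"cell_type": "markdown",

"metadata": {},

"source": [

"The last point can be checked by hand. The following is a short worked sketch, assuming the binomial form with \\\\(n\\\\) trials used in the plots above (there, \\\\(n = 10\\\\)). Summing over the data \\\\(y\\\\) for a fixed \\\\(p\\\\) gives one, whereas integrating over \\\\(p\\\\) for a fixed \\\\(y\\\\) does not:\n",

"\n",

"$$\\sum_{y=0}^{n} \\binom{n}{y} p^y (1-p)^{n-y} = 1$$\n",

"\n",

"$$\\int_0^1 \\binom{n}{y} p^y (1-p)^{n-y} \\, dp = \\binom{n}{y} \\frac{y! \\, (n-y)!}{(n+1)!} = \\frac{1}{n+1}$$\n",

"\n",

"With \\\\(n = 10\\\\), the area under the likelihood curve is \\\\(1/11\\\\), whatever value of \\\\(y\\\\) was observed."

]

},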

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Posterior\n",

"\n",

"The mathematical form \\\\(p(\\theta | y)\\\\) that we associated with the Bayesian approach is referred to as a **posterior distribution**.\n",

"\n",

"> posterior /pos·ter·i·or/ (pos-tēr´e-er) later in time; subsequent.\n",

"\n",

"Why posterior? Because it tells us what we know about the unknown \\\\(\\theta\\\\) *after* having observed \\\\(y\\\\)."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Why be Bayesian?\n",

"\n",

"At this point, it is worth addressing the question of why one might consider an alternative statistical paradigm to the classical/frequentist statistical approach. After all, it is not always easy to specify a full probabilistic model, nor to obtain output from the model once it is specified. So, why bother?\n",

"\n",

"> ... the Bayesian approach is attractive because it is useful. Its usefulness derives in large measure from its simplicity. Its simplicity allows the investigation of far more complex models than can be handled by the tools in the classical toolbox. \n",

"*-- Link and Barker 2010*\n",

"\n",

"We already noted that there is just one estimator in Bayesian inference, which lends to its ***simplicity***. Moreover, Bayes affords a conceptually simple way of coping with multiple parameters; the use of probabilistic models allows very complex models to be assembled in a modular fashion, by factoring a large joint model into the product of several conditional probabilities.\n",

"\n",

"Bayesian statistics is also attractive for its ***coherence***. All unknown quantities for a particular problem are treated as random variables, to be estimated in the same way. Existing knowledge is given precise mathematical expression, allowing it to be integrated with information from the study dataset, and there is formal mechanism for incorporating new information into an existing analysis.\n",

"\n",

"Finally, Bayesian statistics confers an advantage in the ***interpretability*** of analytic outputs. Because models are expressed probabilistically, results can be interpreted probabilistically. Probabilities are easy for users (particularly non-technical users) to understand and apply."

]

},
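{

"cell_type": "markdown",

"metadata": {},

"source": [

"As a sketch of what modular assembly looks like (the hyperparameter symbol \\\\(\\phi\\\\) is introduced here only for illustration), consider a model with data \\\\(y\\\\), parameters \\\\(\\theta\\\\) and hyperparameters \\\\(\\phi\\\\), in which \\\\(y\\\\) depends on \\\\(\\phi\\\\) only through \\\\(\\theta\\\\). The joint model factors into a chain of simple conditional pieces:\n",

"\n",

"$$p(y, \\theta, \\phi) = p(y | \\theta) \\, p(\\theta | \\phi) \\, p(\\phi)$$\n",

"\n",

"Incorporating new information follows the same logic. Assuming a second dataset \\\\(y_2\\\\) is conditionally independent of the first, \\\\(y_1\\\\), given \\\\(\\theta\\\\), the posterior from the first analysis serves as the prior for the second:\n",

"\n",

"$$p(\\theta | y_1, y_2) \\propto p(y_2 | \\theta) \\, p(\\theta | y_1)$$"

]

},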

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Example: confidence vs. credible intervals\n",

"\n",

"A commonly-used measure of uncertainty for a statistical point estimate in classical statistics is the ***confidence interval***. Most scientists were introduced to the confidence interval during their introductory statistics course(s) in college. Yet, a large number of users mis-interpret the confidence interval.\n",

"\n",

"Here is the mathematical definition of a 95% confidence interval for some unknown scalar quantity that we will here call \\\\(\\theta\\\\):\n",

"\n",

"

\n",

"\n",

"how the endpoints of this interval are calculated varies according to the sampling distribution of \\\\(Y\\\\), but for as an example, the confidence interval for the population mean when \\\\(Y\\\\) is normally distributed is calculated by:\n",

"\n",

"$Pr(\\bar{Y} - 1.96\\frac{\\sigma}{\\sqrt{n}}< \\theta < \\bar{Y} + 1.96\\frac{\\sigma}{\\sqrt{n}}) = 0.95$\n",

"\n",

"It would be tempting to use this definition to conclude that there is a 95% chance \\\\(\\theta\\\\) is between \\\\(a(Y)\\\\) and \\\\(b(Y)\\\\), but that would be a mistake. \n",

"\n",

"Recall that for frequentists, unknown parameters are **fixed**, which means there is no probability associated with them being any value except what they are fixed to. Here, the interval itself, and not \\\\(\\theta\\\\) is the random variable. The actual interval calculated from the data is just one possible realization of a random process, and it must be strictly interpreted only in relation to an infinite sequence of identical trials that might be (but never are) conducted in practice.\n",

"\n",

"A valid interpretation of the above would be:\n",

"\n",

"> If the experiment were repeated an infinite number of times, 95% of the calculated intervals would contain \\\\(\\theta\\\\).\n",

"\n",

"This is what the statistical notion of \"confidence\" entails, and this sets it apart from probability intervals.\n",

"\n",

"Since they regard unknown parameters as random variables, Bayesians can and do use probability intervals to describe what is known about the value of an unknown quantity. These intervals are commonly known as ***credible intervals***.\n",

"\n",

"The definition of a 95% credible interval is:\n",

"\n",

"

\n",

"\n",

"Notice that we condition here on the data \\\\(y\\\\) instead of the unknown \\\\(\\theta\\\\). Thus, the endpoints are fixed and the variable is random. \n",

"\n",

"We are allowed to interpret this interval as:\n",

"\n",

"> There is a 95% chance \\\\(\\theta\\\\) is between \\\\(a\\\\) and \\\\(b\\\\).\n",

"\n",

"Hence, the credible interval is a statement of what we know about the value of \\\\(\\theta\\\\) based on the observed data."

]

},
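{

"cell_type": "markdown",

"metadata": {},

"source": [

"The repeated-sampling interpretation of the confidence interval can be checked by simulation. The sketch below is illustrative only: the true mean, the known standard deviation, the sample size, the number of repetitions and the random seed are all assumed values chosen for the demonstration, not quantities from the text. It repeats the same experiment many times, builds the normal-theory interval each time, and counts how often the interval contains the fixed \\\\(\\theta\\\\).\n",

"\n",

"```python\n",

"import numpy as np\n",

"\n",

"# Assumed values for illustration only\n",

"rng = np.random.default_rng(42)\n",

"theta, sigma, n = 5.0, 2.0, 30  # true mean, known sd, sample size\n",

"n_experiments = 10_000\n",

"\n",

"# Each row is one repetition of the same experiment\n",

"samples = rng.normal(theta, sigma, size=(n_experiments, n))\n",

"ybar = samples.mean(axis=1)\n",

"half_width = 1.96 * sigma / np.sqrt(n)\n",

"\n",

"# Proportion of intervals that contain the fixed, true theta\n",

"covered = (ybar - half_width < theta) & (theta < ybar + half_width)\n",

"coverage = covered.mean()\n",

"print(f'Empirical coverage: {coverage:.3f}')\n",

"```\n",

"\n",

"The printed proportion should land close to 0.95. Note that each individual interval either contains \\\\(\\theta\\\\) or does not; the 95% describes the long-run behaviour of the procedure, not any single interval."

]

},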

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Bayesian Inference, in 3 Easy Steps\n",

"\n",

"We are now ready (and willing!) to apply Bayesian methods to our problem. Gelman et al. (2013) describe the process of conducting Bayesian statistical analysis in 3 steps:\n",

"\n",

"\n",

"\n",

"\n",

"\n",

"### Step 1: Specify a probability model\n",

"\n",

"As was noted above, Bayesian statistics involves using probability models to solve problems. So, the first task is to *completely specify* the model in terms of probability distributions. This includes everything: unknown parameters, data, covariates, missing data, predictions. All must be assigned some probability density.\n",

"\n",

"This step involves making choices.\n",

"\n",

"- what is the form of the sampling distribution of the data?\n",

"- what form best describes our uncertainty in the unknown parameters?\n",

"\n",

"### Step 2: Calculate a posterior distribution\n",

"\n",

"The posterior distribution is formulated as a function of the probability model that was specified in Step 1. Usually, we can write it down but we cannot calculate it analytically. In fact, the difficulty inherent in calculating the posterior distribution for most models of interest is perhaps the major contributing factor for the lack of widespread adoption of Bayesian methods for data analysis.\n",

"\n",

"**But**, once the posterior distribution is calculated, you get a lot for free:\n",

"\n",

"- point estimates\n",

"- credible intervals\n",

"- quantiles\n",

"- predictions\n",

"\n",

"### Step 3: Check your model\n",

"\n",

"Though frequently ignored in practice, it is critical that the model and its outputs be assessed before using the outputs for inference. Models are specified based on assumptions that are largely unverifiable, so the least we can do is examine the output in detail, relative to the specified model and the data that were used to fit the model.\n",

"\n",

"Specifically, we must ask:\n",

"\n",

"- does the model fit data?\n",

"- are the conclusions reasonable?\n",

"- are the outputs sensitive to changes in model structure?\n"

]

},
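{

"cell_type": "markdown",

"metadata": {},

"source": [

"One common way to approach the question of model fit, offered here as a sketch rather than as the only procedure, is a posterior predictive check: simulate replicated data \\\\(\\tilde{y}\\\\) from the fitted model and compare them with the observed \\\\(y\\\\). The replicated data are drawn from the posterior predictive distribution, which averages the sampling distribution over the posterior:\n",

"\n",

"$$p(\\tilde{y} | y) = \\int p(\\tilde{y} | \\theta) \\, p(\\theta | y) \\, d\\theta$$\n",

"\n",

"If the observed data look unusual relative to such replicates, that suggests the model is missing some feature of the data."

]

},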

{

"cell_type": "markdown",

"metadata": {},

"source": [

"---"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## References\n",

"\n",

"Gelman A, Carlin JB, Stern HS, Dunson DB, Vehtari A, Rubin DB. Bayesian Data Analysis, Third Edition. CRC Press; 2013."

]

},

{

"cell_type": "code",

"execution_count": 17,

"metadata": {},

"outputs": [

{

"data": {

"text/html": [

"\n",

"\n"

],

"text/plain": [

""

]

},

"execution_count": 17,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"from IPython.core.display import HTML\n",

"def css_styling():\n",

" styles = open(\"styles/custom.css\", \"r\").read()\n",

" return HTML(styles)\n",

"css_styling()"

]

}

],

"metadata": {

"kernelspec": {

"display_name": "Python 3",

"language": "python",

"name": "python3"

},

"language_info": {

"codemirror_mode": {

"name": "ipython",

"version": 3

},

"file_extension": ".py",

"mimetype": "text/x-python",

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

"version": "3.7.2"

},

"toc": {

"base_numbering": 1,

"nav_menu": {

"height": "12px",

"width": "325px"

},

"number_sections": true,

"sideBar": true,

"skip_h1_title": false,

"title_cell": "Table of Contents",

"title_sidebar": "Contents",

"toc_cell": true,

"toc_position": {},

"toc_section_display": true,

"toc_window_display": true

}

},

"nbformat": 4,

"nbformat_minor": 1

}