{

"cells": [

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# Data Analysis and Machine Learning Applications for Physicists"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"*Material for a* [*University of Illinois*](http://illinois.edu) *course offered by the* [*Physics Department*](https://physics.illinois.edu). *This content is maintained on* [*GitHub*](https://github.com/illinois-mla) *and is distributed under a* [*BSD3 license*](https://opensource.org/licenses/BSD-3-Clause).\n",

"\n",

"[Table of contents](Contents.ipynb)"

]

},

{

"cell_type": "code",

"execution_count": 1,

"metadata": {},

"outputs": [],

"source": [

"%matplotlib inline\n",

"import matplotlib.pyplot as plt\n",

"import seaborn as sns; sns.set()\n",

"import numpy as np\n",

"import pandas as pd\n",

"import warnings\n",

"warnings.filterwarnings('ignore')"

]

},

{

"cell_type": "code",

"execution_count": 2,

"metadata": {},

"outputs": [],

"source": [

"import scipy.stats"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Bayesian Statistics"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Types of Probability"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"We construct a probability space by assigning a numerical probability in the range $[0,1]$ to sets of outcomes (events) in some space.\n",

"\n",

"When outcomes are the result of an uncertain but **repeatable** process, probabilities can always be measured to arbitrary accuracy by simply observing many repetitions of the process and calculating the frequency at which each event occurs. These **frequentist probabilities** have an appealing objective reality to them."

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "hidden",

"solution2_first": true

},

"source": [

"**DISCUSS:** How might you assign a frequentist probability to statements like:\n",

" - The electron spin is 1/2.\n",

" - The Higgs boson mass is between 124 and 126 GeV.\n",

" - The fraction of dark energy in the universe today is between 68% and 70%.\n",

" - The superconductor Hg-1223 has a critical temperature above 130K."

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "hidden"

},

"source": [

"You cannot (if we assume that these are universal constants), since that would require a measurable process whose outcomes had different values for a universal constant.\n",

"\n",

"The inevitable conclusion is that the statements we are most interested in cannot be assigned frequentist probabilities.\n",

"\n",

"However, if we allow probabilities to also measure your subjective \"degree of belief\" in a statement, then we can use the full machinery of probability theory to discuss more interesting statements. These are called **Bayesian probabiilities**.\n",

"\n",

"Roughly speaking, the choice is between:\n",

" - **frequentist statistics:** objective probabilities of uninteresting statements.\n",

" - **Bayesian statistics:** subjective probabilities of interesting statements.\n",

" \n",

"---"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Bayesian Joint Probability"

]

},

{

"cell_type": "markdown",

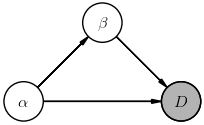

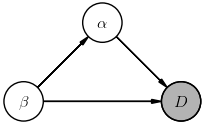

"metadata": {},

"source": [

"Bayesian statistics starts from a joint probability distribution\n",

"\n",

"$$ \\Large\n",

"P(D, \\Theta_M, M)\n",

"$$\n",

"\n",

"over \n",

"\n",

" - data features $D$\n",

" - model parameters $\\Theta_M$\n",

" - hyperparameters $M$\n",

" \n",

"The subscript on $\\Theta_M$ is to remind us that, in general, the set of parameters being used depends on the hyperparameters (e.g., increasing `n_components` adds parameters for the new components). We will sometimes refer to the pair $(\\Theta_M, M)$ as the **model**.\n",

"\n",

"This joint probability implies that model parameters and hyperparameters are random variables, which in turn means that they label possible outcomes in our underlying probability space.\n",

"\n",

"For a concrete example, consider the possible outcomes necessary to discuss the statement \"*the electron spin is 1/2*\", which must be labeled by the following random variables:\n",

" - $D$: the measured electron spin for an outcome, $S_z = 0, \\pm 1/2, \\pm 1, \\pm 3/2, \\ldots$\n",

" - $\\Theta_M$: the total electron spin for an outcome, $S = 0, 1/2, 1, 3/2, \\ldots$\n",

" - $M$: whether the electron is a boson or a fermion for an outcome.\n",

" \n",

"A table of random-variable values for possible outcomes would then look like:\n",

"\n",

"| $M$ | $\\Theta_M$ | $D$ |\n",

"| ---- |----------- | --- |\n",

"| boson | 0 | 0 |\n",

"| fermion | 1/2 | -1/2 |\n",

"| fermion | 1/2 | +1/2 |\n",

"| boson | 1 | -1 |\n",

"| boson | 1 | 0 |\n",

"| boson | 1 | +1 |\n",

"| ... | ... | ... |\n",

"\n",

"Only two of these outcomes occur in our universe, but a Bayesian approach requires us to broaden the sample space from \"*all possible outcomes*\" to \"*all possible outcomes in all possible universes*\"."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Likelihood"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"The **likelihood** ${\\cal L}_M(\\Theta_M, D)$ is a function of model parameters $\\Theta_M$ (given hyperparameters $M$) and data features $D$, and measures the probability (density) of observing the data $\\vec{x}$ given the model. For example, a Gaussian mixture model has the likelihood function:\n",

"\n",

"$$ \\Large\n",

"{\\cal L}_M\\left(\\mathbf{\\Theta}_M, \\vec{x} \\right) = \\sum_{k=1}^{K}\\, \\omega_k G(\\vec{x} ; \\vec{\\mu}_k, C_k) \\; ,\n",

"$$\n",

"\n",

"with parameters\n",

"\n",

"$$ \\Large\n",

"\\begin{aligned}\n",

"\\mathbf{\\Theta}_M = \\big\\{\n",

"&\\omega_1, \\omega_2, \\ldots, \\omega_K, \\\\\n",

"&\\vec{\\mu}_1, \\vec{\\mu}_2, \\ldots, \\vec{\\mu}_K, \\\\\n",

"&C_1, C_2, \\ldots, C_K \\big\\}\n",

"\\end{aligned}\n",

"$$\n",

"\n",

"and hyperparameter $K$. Note that the likelihood must be normalized over the data for any values of the (fixed) parameters and hyperparameters. However, it is not normalized over the parameters or hyperparameters.\n",

"\n",

"The likelihood function plays a central role in both frequentist and Bayesian statistics, but is used and interpreted differently. We will focus on the Bayesian perspective, where $\\Theta_M$ and $M$ are considered random variables and the likelihood function is associated with the conditional probability\n",

"\n",

"$$ \\Large\n",

"{\\cal L}_M\\left(\\Theta_M, D \\right) = P(D\\mid \\Theta_M, M)\n",

"$$\n",

"\n",

"of observing features $D$ given the model $(\\Theta_M, M)$."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### Bayesian Inference"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Once we associated the likelihood with a conditional probability, we can apply the earlier rules (2 & 3) of probability calculus to derive the **generalized Bayes' rule**:\n",

"\n",

"$$ \\Large\n",

"P(\\Theta_M\\mid D, M) = \\frac{P(D\\mid \\Theta_M, M)\\,P(\\Theta_M\\mid M)}{P(D\\mid M)}\n",

"$$\n",

"\n",

"Each term above has a name and measures a different probability:\n",

" 1. **Posterior:** $P(\\Theta_M\\mid D, M)$ is the probability of the parameter values $\\Theta_M$ given the data and the choice of hyperparameters.\n",

" 2. **Likelihood:** $P(D\\mid \\Theta_M, M)$ is the probability of the data given the model.\n",

" 3. **Prior:** $P(\\Theta_M\\mid M)$ is the probability of the model parameters given the hyperparameters and *marginalized over all possible data*.\n",

" 4. **Evidence:** $P(D\\mid M)$ is the probability of the data given the hyperparameters and *marginalized over all possible parameter values given the hyperparameters*.\n",

" \n",

"In typical inference problems, the posterior (1) is what we really care about and the likelihood (2) is what we know how to calculate. The prior (3) is where we must quantify our subjective \"degree of belief\" in different possible universes.\n",

"\n",

"What about the evidence (4)? Using the earlier rule (5) of probability calculus, we discover that (4) can be calculated from (2) and (3):\n",

"\n",

"$$ \\Large\n",

"P(D\\mid M) = \\int d\\Theta_M' P(D\\mid \\Theta_M', M)\\, P(\\Theta_M'\\mid M) \\; .\n",

"$$\n",

"\n",

"Note that this result is not surprising since the denominator must normalize the ratio to yield a probability (density). When the set of possible parameter values is discrete, $\\Theta_M \\in \\{ \\Theta_{M,1}, \\Theta_{M,2}, \\ldots\\}$, the normalization integral reduces to a sum:\n",

"\n",

"$$ \\Large\n",

"P(D\\mid M) \\rightarrow \\sum_k\\, P(D\\mid \\Theta_{M,k}, M)\\, P(\\Theta_{M,k}\\mid M) \\; .\n",

"$$\n",

"\n",

"The generalized Bayes' rule above assumes fixed values of any hyperparameters (since $M$ is on the RHS of all 4 terms), but a complete inference also requires us to consider different hyperparameter settings. We will defer this (harder) **model selection** problem until later."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

""

]

},

{

"cell_type": "markdown",

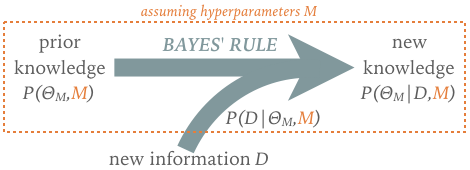

"metadata": {

"solution2": "shown",

"solution2_first": true

},

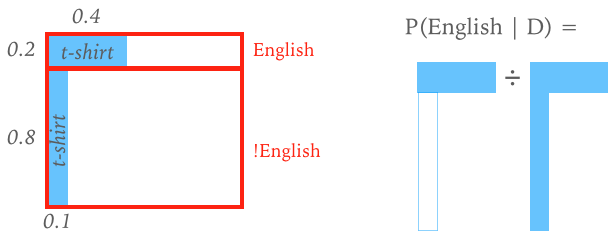

"source": [

"**EXERCISE:** Suppose that you meet someone for the first time at your next conference and they are wearing an \"England\" t-shirt. Estimate the probability that they are English by:\n",

" - Defining the data $D$ and model $\\Theta_M$ assuming, for simplicity, that there are no hyperparameters.\n",

" - Assigning the relevant likelihoods and prior probabilities (terms 2 and 3 above).\n",

" - Calculating the resulting LHS of the generalized Baye's rule above."

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "shown"

},

"source": [

"Solution:\n",

" - Define the **data** $D$ as the observation that the person is wearing an \"England\" t-shirt.\n",

" - Define the **model** to have a single parameter, the person's nationality $\\Theta \\in \\{ \\text{English}, \\text{!English}\\}$.\n",

" - We don't need to specify a full **likelihood function** over all possible data since we only have a single datum. Instead, it is sufficient to assign the likelihood probabilities:\n",

"\n",

"$$ \\Large\n",

"P(D\\mid \\text{English}) = 0.4 \\quad , \\quad P(D\\mid \\text{!English}) = 0.1\n",

"$$\n",

"\n",

"Note that the likelihood probabilities do not sum to one since the likelihood is normalized over the data, not the model, unlike the prior probabilities which must sum to one.\n",

"\n",

"- Assign the **prior probabilities** for attendees at the conference:\n",

"\n",

"$$ \\Large\n",

"P(\\text{English}) = 0.2 \\quad , \\quad P(\\text{!English}) = 0.8\n",

"$$\n",

"\n",

" - We can now calculate the LHS of the generalized Bayes' rule which gives an estimate of the probabilty that the person is English given the shirt (Note: we calculate the **evidence** $P(D)$ using a sum rather than integral, because $\\Theta$ is discrete.):\n",

"\n",

"$$ \\Large\n",

"\\begin{aligned}\n",

"P(\\text{English}\\mid D) &= \\frac{P(D\\mid \\text{English})\\, P(\\text{English})}\n",

"{P(D\\mid \\text{English})\\, P(\\text{English}) + P(D\\mid \\text{!English})\\, P(\\text{!English})} \\\\\n",

"&= \\frac{0.4\\times 0.2}{0.4\\times 0.2 + 0.1\\times 0.8} \\\\\n",

"&= 0.5\n",

"\\end{aligned}\n",

"$$\n",

"\n",

"\n",

"\n",

"You would have probably assigned different probabilities, since these are subjective assessments where reasonable people can disagree. However, by allowing some subjectivity we are able to make a precise statement under some (subjective) assumptions.\n",

"\n",

"A simple example like this can be represented graphically in the 2D space of joint probability $P(D, \\Theta)$:\n",

"\n",

"\n",

"---"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

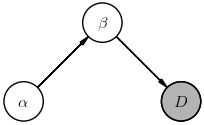

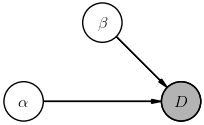

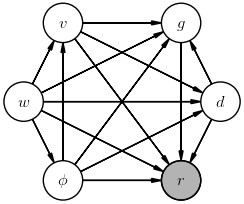

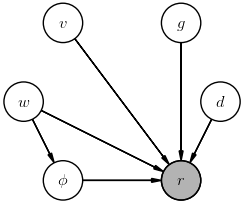

"The generalized Bayes' rule can be viewed as a learning rule that updates our knowledge as new information becomes available:\n",

"\n",

"\n",

"The implied timeline motivates the *posterior* and *prior* terminology, although there is no requirement that the prior be based on data collected before the \"new\" data.\n",

"\n",

"Bayesian inference problems can be tricky to get right, even when they sound straightforward, so it is important to clearly spell out what you know or assume, and what you wish to learn:\n",

" 1. List the possible models, i.e., your hypotheses.\n",

" 2. Assign a prior probability to each model.\n",

" 3. Define the likelihood of each possible observation $D$ for each model.\n",

" 4. Apply Bayes' rule to learn from new data and update your prior.\n",

" \n",

"For problems with a finite number of possible models and observations, the calculations required are simple arithmetic but quickly get cumbersome. A helper function lets you hide the arithmetic and focus on the logic:"

]

},

{

"cell_type": "code",

"execution_count": 3,

"metadata": {},

"outputs": [],

"source": [

"def learn(prior, likelihood, D):\n",

" # Calculate the Bayes' rule numerator for each model.\n",

" prob = {M: prior[M] * likelihood(D, M) for M in prior}\n",

" # Calculate the Bayes' rule denominator.\n",

" norm = sum(prob.values())\n",

" # Return the posterior probabilities for each model.\n",

" return {M: prob[M] / norm for M in prob}"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"For example, the problem above becomes:"

]

},

{

"cell_type": "code",

"execution_count": 4,

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"{'English': 0.5, '!English': 0.5}"

]

},

"execution_count": 4,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"prior = {'English': 0.2, '!English': 0.8}\n",

"\n",

"def likelihood(D, M):\n",

" if M == 'English':\n",

" return 0.4 if D == 't-shirt' else 0.6\n",

" else:\n",

" return 0.1 if D == 't-shirt' else 0.9\n",

" \n",

"learn(prior, likelihood, D='t-shirt')"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"Note that the (posterior) output from one learning update can be the (prior) input to the next update. For example, how should we update our knowledge if the person wears an \"England\" t-shirt the next day also?"

]

},

{

"cell_type": "code",

"execution_count": 5,

"metadata": {},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"{'English': 0.8, '!English': 0.2}\n"

]

}

],

"source": [

"post1 = learn(prior, likelihood, 't-shirt')\n",

"post2 = learn(post1, likelihood, 't-shirt')\n",

"print(post2)"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"The `mls` package includes a function `Learn` for these calculations that allows multiple updates with one call and displays the learning process as a pandas table:"

]

},

{

"cell_type": "code",

"execution_count": 6,

"metadata": {},

"outputs": [],

"source": [

"from mls import Learn"

]

},

{

"cell_type": "code",

"execution_count": 7,

"metadata": {},

"outputs": [

{

"data": {

"text/html": [

"

\n",

"\n",

"

\n",

" \n",

"

\n",

"

\n",

"

!English

\n",

"

English

\n",

"

\n",

" \n",

" \n",

"

\n",

"

PRIOR

\n",

"

0.8

\n",

"

0.2

\n",

"

\n",

"

\n",

"

D=t-shirt

\n",

"

0.5

\n",

"

0.5

\n",

"

\n",

"

\n",

"

D=t-shirt

\n",

"

0.2

\n",

"

0.8

\n",

"

\n",

" \n",

"

\n",

"

"

],

"text/plain": [

" !English English\n",

"PRIOR 0.8 0.2\n",

"D=t-shirt 0.5 0.5\n",

"D=t-shirt 0.2 0.8"

]

},

"metadata": {},

"output_type": "display_data"

}

],

"source": [

"Learn(prior, likelihood, 't-shirt', 't-shirt')"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"\n",

"https://commons.wikimedia.org/wiki/File:Dice_(typical_role_playing_game_dice).jpg"

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "shown",

"solution2_first": true

},

"source": [

"**EXERCISE:** Suppose someone rolls 6, 4, 5 on a dice without telling you whether it has 4, 6, 8, 12, or 20 sides.\n",

" - What is your intuition about the true number of sides based on the rolls?\n",

" - Identify the models (hypotheses) and data in this problem.\n",

" - Define your priors assuming that each model is equally likely.\n",

" - Define a likelihood function assuming that each dice is fair.\n",

" - Use the `Learn` function to estimate the posterior probability for the number of sides after each roll."

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "shown"

},

"source": [

"We can be sure the dice is not 4-sided (because of the rolls > 4) and guess that it is unlikely to be 12 or 20 sided (since the largest roll is a 6).\n",

"\n",

"The models in this problem correspond to the number of sides on the dice: 4, 6, 8, 12, 20.\n",

"\n",

"The data in this problem are the dice rolls: 6, 4, 5.\n",

"\n",

"Define the prior assuming that each model is equally likely:"

]

},

{

"cell_type": "code",

"execution_count": 8,

"metadata": {

"solution2": "shown"

},

"outputs": [],

"source": [

"prior = {4: 0.2, 6: 0.2, 8: 0.2, 12: 0.2, 20: 0.2}"

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "shown"

},

"source": [

"Define the likelihood assuming that each dice is fair:"

]

},

{

"cell_type": "code",

"execution_count": 9,

"metadata": {

"solution2": "shown"

},

"outputs": [],

"source": [

"def likelihood(D, M):\n",

" if D <= M:\n",

" return 1.0 / M\n",

" else:\n",

" return 0.0"

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "shown"

},

"source": [

"Finally, put the pieces together to estimate the posterior probability of each model after each roll:"

]

},

{

"cell_type": "code",

"execution_count": 10,

"metadata": {

"solution2": "shown"

},

"outputs": [

{

"data": {

"text/html": [

"

\n",

"\n",

"

\n",

" \n",

"

\n",

"

\n",

"

4

\n",

"

6

\n",

"

8

\n",

"

12

\n",

"

20

\n",

"

\n",

" \n",

" \n",

"

\n",

"

PRIOR

\n",

"

0.2

\n",

"

0.200

\n",

"

0.200

\n",

"

0.200

\n",

"

0.200

\n",

"

\n",

"

\n",

"

D=6

\n",

"

0.0

\n",

"

0.392

\n",

"

0.294

\n",

"

0.196

\n",

"

0.118

\n",

"

\n",

"

\n",

"

D=4

\n",

"

0.0

\n",

"

0.526

\n",

"

0.296

\n",

"

0.131

\n",

"

0.047

\n",

"

\n",

"

\n",

"

D=5

\n",

"

0.0

\n",

"

0.635

\n",

"

0.268

\n",

"

0.079

\n",

"

0.017

\n",

"

\n",

" \n",

"

\n",

"

"

],

"text/plain": [

" 4 6 8 12 20\n",

"PRIOR 0.2 0.200 0.200 0.200 0.200\n",

"D=6 0.0 0.392 0.294 0.196 0.118\n",

"D=4 0.0 0.526 0.296 0.131 0.047\n",

"D=5 0.0 0.635 0.268 0.079 0.017"

]

},

"metadata": {},

"output_type": "display_data"

}

],

"source": [

"Learn(prior, likelihood, 6, 4, 5)"

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "shown"

},

"source": [

"Somewhat surprisingly, this toy problem has a practical application with historical significance!\n",

"\n",

"Imagine a factory that has made $N$ items, each with a serial number 1--$N$. If you randomly select items and read their serial numbers, the problem of estimating $N$ is analogous to our dice problem, but with many more models to consider. This approach was successfully used in World-War II by the Allied Forces to [estimate the production rate of German tanks](https://en.wikipedia.org/wiki/German_tank_problem) at a time when most academic statisticians rejected Bayesian methods.\n",

"\n",

"For more historical perspective on the development of Bayesian methods (and many obstacles along the way), read the entertaining book [The Theory That Would Not Die](https://www.amazon.com/Theory-That-Would-Not-Die/dp/0300188226).\n",

"\n",

"---"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"The discrete examples above can be solved exactly, but this is not true in general. The challenge is to calculate the evidence, $P(D\\mid M$), in the Bayes' rule denominator, as the marginalization integral:\n",

"\n",

"$$ \\Large\n",

"P(D\\mid M) = \\int d\\Theta_M' P(D\\mid \\Theta_M', M)\\, P(\\Theta_M'\\mid M) \\; .\n",

"$$\n",

"\n",

"With careful choices of the prior and likelihood function, this integral can be performed analytically. However, for most practical work, an approximate _numerical approach_ is required. Popular methods include **Markov-Chain Monte Carlo** and **Variational Inference**, which we will meet soon."

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"### What Priors Should I Use?"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"The choice of priors is necessarily subjective and sometimes contentious, but keep the following general guidelines in mind:\n",

" - Inferences on data from an informative experiment are not very sensitive to your choice of priors.\n",

" - If your (posterior) results are sensitive to your choice of priors you need more (or better) data.\n",

" \n",

"For a visual demonstration of these guidelines, the following function performs exact inference for a common task: you make a number of observations and count how many pass some predefined test, and want to infer the fraction $0\\le \\theta\\le 1$ that pass. This applies to questions like:\n",

" - What fraction of galaxies contain a supermassive black hole?\n",

" - What fraction of Higgs candidate decays are due to background?\n",

" - What fraction of of my nanowires are superconducting?\n",

" \n",

"For our prior, $P(\\theta)$, we use the [beta distribution](https://en.wikipedia.org/wiki/Beta_distribution) which is specified by hyperparameters $a$ and $b$:\n",

"\n",

"$$ \\Large\n",

"P(\\theta\\mid a, b) = \\frac{\\Gamma(a+b)}{\\Gamma(a)\\Gamma(b)}\\, \\theta^{a-1} \\left(1 - \\theta\\right)^{b-1} \\; ,\n",

"$$\n",

"\n",

"where $\\Gamma$ is the [gamma function](https://en.wikipedia.org/wiki/Gamma_function) related to the factorial $\\Gamma(n) = (n-1)!$"

]

},

{

"cell_type": "code",

"execution_count": 11,

"metadata": {},

"outputs": [],

"source": [

"def binomial_learn(prior_a, prior_b, n_obs, n_pass):\n",

" theta = np.linspace(0, 1, 100)\n",

" # Calculate and plot the prior on theta.\n",

" prior = scipy.stats.beta(prior_a, prior_b)\n",

" plt.fill_between(theta, prior.pdf(theta), alpha=0.25)\n",

" plt.plot(theta, prior.pdf(theta), label='Prior')\n",

" # Calculate and plot the likelihood of the fixed data given any theta.\n",

" likelihood = scipy.stats.binom.pmf(n_pass, n_obs, theta)\n",

" plt.plot(theta, likelihood, 'k:', label='Likelihood')\n",

" # Calculate and plot the posterior on theta given the observed data.\n",

" posterior = scipy.stats.beta(prior_a + n_pass, prior_b + n_obs - n_pass)\n",

" plt.fill_between(theta, posterior.pdf(theta), alpha=0.25)\n",

" plt.plot(theta, posterior.pdf(theta), label='Posterior')\n",

" # Plot cosmetics.\n",

" plt.legend(bbox_to_anchor=(0., 1.02, 1., .102), loc=3,\n",

" ncol=3, mode=\"expand\", borderaxespad=0., fontsize='large')\n",

" plt.ylim(0, None)\n",

" plt.xlim(theta[0], theta[-1])\n",

" plt.xlabel('Pass fraction $\\\\theta$')"

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "hidden",

"solution2_first": true

},

"source": [

"**EXERCISE:**\n",

"\n",

"**Q1:** Think of a question in your research area where this inference problem applies.\n",

"\n",

"**Q2:** Infer $\\theta$ from 2 observations with 1 passing, using hyperparameters $(a=1,b=1)$.\n",

" - Explain why the posterior is reasonable given the observed data.\n",

" - What values of $\\theta$ are absolutely ruled out by this data? Does this make sense?\n",

" - How are the three quantities plotted normalized?\n",

" \n",

"**Q3:** Infer $\\theta$ from the same 2 observations with 1 passing, using instead $(a=10,b=5)$.\n",

" - Is the posterior still reasonable given the observed data? Explain your reasoning.\n",

" - How might you choose between these two subjective priors?\n",

"\n",

"**Q4:** Use each of the priors above with different data: 100 trials with 60 passing.\n",

" - How does the relative importance of the prior and likelihood change with better data?\n",

" - Why are the likelihood values so much smaller now?"

]

},

{

"cell_type": "code",

"execution_count": 12,

"metadata": {

"solution2": "hidden"

},

"outputs": [

{

"data": {

"image/png": "iVBORw0KGgoAAAANSUhEUgAAAXkAAAEhCAYAAACa3tCnAAAABHNCSVQICAgIfAhkiAAAAAlwSFlzAAALEgAACxIB0t1+/AAAADl0RVh0U29mdHdhcmUAbWF0cGxvdGxpYiB2ZXJzaW9uIDIuMi4zLCBodHRwOi8vbWF0cGxvdGxpYi5vcmcvIxREBQAAIABJREFUeJzs3Xd8W9X5+PGPhmVbllfsJM7ytk+cSUhIAgQSCIQkzEILlNIWWgq0tLS0vy+j4wsdwLeloYWWUVYpBQqUUWZCISSQvYezTvZwhmM73lvS/f0hRXFCYju2rCvJz/v14kV0Jd37+Eh6dHTuuc+xGIaBEEKI6GQ1OwAhhBA9R5K8EEJEMUnyQggRxSTJCyFEFJMkL4QQUUySvBBCRDFJ8kIIEcUkyQshRBSTJC+EEFFMkrwQQkQxSfJCCBHF7GYHEE6UUtnADqC4zWYL8JjW+oUTHnsFcJHW+s7QRSja8r9eG7TWrhO2/wbYrrV+SSllAH211uWd3OcU4K9a6xHd2c/pUEqNA97UWmcHe9+92el8nju5v0uBCVrr/z3N5wXeR6d7zGCQJP9ljVrrM47eUEoNAjYopVZqrdcf3a61fg94z4wARftO90PY0/sRpurU57mTzgL6nG4AZr+PJMl3QGu9Xym1DZimlHoCSACqgX8AX9VaX6aUGgw8BWTj6yn8Q2v9iL8nsQDY7L9vstb6YOj/it5FKfUivh7+H9tsywA+BZ7SWj+hlCoCHgPSABvw+El+rZ24n18rpSb6n/OI1voJ/+N+BXwdcANbgR9qrQ+d6n3hf873gbvwvZfa9jRFD2rzeS5USl3JyV+3q4FfAl7AA/wP0AzcDtiUUtVa618opb4L/ADfsHeF//lb/O+bPkAe8AHQH//7SCl1HvAI4ARagF9qrecopW4Cvos/v2itLwjW32x6kr/8Z+8+Anythw/z7/dnXfk/XXmiUupsIB+IB4YD2VrrGv+LctQrwLta60eVUsnAF0qpfcBSYDBwg9Z6Qbf+gjBisVh2A0sNw7jef/tq4FHgHsMwXvdv+ydwHlBoGEaLxWJJB1YCHxqGcYf/Md8DfgHcYhjGp/5tHxmGMTPIIQ/G9xo9pLV+RSllB94Evqm1Xu1/zZYopTZ1sJ+dWus7lFJj/I9/BrgRmAGcpbWuV0o9ALwITOfU74stwAPAaH9SeTrIf69prn39+yH5PL9x3VPd/TwXcerX7RHgG1rrpUqpacAUrfVv/K9Tuj/BTwa+DZyntW7wP+4d/34BnFrr4f5jvuj/fxq+990VWutlSqnhwOdKqbP8zwnkl678badiepIPQ/FKqbX+f9uBcuAb+L6N15/4AiilEoBzgWkAWutq/4s6A1+SdwNLQhO6OIWPgBLgVf/tQny9rBeUUkcfEw+Mwfer61SOPn8tEAsk4Xud/661rvff9xjwC39SP9X7YhDwX631If9znsGXXETwnerzfDMnf90cwGvAO0qpD4FPgD+cZL+X4vuyWNzmPZSqlDo6nLPwJM+ZgG9sfhmA1nqjUmoRMAUwOEl+CQbTk7y/h92lb+UectwY3lH+nnvdSR5vxfdT/MRtMf5/N2ut3UGN0GSGYWSfcPtt4O0Ttn3zhNvl+IYt2m57Fnj2hG3B7sUD3IbvF8NPgVn4hmeqTxir7Y9v6GRiO/tpBdBaG/4PtsW/r7Yr71g59rlq733R9r6oeX/4e9iR8Hm+hZO/bhZ/T/0F4GLgJuBnwPgTdmED/qm1vse/PyswEKj033+yXHHie+XocWPwDd2c7DndJlMou0lrXYuvx34HgL8H9y18PQARHpbg+2n9S6XUCEADjUqpGwGUUkOADcDYLux7DvAd/y86gDuBL7TW1Zz6ffFffOd4Bvufc1NX/ijRLSd93QCPUmo3vuGWp/GNuY9SSsXi+zI++iX9MfB1pdQA/+3bgbkdHHMJMFQpNR7AP1xzPjA/GH/QqUiSD45vAFOVUsXAcny92hdNjaj3SFBK1bX9D0g88UFaaw38FnjZv+lK4Bal1Hp8SfdXWutFXTj+8/hO6C5XSm0GzsT3foBTvC+01sXA3cBcpdRKIK4LxxXdc9LXzf+r+yfAq0qp1cC/ge9orZuBz4BLlFJ/0Vr/F/g98In/PXQDcLXW+pTrqfqn334N+Iv/PfEqcLPWemvP/ZlgkTVehRAieklPXgghopgkeSGEiGKS5IUQIopJkhdCiCgmSV4IIaKYJHkhhIhikuSFECKKSZIXQogoJkleCCGimCR5IYSIYiGvQul2e4zKyoZQHzYspaY6kbbwkbY4RtriGGmLY/r2TTyxqmmnhLwnb7fbQn3IsCVtcYy0xTHSFsdIW3SfDNcIIUQUkyQvhBBRTJK8EEJEMUnyQggRxSTJCyFEFJMkL4QQUUySvBBCRLGQXwwlRDgwDIMWbytN7maaPU14DW/gPgsWYu2xxNpiibU5sFqkLyQilyR5EZUMw6CquZpDDYcpbSjjcEM5FY1HqGmpobq5ltqWOrx4O94R4IpJIDk2iSRHIn3iUujn7Et//3/p8WnyJSDCmiR5ERWa3M3srN7Njurd7K0pYW9tCXWt9V96nM1iIyHGSf+EvjhsDhzWGGKsMdisx66s9BpeWj2ttHhbafG00OBupKyhnP11B7+0v1hbLEMSB5KZOJic5CwKUnJJdLh69G8V4nRIkhcRyWt42VNTwobyTejK7eypKTmuZ57kSCQ/JYe0uD6kxCaTGpdCsiOJWJsDi6VLJUBo8bRS01JLVXMVlU3VHGmq5HBjOTuqdrO9ahfsWwDAgIT+FKbmMyJtKAWpecRY5WMmzCPvPhExPF4PWyt3sOrwOjaUb6a2tQ4AK1b6J/RlkGsAg1wD6O/sR7w9LujHd9hiSI/vQ3p8n+O2t3haKWv09fT31x3kQP0hDtaX8nnJImJtDor6FDKm3yhGpg8j1uYIelxCtEeSvAh7e2r2sezQKlaXrg8kdqc9nmF9FLnJWQxJHIzDFmNafA5bTOALBnxfRgfqD7Greg+7avawtmwDa8s24LA6GNV3GOMzxlLUp0DG8kVISJIXYanJ3cSK0rUs2r+MfXX7AYi3xzEqfRiFqfkMTMjo8rBLT7NZbQxJHMSQxEGcZ5zNkaZKtlbuQFduZ2XpWlaWrqVPXArnDpzAxAHjSIlNNjtkEcUshmGE+phGWVltqI8Zlvr2TUTawudoW1Q0VjK/ZCGLDiyn2dOMBQu5yVkMTysiK2lwRPd+DcOgtOEwGyu2oCu30+p1Y7VYGdtvNFMzz2dI4iBA3hdtSVsc09V68pLkTSRv4GMaYqp5bc0HrDlcjBcvCTFORqUPZ1iawhWTYHZ4QdfsaWFr5XbWlW2goqkSgMKUPKZlX8B5hWdSXl5ncoThQT4jx0iSj0DyBob9dQf5aNenrC0rBiA9rg9n9htNYWrecdMao5VhGOytLWHV4XXsq/UNS6m0XC4eciFDUwvCdkgqVOQzckxXk7yMyQtTlDVU8N7O2aw+vB6AQYkZjO07huykIb0qsVksFrKShpCVNITDDWUsP7QaXbETXbGT3ORsvpI/k9zkbLPDFBFMevIm6o29lLqWembv/pQF+5fiMTz0d/Zl4oBxjB6iqK5uNDu8sNBoq+PTbYvYWb0bgDP6juTKvOn0c/Y1NzAT9MbPyKn0aE9eKTUB+L3Wesop7n8GOKK1vrcrQYjo5/F6WLB/KR/s/JhGTxPJjiTOHTie/JRcLBZLr+q9d2RAYj8uz72EA3WHWHhgKWvLillfvpELhkxiZvZFxPXANQAienWY5JVSdwPfBL58jbjv/tuAkcDnwQ1NRIvtVbt4Q/+H/fUHibU5OH/QOYxKH9Yrxty7Y6Arg68VXMn26l0s3L+UuXu/YOWhtVydfylj+58hX4yiUzrTk98BXA3888Q7lFJnAxOBvwFDgxuaiHQNrY28s/0DFh9cAcCwPopzB07AGRNvcmSRw2KxUJCSS05SJitL17KqdC1/3/QvFh9cwQ1DryE9Ps3sEEWY6zDJa63fUkpln7hdKTUAeAD4CnBt0CMTEW1d2QZe0+9Q01JLenwaFw6ZxICEDLPDilh2q52JA8ZR1KeQ+SWL0JXbeXDZo1yeN50pg8+N6OsHRM/q1IlXf5J/TWs9sc22O4FvA7VABuAE/ldr/WIHuwv5mV4ROnXN9Ty36l8s3rcKm8XG5OyJnJM5VoZmgsgwDDYc1szZNo+G1iYK0nL44YSbGJDYz+zQRM/quXnyJ0vyJ9x/EzC0kydeZXaNX7TNHNh8ZCv/3PQG1S01DEjoz0WZk+kTl9qp56akOKmqaujhCCNDZ9uiobWRz0sWsbVqBw5rDFcXXM6kgROiaqw+2j4j3RGyefJKqRsAl9b6ma4cUESfVk8r7+6YzbyShVixcvaAsxjX/wwZQuhhzph4ZuRcRF5lNp/tW8Br+m02lG/mxqKvSU17ESDz5E0UDb2Uww1lPL/hFUrqDpAam8Il2RfSvwvzuaUnf0xX2qK2pY5P9sxnX91+khyJ3Dz8BgpT83oowtCJhs9IsHS1Jy9dLdFlq0rX8n8rHqek7gAj0oby9aFXdynBi+5LdLj4Sv6lTBo4gdqWOh5f8wyzd809bu1a0TtJWQNx2txeN29t+4Av9i8mxmrnkqwLGdqnwOywej2LxcLY/mcwICGDObvn8sGuj9lRvYubh99AQozT7PCESaQnL05LTUstj695hi/2LyYtrg/Xq6slwYeZga4Mvj70GrKTMtl8ZCu/X/H4SdenFb2DJHnRabtr9vL7FY+xo3o3BSm5XFt4Vadnz4jQirfHcUXudMZnnElF0xH+uPKvrCpda3ZYwgQyXCM6ZeWhNfxz87/xGB7OHTiBsf1GR9VUvWhksVg4e8BZ9ItP5+M983hh46scrC/l0pxp8tr1IpLkRbsMw2D27k/5cNcnOKwOLs29mOykTLPDEqchLyWH6+NSeG/HHGbvnsvhhnJuLLrW1HVxRejIcI04pVavm39sep0Pd31CkiORawuvlAQfofrEpXKduoqBCRmsOryOx9b8jZoWmZrYG0iSFyfV6G7kibXPsaJ0NRnOflxX+BXS4vuYHZbohnh7PF/Jv4yhqQXsrtnLrJVPcLih3OywRA+TJC++pKq5mj+tepptVTvJS87hmoLLpXJklLBbbUzLuoDxGWdS3nSEWaueYE/NPrPDEj1Ikrw4Tmn9YWateoL99QcZlT6MmTkXYbfKqZtocvSE7AVDzqO+tYE/r/4bmyq02WGJHiJJXgTsrS1h1uqnONJUxdkDzmLK4ElSfyaK+b7EL8ZjeHh6/YuB9XZFdJFPsABgZ/VuHl/9DPWt9Uwdcj7jM86UaXa9QH5KDl/Jn4nNYuWFDa+w9OBKs0MSQSZJXrDlyDb+suZZmjzNTM+ayoj0IrNDEiE0yDWQr+RfhsPm4J+b3+DzksVmhySCSJJ8L7exQvPUur/jMTxcmjMN1Sff7JCECTIS+vHVgstx2uN5Y+t/+HSvLNkcLSTJ92IbKzTPrP8HYHB57gzyUrLNDkmYKD0+ja8WXIErJoF3tn8oiT5KSJLvpY5L8HnTyUoabHZIIgykxqVwTcHlkuijiCT5XujEBJ+ZKAleHJMSmyyJPopIku9ltlbu4NliSfCifScm+i/kZGzEkiTfi+yq3uM/yerl0txpkuBFu1Jik7k6/zKc9nhe3/ofmV4ZoSTJ9xL7ag/wxLrnafW2MiP7Iik0JjolNS6Fq/IvJdYWy8ub/y0XTEWgTiV5pdQEpdT8k2z/ulJqmVJqsVLqaaWUfGmEocMNZfx17bM0upuYljWF/JQcs0MSEaRvfBpX5c0kxmrn7xtfZXPFVrNDEqehw6SslLobeA6IO2F7PPA74AKt9TlAMnBZTwQpuq66uYa/rH2OutZ6Lhg8iaF9Cs0OSUSgjIR+XJE3HQsWnil+id01e80OSXRSZ3reO4CrT7K9GThHa93gv20HmoIVmOi+htZGnlj7PEeaKpmQMZZRfYebHZKIYINcA5mRPZVWbytPrn2B0vrDZockOsFiGEaHD1JKZQOvaa0nnuL+HwEzgZla64522PEBRbe1eFp58PO/sLlsG+MGjWZmwQVSi0YExeoDxbyvPyXdmcrvpt5NH2eK2SH1Fl36AHerhqx/DP4PQCFwTScSPABlZbIiDUDfvok90hZew8sLG19lc9k28lNyObvveKqrG4N+nGBKSXFSVdXQ8QN7gXBvi1xnHmcPqGbJwRX8dt7j3HXm94m3x3X8xC7oqc9IJOrbN7FLz+vuidK/4Rurv6rNsI0w2bs7ZrPm8HoGJmRwSdYFUi5YBN1Z/ccwMn0Y++sO8vyGl/F4PWaHJE7htHvySqkbABewEvgusAD4TCkF8JjW+p2gRihOyxclS/h07+ekxqZwee4lsuCH6BEWi4Upg8+ltqWOzUe28pp+hxuGXiNDgmGoUxlAa70bmOj/96tt7pIuYhjZUL6ZN7b+h3h7HFfmzSCuh35CCwFgtViZkX0Rb257j8UHl5Me34dLsi80OyxxAknSUWJ/3UFe2PgKNouNK3KnkxybZHZIohdw2GK4Im86iTEu3ts5hzWHi80OSZxAknwUqG2p4+n1f6fZ08K0rAvISOhvdkiiF3HFJHB53nRirHb+sek19taWmB2SaEOSfIRr9bp5pvgljjRVMTFjHAWpuWaHJHqhvvFpTPfPof/b+hepbq4xOyThJ0k+ghmGwb+2vMXO6t0UpuQxPuNMs0MSvVhucjbnDpxAVXMNf1v/Ii2eVrNDEkiSj2jzShay7NAq+jv7cnHWFJnZIEw3tt9oivoUsqe2hH/pt+jMxZaiZ0mSj1BbK7fzzrYPcdqdXJYjUyVFeLBYLFw45Dz6O/ux/NBq5pcsMjukXk+SfASqaKzkuQ2vgAUuzbkYlyPB7JCECLBb7VyWMw2nPZ63t33A1srtZofUq0mSjzAtnlaeLX6J+tZ6Jg8+l4GuDLNDEuJLXI4EZuZcDMBzG17hSFOlyRH1XpLkI4hhGLym32Zf3X6Gpw1lZFqR2SEJcUqDXAOYPPgc6lvrebb4n7R63WaH1CtJko8giw8sD5xonTJ4kpxoFWFvZPowivoUsre2hDe3vWd2OL2SJPkIsbe2hDe2vkucLZaZORdjt9rMDkmIDlksFi4YMon0uD4s3L+U5YdWmx1SryNJPgI0tDbwXPHLuA03l2RdSJKjayVHhTBDjDWGS3On4bA6eHXLWxyoO2R2SL2KJPkwZxgGL21+nYqmI4zPOJPsZFmAW0SelNhkLs6aQqvXN3GgyS2LyIWKJPkwN2/fAorLNzPENYgJGWPNDkeILstPyeHMfqM43FjOa/oduVAqRCTJh7HdNXv5z47ZOO3xXJJ9oSz+ISLeOQPHk+Hsx4rSNSw9uNLscHoFyRphqtHdyAsbXsVjeLgk60ISYpxmhyREt9ksNmZkX0SszcHrW//DwfpSs0OKepLkw5BhGLyy+U0qmo5wVv8xZCYNNjskIYImKTaRizIn0+pt5fkNL9PiaTE7pKgmST4MLTqwjDVlxQxMyGDigHFmhyNE0OWn5DIqfTgH60t5a9v7ZocT1STJh5lD9Yd5c9v7xNocTM+eKuPwImqdN2iib/78gWWsK9tgdjhRq1MZRCk1QSk1/yTbL1dKrVBKLVFKfS/o0fUybq+bv298lVZvK1OHTCbR4TI7JCF6jN1qZ3r2VOwWG69sfpOq5mqzQ4pKHSZ5pdTdwHNA3AnbY4A/AdOAycCtSimpltUN7+/8mJK6Awzro2SFJ9ErpMX3YdKgidS7G3hp0+t4Da/ZIUWdzvTkdwBXn2R7EbBda12ptW4BFgLnBTO43mTLkW18uvdzUmKTmTz4XLPDESJkRqUPJycpE125nc/2LTA7nKjT4UoTWuu3lFLZJ7krCWj7+6oWSO5of9/93X/xeOQiCACbzYLHY2BYW2jK+QzsFmo3j+C5Nb1vWpnVasXrlV4c9M62MGyFkFfKO1tnM/uTBqzNvlRy9DMi4MX7L+nS87qznFAN0LaISiJQ1dGTWty9683bHo/b9+Zt7b8eI6YJS2khNKbQG1vI6+mNf/XJ9cq2cMdi3T8Sb9ZKmgesImb3+VgMW+AzIrquO0l+M1CglOoD1AHnA3/s6El33ziOqqqGbhw2eqSkOFlRsoo5pSWkxqRx/hln9trZNC5XHHV1Us8EenNbJLOmqpLd7GDkxArOSZtCSopT8kU3nXZGUUrdoJS6VWvdCvwU+BhYArygtd4f7ACjWW1rLfPK5mCz2BibenavTfBCHDUy6UwSbC5WVy3lQGOJ2eFEBUuoiwQVby835JvZd1XrnPK32F6zjdHJ48hNKDQ7JFP13t7rl/X2tqhoLuOLik9Isqfw/RE/pKHWY3ZIYeG8cZldWiVIuo4m2Vy7nu012+gXm0GOs8DscIQIG2mxfSlwDaPGXcXc/f81O5yIJ0neBLXuGhaUf0qMNYYxKRNkGT8hTlCUOJJEezIry5ZT0rjH7HAimiT5EDMMg88Of0SL0cLYvhNw2hLMDkmIsGOz2BibMhELFj49/CEtXili1lWS5ENsc+169jbuol/sAPKTZJhGiFNJdaQxvM9Iat3VLK6YZ3Y4EUuSfAjV+Ydp7JYYxqSMl2EaITowqs8YkuzJFNeslmGbLpIkHyKGYTCvbA4tRgsjk8bIMI0QnWCz2jjTP2wz9/BHtHpbzQ4p4kiSD5FtdZvY3bCDvo7+ZDnzzA5HiIiR6kgjP2EoNe4qlh35wuxwIo4k+RBo9DTwefkn2Cw2mU0jRBcMTRxJgs3F2uoVlDYdMDuciCJJPgQWlH9Kk7eRosRRJNilRrwQp8tutTMmZQIGBnPLPsJjyAVSnSVJvoftrt+BrttIakwf8hOU2eEIEbH6xvqGOitaylhdtdTscCKGJPke1OJtYX75HCxY/MM00txCdMeIpDHEWeNZfmQRlS0VZocTESTr9KDlRxZQ666hwDWM5JhUs8MRIuI5rA5GJY/Fi4f5ZR8T6tpbkUiSfA8paz7E2uoVJNhcDE0cbnY4QkSNgXFDyIgdREnTHrbUyQLgHZEk3wO8hpfPyuZgYHBG8lnYLN0p2y+EaMtisTA6eRw2i52F5XNp9EhV2/ZIku8BxTWrOdx8kCHx2fSLG2B2OEJEHac9gaLEkTR5G1kkJQ/aJUk+yOrctSyp+JwYi4ORSWeaHY4QUSsvQZEck8rm2vXsb9xrdjhhS5J8kC2smEur0cLwpDOItcWZHY4QUctqsTImeTwA88s+lrnzpyBJPoj2NexmW91mUmPSyJbSBUL0uFRHGjnOfI60lrOuaoXZ4YQlSfJB4jHczC//GAsWzkg+S0oXCBEiw5JG47DGsqxyIXXuGrPDCTuS5INkTdVyqlqPkJNQQIqjj9nhCNFrOKyxjEg6A7fRyoLyuWaHE3Y6nNunlLICTwKjgWbgFq319jb3/z/g64AXeEhr/U4PxRq2alqrWFG5iFhrHEWJo8wOR4heJzM+l931O9hev4U9DTvJcuaaHVLY6ExP/iogTmt9NnAvMOvoHUqpFOBO4GxgGvDnnggy3C2omIvbcDMiaQwOq8PscITodSwWC2eknIUFC5+X/xeP4TY7pLDRmSQ/CZgDoLVeCoxrc189sAdI8P/nDXaA4W5vwy521m8lzdGXIfHZZocjRK+VHJNKbkIB1a2VrJWTsAGduRQzCahuc9ujlLJrrY9+Ve4DNgE24OHOHDQlxXlaQYYrj+Fh0f65WLAwMeMcEuPiT3sfLpdMszxK2uIYaYtjTqctxsafRcnuvayoWsT4QWeR6EjqwcgiQ2eSfA2Q2Oa2tU2CnwEMAHL8tz9WSi3SWi9vb4dVVdFxGfKaquWUN5WR4yzA4U6grq7ptJ7vcsWd9nOilbTFMdIWx3SlLYa5RrGmejmzd33EtP5X9FBkkaMzwzWLgJkASqmJQHGb+yqBRqBZa90EVAEpwQ4yHNW761h2ZAEOi4OiJDnZKkS4yHLmkhLTB123kQON+8wOx3SdSfLvAE1KqcXAn4C7lFI/VUpdobVeAKwAliqllgBbgU96LtzwseTIfFqNFoqSRhNrjTU7HCGEn8ViZVTyWAA+L/8vXqPXnSo8TofDNVprL3D7CZu3tLn/fuD+IMcV1kqbDrC5tphkewo5cmWrEGHHNxEih32Nu9hUu54RSWeYHZJp5GKo02QYBgsqPgVgZPJYWe1JiDA1PGk0NoudpRWf0+zpvec4JEOdpm11mznYtJ+BcUPoG9vf7HCEEKcQb3OiXMNo9Dawsmqx2eGYRpL8aXB7W1lUMQ8rVkYkjTE7HCFEB/JdQ3HaElhbtYKq1iNmh2MKSfKnYU31cuo8NeS5hpJgd5kdjhCiAzaLneFJZ+DF22sXF5Ek30l17lpWVi4h1hqHcsmarUJEikFxmfRx9GVn/Vb2New2O5yQkyTfSUuPfIHbaKUocRQx1hizwxFCdJLFYmGUf5W2hRVzMQzD5IhCS5J8J5Q1l7K5dj1J9mSypbqdEBEn1ZHGkPhsylsOs6W2uOMnRBFJ8h0wDIOFFZ8BMCJpjEyZFCJCDUscjRUbS458Tqu31exwQkYyVgf2NOykpHE3/WIH0D9uoNnhCCG6yGlPIN+lqPfUsba63fJaUUWSfDu8hpdFFZ9hwSJTJoWIAoWu4cRaY1lVuYQGd73Z4YSEJPl2bKpdx5HWcjKduSTH9Iq6a0JEtRhrDEMTR9JqtLKscoHZ4YSEJPlTaPW2sOzIAmwWG8NkST8hoka2M59EexIba9ZypKXC7HB6nCT5U1hbvYIGTz35CUXE2U5/MRAhRHiyWqwMSxyNgcGSI/PNDqfHSZI/iUZPA6sql+KwxlLgKjI7HCFEkA2IG0yfmHR21m/lYFOJ2eH0KEnyJ7GichGtRgtDXSPkwichopDFYmG4v/zwoop5UX2BlCT5E1S3VlFcvRqnzUVOQr7Z4Qghekh6bD8y4gZxsKmE3Q3bzQ6nx0iSP8HSI5/jxcuwxFFYLTazwxFC9KDhiaMBC4sr5kftClKS5Nsoay5la90mkmNSGRyfZXY4QogelhSTQpYzhyOt5Wyp3WB2OD1CknwbS458DsDwxDMd5hZrAAAgAElEQVSwWCwmRyOECIWixFFYsbK8cgEew212OEEnSd7vQOM+9jTsIN3Rj36xGWaHI4QIkXibk9yEQmrdNWyoXmN2OEHX4ULeSikr8CQwGmgGbtFab29z/wyOLeS9GrhDax1Rp6oNwwj04ocljZZevBC9TKFrGLsbtrOicjFFSaNxWB1mhxQ0nenJXwXEaa3PBu4FZh29QymVCDwCXKa1ngjsBtJ7IM4etadhJwea9pERO4g0R1+zwxFChFisLY78hKE0ehtYV73C7HCCqjNJfhIwB0BrvRQY1+a+c4BiYJZSagFQqrUuC3qUPcjXi58P+HrxQojeKd9VhMMay+rKpTR6GswOJ2g6HK4BkoDqNrc9Sim71tqNr9d+AXAGUAcsUEot0VpvbW+HKSnOrsYbdBuPFFPecpicxDwGpYZ+LN7ligv5McOVtMUx0hbHhK4t4hjpGc2qsuVsbFzFRYMvCdFxe1ZnknwNkNjmttWf4AEqgBVa60MASqkv8CX8dpN8VVV4fEt6DS/zSuZiwUJ+/DDq6ppCenyXKy7kxwxX0hbHSFscE+q2GGTPYaO1mBWHl1IUN4YEuytkx+4pnRmuWQTMBFBKTcQ3PHPUKmCEUipdKWUHJgKbgh5lD9lat5HK1gqynLm47IkdP0EIEdVsFhtDE0fgNtysqlpidjhB0Zkk/w7QpJRaDPwJuEsp9VOl1BX+8ff7gI+BZcDbWuuIuKLAY3hYdmQhVqwo1wizwxFChIksZy5OWwIbatZQ564xO5xu63C4RmvtBW4/YfOWNve/BrwW5Lh63JbaYmrcVeQmFOK0J5gdjhAiTFj9vfnVVctYUbmYC/pONzukbumVF0N5DDfLjyzCig3lGm52OEKIMDMkPgeXLZFNNeuoaa0yO5xu6ZVJfmPNOuo8NeQmFMiCIEKIL7FarAxNHIEXLysqF5kdTrf0uiTv9rpZWbkYm8VOoWuY2eEIIcLU4PgsEu3JbK4tpqr1iNnhdFmvS/Iba9dS76kjN6GQWJvMRRZCnJzF35s3MFhZudjscLqsVyV5Xy9+CTaLnYKEoWaHI4QIc4PiMkm0J7OldkPE9uZ7VZLfWLuWBunFCyE6yWKxRHxvvtckeenFCyG6ItJ7870myUsvXpyotaWFmqoqmpuOXTZfW11NZXk5Xq9vKTjDMHC3tkb1Qs+ifZHem+8VSV568dHH6/EE/l1ZXs68Dz5g+6ZjFTU+fP11fvOjH1FVUQH4kvV3Z8zgdz/+ceAxKxYs4PYrrmDBxx8Htj398MPccfXVNDU2AtDY0MC3pk5l1n33BR6z+NNPuffmmylecawk7cdvvcXb//hH4MvA7XZTun8/Lc3NQf7LhRkiuTffK5L8sV58gfTiI8ye7duZ/cYbVJSWBrbdc9NN/OzGGwO3Sw8c4Nk//IGVCxce27Z/P1vWraO+thbw9caG5ObSb8CAwGPS+/Vj/JQp9G2zrWj0aM6eOpWYmBjfBsNgxLhxZBUUBB5TX1dHeWkpbvexpeLmffABH73+emDBmdKSEu76+td56fHHA49ZPHcuz8+aRemBA4FtTQ3hUaxPtO/43nxk1bSxhPpnaPH2ciOUVSg9hpuX9jxNo7eBS/pdGVZJvrdXGzQMg5qqKpJTU3G54jhQcoi//PrXZBcU8I0f/ACA9//1L/711FP87KGHGDtpEgCP3X8/TQ0N3PPII4BviGXNkiVkFxSQmZcHQEtzM3a7HavNFpK/pWTXLupqaxk6ahTg+5J55x//YMS4cUyaNg2AZ//wB+Z98AF/fPllBmZmAnDLzJkMzs7mgSefBKDi8GHKD5YwICuXpJSUkMQezsLpM2IYXuaWfUS9u45vZt5GUkxoX5/zxmV2acm6zpQajmiba4up89SSl6DCKsH3Nl6PhwP79hEbGxvoOT/x29+y+NNPefq993C5MnC6XGxZt47Y2NjA88aeey79Bgwgf9ixC9d+/OtfH7fvxORkzp9+fH0RR5t9hMLgnJzjbvcfNIjbf/7z47Z94447uOSaa+g3cCDgOydQMHz4cb8k1i1bxnOPPMKt99zDlEsvBWDue+8BMGXmTGz2qP/Ihi2LxUqhazirqpawqmppxNS0iep3jMfwsLJyCVascnVriJWXlrJ/zx5Gjx8PgC4u5rd33sml113HN+64A4C8oUNpbmwMDFnY7Xaenz37uAQ9MDMz0OuNdM6EhMAvDYAYhyPwa+SovKIibvj+bSj/LwKA9199lYb6ei68/HLA17b/eeklzp46leFnnhma4AXguwp2S20xm2rWc1bqObjsSWaH1KGoTvJbazdS664m1yk1anqS1+Nh9/btJKWkkN6/PwB/eeABdmzZwvOzZxMbF0d2YSHnT59OYZvkNePaa5lx7bXH7SvUPfBwk5Wfz/AzRhw3RPHjX/+a6srKwHj/9o0b+ez99xkwZEggyX/yzjvU19Ux/ZpriHOGz8pr0cZqsVKYOJw1VctYVbWUyenTzA6pQ1F74tVreFlRtRgLVgoSpRcfTF6vl8NtTh6uXryYX37ve3wxZ05g24VXXMF1t96Kxz8LJt7p5Paf/5yzzjsv5PFGuhylOGPixMDtceefz4PPPsvZU6cGtn367rv856WXsDscADTU1fHmCy+wS+uQxxvtMuNzcNoS2Fizjnp3ndnhdChqe/Lb6jZT3VpJtjMPp03qxXeXYRiBnuQDP/gB+3fv5m8ffIDdbmfo6NFccNllFAw/VrZ58owZZoUa9ex2OzlKHbftvlmzOLhvH3b/mP2W9et5+8UXMQwj8NitxcU4XS4GZWcHXktx+qwW3/Dv2uoVrKlaxqT0qR0/yURR2ZM3DN80JwsWCqVefLds37SJu7/9bf779tuBbaPGj+esyZNprK8HwJWUxPfuvpuR48aZFWavl5KWRtEZZwRuDx09mp899FBgZg/Aq089xT0330x9na/36fV4AtcDiNOT6cwlzhpPcc0aGj3hPQ02KpP8robtHGktY3B8VlQsxBtKKxYs4O+PPhq4qCclLY3DBw5QW10deMxXv/Mdbr/vPhKTk80KU3TAmZDA2EmTGDBkSGDbRVddxVXf/CauRN96xts2beK2yy/n47feMivMiGWz2ChwFeE2WllfvcrscNoVdcM1vl68r8i/9OI7dvSy/r4ZGQAsmTuXpZ99xkVXXcWQ3FzS+/fnmQ8+6PUnRKNB2149QFNjIxmDB5Puf+0B3nj2WeJdLmZ+7WsyXbMD2c58dN1G1lWvZEzKeBzW8PyMdPgqKqWswJPAaKAZuEVrvf0kj/kQeFdr/XRPBNpZJY17KG0+yIC4wSTFSE+zPfv37OGem27inKlT+cEvfwnAV771La688cbj5n1Lgo9Oo8ePZ/T48cdKMbS2MufNN+nTty+XXX894LvQrKmh4bi5/MLHbrWTl6DYXLueDTVrOTNlgtkhnVRnhmuuAuK01mcD9wKzTvKY3wF9ghlYV62s8l1yLGu3ftn65ct55N57qT7iq70xMDOTM885h8KRIwOPGZKbS1Z+vpyY60WOvtb2mBj+/Prr3PGrXwW2fTF7Nj++7jqWz59vYoThKzehELslhjVVy3B73R0/wQSdSfKTgDkAWuulwHFn15RSXwW8wOygR3eaDjXtp6RxN/1iM0h1pJkdjum8Hg9lBw8Gbu/fs4c1ixdTvHIl4Ptw//TBB7noyivNClGEmaSUlONm7gzIzGTkWWdRNGYM4Js++/RDD7Hiiy/MCjGsOKwOchIKaPDUs7l2vdnhnFRnBt2SgOo2tz1KKbvW2q2UGgHcAHwV+N+eCPB0HC0cJGPxvgR/tIjXo6++isVi4bxLLmH0hAlRcwWp6HlnnnMOZ55zTuD2Lq1910NYLJx1/vmAr1JnXHx8r/31l5+g2FG3hVVVSxmedAZWS3jNZ+lMkq8BEtvctmqtj/4u+RYwCPgMyAZalFK7tdZzaEdKSvCvyCtrPMyuhm2kx/Ulu09mxLzhXK7g1NNpbmpi4SefMjg7GzVyBADjJp2Du9WN3WYQ74zH5YojY2C/oByvJwSrLaJBuLbF6LPG8MSbr2Oz2wIx/vXXD7Bv5y4efu5vJKUGv2hXuLbFUS7iyG8qZGv1FvYbOxiZOtrskI7TmSS/CLgceEMpNREoPnqH1vruo/9WSj0AHOoowQP0RBXK+YfnA5AXX0R9fWTU8A5mhb1tGzfy2P2/YeIFF3Cnv4DXN+74EQAeL2FTye9UwqnaoNnCvS1S+/lOwtbVNWEYBlZ7DDGxsVjssdTVNVFdWcn+3bspOuOMbne2wr0tjsqOLWQbmgX7P2ewJbzOaXXmd8U7QJNSajHwJ+AupdRPlVJX9GxonVfrrmFr7UYS7UkMiBtkdjghsX75ch78yU8CJ1Hzhw3jxh/+kOtvu83kyERvYrFY+MEvfsFvnnoqkNjmffABv/vxj1kwp8P+XtRIsLsYFJ9JRUsZexp2mh3OcTrsyWutvcDtJ2zecpLHPRCkmE7b2qrlePFS4CoKq2/QYHO73YHL1g+VlLBx9WqKV65k0rRpWCwWZp5Q7EuIUGlbt3/E2LEc2rePcf4xe6/Xy5svvMC5F1/MoKwss0LscQWuYZQ07mF11VKyE/I6fkKIRPzVDk2eRjbUrCXOGs+Q+Gyzw+kxz//xj2xZt47fv/giVpuN86ZPZ+RZZx13RaMQ4SB/2LDj6v8Xr1jBf156icrycm67914TI+tZKTGp9IsdwP6mvRxq2k9GmIwqhNdp4C4orlmN22gl3zUUqyU0qwCFgtfrpc6/dB2Ax+PBaxgcKS8HfFUdJcGLSDBi7Fh+8pvfBC6wAnjtb39j/ocfHrdWbzQ4um7FqqqlJkdyTET35N3eVtZVrSTG4iDbmW92OEFTU1XFb374QwZmZfHTBx8E4Js/+hGxcXFYrRH/vSx6GZvdzvgpUwK362pqmP3vfzMwK4vJM2eaF1gPSHf0IzWmDzvrt1LZUhEW1+tEdMbYXFtMo7eBnIQCYqwxZofTLY0NDTT6V0hKTE4mMSWFhMREvF4v4Ou5S4IX0cCVlMSjr77K9+6+O3AObc6bb/LKk08e9+s1ElksFgr81+msrlpmcjQ+EZs1vIaXNVXLsGIlL6HQ7HC6ZeuGDdz5ta/x0euvA743yq8ee4zb7r1XEruISmn9+pHrv7LWMAy+mD2bz95/H2sUTJwYGDcIly2RLbUbwmJRkYjNIDvrt1HtriLTmRORS/vVVlcHCkNl5uaSlJqK03WsLHLb2QpCRDOLxcKvn3qKnz/6aOAzsG75cp5+6CFK26xAFiksFiv5rqF48YRFGeKITfJr/D+F8hOGmhzJ6Vv62Wfcee21LPvcV/8jzunkkZdeYsbXvmZyZEKYI8bhIK+oKHB78Sef8MWcOdTXmt8T7opMZw4OayzFNatp8baYGktEJvkDjSUcavZNUUqMkHLCrS3HXugheXkkuFy422yTYRkhjrntvvv437/+lVzlG4otLy3lmf/7v+PWFg5nNoud3IRCmr1Nphcui8jMsqba14svSCjq4JHhYd4HH3DHNddwcN8+AAZlZfHY668zadrFJkcmRHiyWq0MHTUqcHv+hx8y/6OP2LJunYlRnZ5cZwFWbKypWo7X8JoWR8Ql+cqWCnbWbyU1Jo00R1+zw+mU+ATfQuKHSkoC22TVHSE67+pvf5uf/Pa3nHuxr2Pkbm3lnX/8g7qaGpMjO7VYWxxZzhxq3dXsqNemxRFxSX5t9QqAsC5hsPjTT7n/+9+nuclXWGn85Mk89vrrjDn7bJMjEyIyWW02xk+eHOgcfTFnDv9+/nne/ec/TY6sffku3znD1VVLAxMtQi2iknyjp4HNtcU4bS4Gxg02O5xT2rtzJ7u3bWP7xo2A76dnvDP45ZWF6K0mTZvGjT/8IZfdcENgW/GKFWF3Ba3LnsSAuMEcbj7EgaaSjp/QAyIqyW+oWYPHcJOfoLCEUWH+vTt28NozzwS+qa/4xjeY9corDB871uTIhIhOjthYZl57LcmpqQBsWbeOh3/2M5575BGTI/uyozMA11YvN+X44ZMpO+Ax3KyrXkWMJYZMZ67Z4RznX08/zXsvv8w2f8/dmZBAev/+JkclRO/Rb+BAJs+cyQWXXx7Y1lAXHtMv0xx9A6UOqlsrQ378iEnyW2s30eipJ9uZb3oJg9aWFjavXRu4feMdd3DPI49QOGKEiVEJ0Xv16duX2+69l4LhvpICVRUV3Hnttbzx3HMmR+a72Ovo2PzRc4qhFBFJ3jAM1lQvx4KFXJf5JQx+/z//w8M/+1lgzu6g7GxGT5hgclRCiKMqy8tJTk0lrW94zMAbGJdJvNXJppr1NHkaQ3rsiEjyJY17qGgpY1B8Jk5bgikxuFtbA/++6KqruPiqq0hITGznGUIIs+Qoxe9ffJELLrsMAI/bzeMPPHDcL/BQslqs5LoKcRutbKwJ7Vz/iEjya/wnLPISlCnHf/OFF7j35ptpafatHTvxggv45o9+JEleiDBmj4kJ1IDavG4dy+bNY8HHH5sWT7YzH5vFzvrqlXiM0M0CCvskf6Slgj0NO0hz9KWPI92UGJobG2lpbo6YS6qFEMcbMXYsv3n66ePWQF63bBketztkMTisDrKdudR5atlR96UVVHtM2Cf59f4TFaHsxR8+cID3Xn45cPua73yHP7z0EoNzckIWgxAiuPKKikhKSQFAFxfz+//5H55++OGQxpDrz2Nrq1eG7JgdXluvlLICTwKjgWbgFq319jb33wUcXdfrI631r4MVXJOnkc21G3DaEhgQwoufnp81i+IVKygcOZKho0cTFx95pYyFEKc2YMgQpsycyRT/mD34ltzs6UKBLnsiGXGDONS0P2TrwHbmL7oKiNNanw3cC8w6eodSKhf4BnAOcDYwTSk16qR76YJNNetwG63kJhRi7eGLn2qqqgL//uYPf8gdv/oValTQ/hQhRBhJSknh1nvvDUx7rq6s5J6bbmLFF1/0+LHzA7350Eyn7EzmnATMAdBaLwXGtblvHzBda+3RWnuBGKApGIF5DS/ra1Zhs9jIcuYFY5en9O/nn+cn110XGHMfnJPDuRdfHLa1cYQQwbV940YOHzjAkbKyHj9WuqM/SfZkttdtoc7d8wXWOlMKMQmobnPbo5Sya63dWutWoFwpZQEeAdZorbd2tMOUlI7ruGyu3Eitu4bC5KH0SUrqRJhdl52fS3r//hjeVlyuuB491olCfbxwJm1xjLTFMaFoi8nTL0KNLKJvRgY2mw2Px8OSuZ9xzkVTe2QIZ5h3JEtLF7K1uZgL03u25HhnknwN0HauoFVrHTglrZSKA14AaoEfdOagVVUNHT5m0YGFAGTG5lNXF5QfBwG11dV8+NprXHPzzcQ4HIw7fwpjzpmEPSYm6Mdqj8sVF9LjhTNpi2OkLY4JZVu4ktNobGwFWvnv22/z4p//zDU3b+eam28O+rH6WQfisMay6vAKRsaP79Gr+DvzFbUImAmglJoIFB+9w9+DfxdYp7W+TWsdlMmfh5sPcbCphP6xA0i0B78X/97LL/PeK68w/8MPAd9lx/YYc0slCCHCx/jJkzl/xgwuuvLKwLZglgq2WexkO/Np8jai6zYGbb8n05me/DvAxUqpxYAFuFkp9VNgO2ADJgOxSqkZ/sffp7Ve0p2g1vmnFwVz2mRTY2NglszVN99MekYGF7YpZiSEEEelpKVx+333BW5v37SJV598klvuvpuBmZlBOUZuQgHb6jaxrmolwxNH99g5wA6TvP+E6u0nbG47kz+oA2YN7nq21m7CZUukX+yAoOyzeOVKnvzd7/j+z3/OqPHjiXc6ueSaa4KybyFE9Fu5cCFb1q+nqqIiaEk+3uZkYNwQ9jftZX/TXgbHZwVlvycKu4uhNtauxYuH3ITCoH2zJSYl0dLURMXhw0HZnxCid7n+1lt56PnnGTZmDOArYxyMK+CPjlasr17V7X2dSlgleY/hobh6DXaLvds14zeuWkVleTkA2YWFPPbvfweKFQkhxOnKLigI/Pulxx/n3ptvZueW7pUn6ONIJzkmlZ31W6ltre74CV0QVkl+Z/1W6j21ZDpzu3W2ecu6dTx411288OijgW0uKSYmhAiSEePGUTB8OJn5+d3aj8ViIS+hEAOD4po1QYrueGGV5I/+ZMlN6F7N+MKRI5l65ZVc9c1vBiMsIYQ4zqRp07h31izs/oXFl86bx+rFi7u0r8HxWTissWysWYvb29rxE05T2CT5suZSDjTto19sxmlPm/R6vXz0xht8/NZbgG/h7O/+7GfkFRX1RKhCCBE4Z9jS3MyLf/oTT/zmN9RWn/6Qi286ZR5N3ka21m0OdpidmkIZEsd68ac/bbK+tpb3XnmFmJgYLrjsMhyxscEOTwghTsoRG8vP//Qnyg4eJDE5GTj9Ymc5zgK21m1mffVKihJHBnU6ZVgk+SZPI1vrNpJgc5FxGtMmm5uaiI2LIzE5mf/38MP0zciQBC+ECLnMvDwy83w1ttxuNw/ddRcTpkxh2tVXdyphO+0JDIwbzIGmfRxq3h/UqrthMVyzubYYt+EmJ6EASyeqTRqGwStPPskvbrmFpkbfeon5w4aR3KdPT4cqhBDtOlRSwoE9e9ix+fSGXnITfLN3iqtXBzUe03vyhmFQXL0KK7ZOT5u0WCwYXi8GUH3kCHGDer4msxBCdMbg7GwefuEF4pzOQC++vLSU9P79231euqM/LnsS2+q2MCltKk57cNazNr0nv7dxF9XuKgbHZxFrbX+oZafWgX9ff9ttPPjMM/SXBC+ECDOp6enEO33VdjesWsVd11/PJ//5T7vPsVgs5DoL8OJhU23wFvs2PckXB064FrT7uPf/9S9++b3vsXjuXMC3SG+cs+OSxUIIYSZHbCxp/fuTU9jx1PBMZw42i50NNWvwGt6gHN/UJF/TWsWuhu2kxqSR6khr97Fjzz2XwhEjyOrmxQdCCBFKhSNG8MeXXyZ/2DDAV+pcFxef9LExVgeZ8dnUumvY3bD9pI85XaYm+Q01awHIOUUvftXChZSXlgIwMDOT+594gkFZPVPERwghesrRi6YAnvn97/ntnXey9RSJPsd/MWiwTsCaluTdXjcba9bisMaetPrato0bmfXzn/P0Qw8FtslyfEKISHfpddcxado08ocPP+n9yTEppDn6srdxF5UtFd0+nmmza7bXb6HJ20hBQhE2i+1L9+cPG8YVN97IOVOnmhCdEEL0jKGjRzN09OjA7SVz5zIoOzswzx58pV0qWsrYULOG89Iv6tbxTOvJb/AX42k7VLN1w4ZAaQKLxcL1t9563B8uhBDRpLK8nKcffphH7rkHd+uxujUD4wYTa43zXUPUzXo2pvTky5sPB5b3S7C7APC43Tz14IOUl5YydtKkDueUCiFEpEtNT+dH99+PIzb2uCVIrRYbWc48ttZtZFvdZoqSRnX5GKYk+aO9+GznsV68zW7nR/ffT2N9vSR4IUSvMe688wL/bm5q4uW//pWvfuc7ZCf5knxxzZpuJfmQD9e0eJrZUruBeKsTR42dx+6/P1C5LXfoUIaPHRvqkIQQIizM//BD5r73Hh+98QYJdhf9YwdS2nyAsuZDXd5nhz15pZQVeBIYDTQDt2itt7e5/3vAbYAb+J3W+oP29rfhyHpajRbyXYrF737KsnnzKBg+nJnXXtvlP0IIIaLBtKuvJs7pDEw4yUkooLT5AMU1a7ia8V3aZ2eGa64C4rTWZyulJgKzgCsBlFIZwJ3AOHwLei9USn2itW4+1c5Wli3HgoUsZx6FNwxnUHY2Z557bpeCF0KIaGKxWJg8Y0bg9r4VO/AO8KAtG7u8z84M10wC5gBorZfiS+hHjQcWaa2btdbVwHag3cGj0sZD2CqtxNucWK1Wxk6aJPPfhRDiBIZh8P7Lr6Bnr8NtdH2GTWd68klA2+VOPEopu9bafZL7aoHkjna46d1VXFX0dWx204tgmirW6qDFGpz6FJFO2uIYaYtjentb3P/nv7Jt+0Y0XV8xqjNZtgZouwq21Z/gT3ZfIlDV3s7euO4pC9edVoxCCNF7TbuwW0/vzHDNImAmgH9Mvm3BheXAeUqpOKVUMlAEbOhWREIIIYLGYhhGuw9oM7tmFGABbsaX9Ldrrd/zz665Fd8XxkNa67d6NmQhhBCd1WGSF0IIEblMXzRECCFEz5EkL4QQUUySvBBCRLEem6ge7HIIkawTbXEXcL3/5kda61+HPsrQ6Kgt2jzmQ+BdrfXToY8yNDrxvpgB3O+/uRq4Q2sddSfROtEO/w/4OuDFN7njHVMCDSGl1ATg91rrKSdsvxz4X3x58wWt9bMd7asne/KBcgjAvfjKIQDHlUM4F7gEeFgpFduDsZitvbbIBb4BnAOcDUxTSnW95Fz4O2VbtPE7oE9IozJHe++LROAR4DKt9URgN5BuRpAh0F47pODLFWcD04A/mxJhCCml7gaew1cqpu32GOBP+NphMnCrP5e2qyeTfFDLIUS49tpiHzBda+3RWnuBGKAp9CGGTHttgVLqq/h6bLNDH1rItdcW5+C7JmWWUmoBUKq1Lgt9iCHRXjvUA3uABP9/veHy1x3A1SfZXoRv6nql1roFWAicd5LHHacnk/xJyyGc4r5OlUOIYKdsC611q9a6XCllUUr9EVijtd5qSpShccq2UEqNAG7A93O0N2jvM5IOXADcA8wAfqKUKgxxfKHSXjuAryO0Cd+Q1eOhDMwM/muNTlaspkt5syeTfFDLIUS49toCpVQc8Ir/MT8IcWyh1l5bfAsYBHwG3AT8VCk1PbThhVR7bVEBrNBaH9Ja1wFfAGeEOsAQaa8dZgADgBwgE7hKKdW1mruRr0t5syeTvJRDOOaUbaGUsgDvAuu01rdprT3mhBgyp2wLrfXdWusJ/pNNLwKPaq3nmBFkiLT3GVkFjFBKpft7tZKHvukAAAQeSURBVBPx9WajUXvtUAk0As1a6yZ8SS0l5BGGh81AgVKqj1LKAZwPLOnoST1ZBvId4GKl1GL85RCUUj/lWDmEx4EF+L5ofuF/AaPVKdsCsOE7iRLrn00BcJ/WusMXL0K1+74wN7SQ6+gzch/wsf+xb2ito7Uj1FE7XAQsVUp58Y1Df2JirCGnlLoBcGmtn/G3y8f48uYLWuv9HT1fyhoIIUQUk4uhhBAiikmSF0KIKCZJXgghopgkeSGEiGKS5IUQIopJkhdCiCgmSV4IIaKYJHkhhIhiPXnFqxDtUkplA1vxXa5vAA7gAHCz1rqkB473AjAF3xXW/+riPpKBF7XWX1FKjQNu11rfEqT4bsVXuygFeElr3VsKtYkeJElemO2A1jpQeEspNQtfHfWv98CxbsJXt7ylG/tIBcYAaK1XAsFK8F/FV3XyLHxfdtuVUk9prQ8GY/+i95IkL8LNPHyLyNiBp4ARQH9gPb7En4avYufR2uJ3AiUnbvPXJQ9QSr2Hry7KcqXUQ8D/w1c3aAPw3VMcqwn4P+Ar+Fbi+RswFRiolHoHeAx44OjqPUqpnwM3Ah7gv8Dd+Op9/xxowFeIrxi4oe0XjX9lpIeAiVrrVqD1/7d3PyEyh3Ecx9+bJHLj5CAKX5HaxV42F3/2QJqS0abUWifFVSk3R1dOlM3JQexlj9qDwoG0hfic1JKD4khc1uH7DLPjt2a3kZ1+fV6XmZ7fr3lmDvOdZ75PPZ+I+ADsBFzkrSfuyVvfKMk3TfJkvRHgR0kL2ka2MI6RBXla0n7y3PkDi4wtIKlRHgeBT8AO4JCk8b/M1STTy/aQQTcTZDH+KOlEx3s/CjTIwIuh8jrny+UR4CJZ5DeTaWjtRsgfl5mImI2IWfJY4bqGhNh/5JW8rbRNpagBrCGPob4s6UtEfI6IC+SKdjuwHngIPIiIITIH9gawr2KsG5VUMiQ9WmSuYfL0x+9k9uhg2Ueochi4K+kr/Or/jwOvgVetPYaIeMOf0YbDwE1Jl8o9u4En5H6FWU9c5G2lLejJt0REA7hKtkQmyaSkAUmPI2IXcBwYA85KGu0cA0a7zPut21xkOs98231byJZQlc5/xQP8/n61H6M9X66120i2c1pOkSHmvewdmAFu11j/OkKuoifJoIiDwKqIuAackXSHbIHsrRr7F3ORaUwnI2J1RKwjc0g3UL04mgFOR8Tasp8wQe4vLMVbSouprOLPAVeW+RnMKrnIW7+6RRbNl8A9Mj1oK3AdaJYWzxQZGVg11vNckqbK8xfAM3Kl/xSYi4gFBVzSNDANPCdbNHPlfS3FfXKz9R1wGxiT9H6Zn8GskkNDzMxqzCt5M7Mac5E3M6sxF3kzsxpzkTczqzEXeTOzGnORNzOrMRd5M7Mac5E3M6uxn6DQEwQAy2hDAAAAAElFTkSuQmCC\n",

"text/plain": [

""

]

},

"metadata": {},

"output_type": "display_data"

}

],

"source": [

"binomial_learn(1, 1, 2, 1)"

]

},

{

"cell_type": "markdown",

"metadata": {

"solution2": "hidden"

},

"source": [

" - The posterior peaks at the mean observed pass rate, 1/2, which is reasonable. It is very broad because we have only made two observations.\n",

" - Values of 0 and 1 are absolutely ruled out, which makes sense since we have already observed 1 pass and 1 no pass.\n",

" - The prior and posterior are probability densities normalized over $\\theta$, so their area in the plot is 1. The likelihood is normalized over all possible data, so does not have area of 1 in this plot."

]

},

{

"cell_type": "code",

"execution_count": 13,

"metadata": {

"solution2": "hidden"

},

"outputs": [

{

"data": {