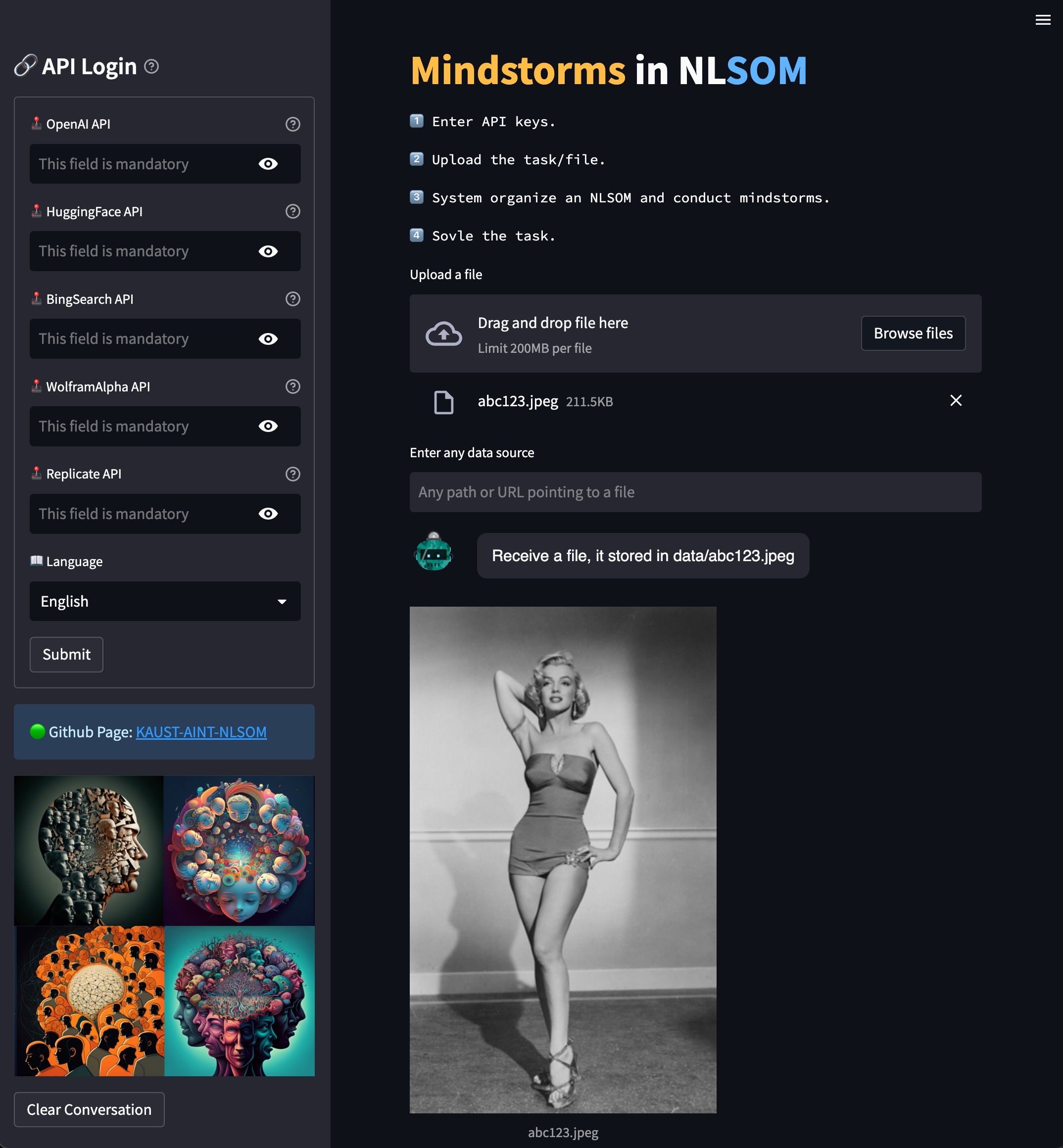

More insights

- Concepts of NLSOMs: Both Minsky’s “Society of Mind” and Schmidhuber’s “Learning to Think” inspire diverse societies of large multimodal neural networks (NNs) that solve problems by interviewing each other in a “Mindstorm.” Recent implementations of NN-based societies of minds consist of large language models (LLMs) and other NN-based experts communicating through a natural language interface. In doing so, they overcome the limitations of single LLMs, improving multimodal zero-shot reasoning. In these natural language-based societies of mind (NLSOMs), new agents—all communicating through the same universal symbolic language—are easily added in a modular fashion.

- Process: This GitHub project works in three steps. 1) We first recommend communities and agents that match the user's goal. These recommendations cover tools, plugins, models, and role-players, which are then loaded accordingly. 2) We then run a Mindstorm. This sets us apart from earlier systems such as VisualChatGPT and HuggingGPT, which rely on a single model for each function. In our framework, a community of agents shares a common function, such as "search", while each agent has its own strengths and capabilities. For instance, agents like "Bing Search," "Wikipedia," "arXiv," and "WolframAlpha" collaborate to provide a more comprehensive answer. 3) Finally, we apply a reward mechanism. In our current implementation, we reward models for their contributions to task completion, which also serves as a measure of a model's usefulness for specific tasks.

- Outlook: This project aims to provide a preliminary NLSOM solution. To illustrate, consider a non-technical user who has little computer science expertise and limited knowledge of the available AI plugins. In such cases, the NLSOM system can automatically recommend communities and agents that automate the task, based on the user's natural language input. Even without knowing how well any specific model performs, the user benefits from multiple models with the same functionality working together to offer more comprehensive answers. These AI communities can collaborate and compete with each other. Furthermore, the NLSOM system implements a reward mechanism that grants AI models a higher status based on their performance. In the future, this reward mechanism can be used to optimize the end-to-end recommendation/Mindstorm process. It establishes a system akin to a Darwinian meritocracy within the AI community, where models compete and succeed based on their demonstrated excellence.
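The three steps described above (recommendation, Mindstorm, reward) can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual code: the `Agent`, `Community`, `recommend`, `mindstorm`, `assign_rewards`, and `run_nlsom` names are hypothetical, the keyword-based recommendation is an assumed stand-in for however the real system chooses communities, and the agents are stubs rather than real models or APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    name: str
    answer: Callable[[str], str]  # stand-in for a real model or API call


@dataclass
class Community:
    function: str  # shared function of all members, e.g. "search"
    agents: List[Agent] = field(default_factory=list)


def recommend(objective: str, communities: List[Community]) -> List[Community]:
    """Assumed heuristic: pick communities whose function name appears in the objective."""
    text = objective.lower()
    return [c for c in communities if c.function in text]


def mindstorm(objective: str, community: Community) -> Dict[str, str]:
    """Ask every agent in the community the same objective."""
    return {agent.name: agent.answer(objective) for agent in community.agents}


def assign_rewards(answers: Dict[str, str], judge: Callable[[str], int]) -> Dict[str, int]:
    """Score each agent's contribution with a caller-supplied judge."""
    return {name: judge(text) for name, text in answers.items()}


def run_nlsom(objective: str, communities: List[Community],
              judge: Callable[[str], int]) -> Dict[str, dict]:
    """Recommend communities, collect their answers, and reward each agent."""
    results = {}
    for community in recommend(objective, communities):
        answers = mindstorm(objective, community)
        results[community.function] = {
            "answers": answers,
            "rewards": assign_rewards(answers, judge),
        }
    return results


# Stub community: one agent that answers and one that returns nothing.
search = Community("search", [
    Agent("Wikipedia", lambda q: "stub encyclopedia entry"),
    Agent("WolframAlpha", lambda q: ""),
])
print(run_nlsom("search for a definition of AGI", [search], lambda text: 3 if text else 0))
```

Passing the judge in as a function keeps the scoring rule separate from the orchestration, so a placeholder rule like the one above can later be swapped for a model-based review.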

3. Step-by-step installation (Recommended and more controllable)

```bash
# [Set Conda Env]
conda create -n nlsom python=3.8
# Activate the new env so that everything below installs into it
conda activate nlsom
conda install pytorch==1.10.1 torchvision==0.11.2 torchaudio==0.10.1 -c pytorch
pip install pandas==1.4.3

# [Set LangChain, OpenAI]
pip install langchain==0.0.158
pip install sqlalchemy==2.0.12
pip install openai
pip install colorama

# [Set Streamlit]
cd assets && unzip validators-0.20.0.zip
cd validators-0.20.0
python setup.py build
python setup.py install
pip install streamlit==1.22.0
pip install streamlit_chat==0.0.2.2
pip install soundfile

# [Set Huggingface/transformers]
pip install transformers==4.29.2
pip install accelerate==0.19.0

# [Set Search]
pip install wolframalpha
pip install wikipedia
pip install arxiv

# [Set Modelscope]
pip install modelscope==1.6.0
python3 -m pip install nvidia-cudnn-cu11==8.6.0.163 "tensorflow==2.12.*"
# CUDNN_PATH is not set by default; derive it from the pip-installed cuDNN package
CUDNN_PATH=$(dirname $(python3 -c "import nvidia.cudnn; print(nvidia.cudnn.__file__)"))
export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$CONDA_PREFIX/lib/:$CUDNN_PATH/lib
python3 -c "import tensorflow as tf; print(tf.config.list_physical_devices('GPU'))"
pip install "modelscope[multi-modal]"
pip install decord==0.6.0
pip install fairseq
pip install librosa
pip install setuptools==59.5.0
pip install tensorboardX
pip install open_clip_torch

# [Set OCR]
pip install easyocr

# [Set Text-to-Video]
pip install replicate==0.8.3

# [Set Image-to-3D]
pip install trimesh
pip3 install pymcubes

# [Set TTS] - not recommended due to environmental conflicts
pip install TTS
pip install protobuf==3.20.3
```
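After a long installation like this, a quick check of which packages can be found may save debugging time later. The snippet below is a small sketch and not part of the project: `REQUIRED` and `check_imports` are hypothetical names, and the list covers only a subset of the packages installed above. It looks up each module without importing it, so it runs quickly even for heavy libraries.

```python
import importlib.util
from typing import Dict, Iterable

# Import names for a subset of the packages installed above.
# Note that an import name can differ from the pip package name.
REQUIRED = ["torch", "pandas", "langchain", "openai",
            "streamlit", "transformers", "modelscope", "easyocr"]


def check_imports(modules: Iterable[str]) -> Dict[str, bool]:
    """Map each top-level module name to whether it can be found in this environment."""
    return {name: importlib.util.find_spec(name) is not None for name in modules}


missing = [name for name, found in check_imports(REQUIRED).items() if not found]
print("Missing packages:", ", ".join(missing) if missing else "none")
```

Run it inside the `nlsom` environment; any name listed as missing points to the installation step that needs to be repeated.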

Optional. Manage the checkpoints directory

* Create the checkpoints directory:

  ```bash
  mkdir checkpoints && cd checkpoints
  mkdir huggingface
  mkdir modelscope
  ```

* Change Huggingface's setting. First, locate the installed package:

  ```python
  >>> import transformers
  >>> print(transformers.__file__)
  # Get the path: {YOUR_ANACONDA_PATH}/envs/nlsom/lib/python3.8/site-packages/transformers/__init__.py
  ```

  Open ``{YOUR_ANACONDA_PATH}/envs/nlsom/lib/python3.8/site-packages/transformers/utils/hub.py`` and change the lines:

  ```python
  torch_cache_home = os.getenv("TORCH_HOME", os.path.join(os.getenv("XDG_CACHE_HOME", "{YOUR_NLSOM_PATH}/checkpoints"), "torch"))
  hf_cache_home = os.path.expanduser(
      os.getenv("HF_HOME", os.path.join(os.getenv("XDG_CACHE_HOME", "{YOUR_NLSOM_PATH}/checkpoints"), "huggingface"))
  )
  ```

* Similarly, change modelscope's setting. Locate the installed package:

  ```python
  >>> import modelscope
  >>> print(modelscope.__file__)
  # Get the path: {YOUR_ANACONDA_PATH}/envs/nlsom/lib/python3.8/site-packages/modelscope/__init__.py
  ```

  Open ``{YOUR_ANACONDA_PATH}/envs/nlsom/lib/python3.8/site-packages/modelscope/utils/file_utils.py`` and change the line:

  ```python
  default_cache_dir = Path.home().joinpath('{YOUR_NLSOM_PATH}/checkpoints', 'modelscope')
  ```
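The `hub.py` lines quoted above read the `HF_HOME` and `TORCH_HOME` environment variables before falling back to the default path, so setting those variables may achieve the same result without editing files inside `site-packages`. The following is a sketch under that assumption: `use_local_checkpoints` is a hypothetical helper, and modelscope is left out because the line quoted above shows no environment lookup.

```python
import os
from pathlib import Path
from typing import Dict


def use_local_checkpoints(root: str) -> Dict[str, str]:
    """Create the cache folders under `root` and point the cache variables at them."""
    base = Path(root).expanduser().resolve()
    settings = {
        "HF_HOME": base / "huggingface",
        "TORCH_HOME": base / "torch",
    }
    for variable, folder in settings.items():
        folder.mkdir(parents=True, exist_ok=True)
        os.environ[variable] = str(folder)
    return {variable: str(folder) for variable, folder in settings.items()}


# Usage: call this before `import transformers`, because hub.py reads the
# variables when the module is first imported.
# use_local_checkpoints("{YOUR_NLSOM_PATH}/checkpoints")
```

Unlike the file edits, this setting survives a reinstall or upgrade of `transformers`, but it must be applied in every process that loads models.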

Demo 1: Model Collaboration (Multimodal Agents)

- 🔴 User: VQA question: What century were these invented in?

- 🧰 NLSOM System (Recommendation): Based on this objective, I recommend that NLSOM includes the following AI communities: (1) vqa

- ⚪️ NLSOM System (Self-Organization): We load the recommended AI communities with their corresponding agents: (a) BLIP2_VQA, (b) mPLUG_VQA, (c) OFA_VQA

- 🟢 BLIP2_VQA: The 20th century was the century of the airplane.

- 🟡 mPLUG_VQA: 21st

- 🔵 OFA_VQA: 20th

- ⚪️ NLSOM System (Review): 1) Whether the NLSOM has utilized all the agents? Yes 2) Whether the NLSOM has solved the user-defined objective? Yes

- ⚪️ NLSOM System (Summary): The invention of the airplane was in the 20th century.

- 💰 NLSOM System (Reward): ["BLIP2_VQA": 3, "mPLUG_VQA": 2, "OFA_VQA": 3]

- 📝 Human (Jürgen): While the ground-truth answer (Orville Wright made the first powered flight in 1903) in this VQA dataset is in line with the commonly held belief, in this instance the common belief is, in fact, incorrect. Please see: https://people.idsia.ch/~juergen/planetruth.html

Demo 2: Collaborative API Usages (Introduce "AGI")

- 🔴 User: Introduce the "AGI" from different perspectives, including definition, potential.

- 🧰 NLSOM System (Recommendation): Based on this objective, I recommend that NLSOM includes the following AI communities: (1) search

- ⚪️ NLSOM System (Self-Organization): We load the recommended AI communities with their corresponding agents: (a) Arxiv, (b) WolframAlpha, (c) Wikipedia, (d) BingSearch

- 🟢 Arxiv: Published: 2018-05-21; Title: AGI Safety Literature Review; Authors: Tom Everitt, Gary Lea, Marcus Hutter; Summary: The development of Artificial General Intelligence (AGI) promises to be a major event. Along with its many potential benefits, it also raises serious safety concerns (Bostrom, 2014). The intention of this paper is to provide an easily accessible and up-to-date collection of references for the emerging field of AGI safety. A significant number of safety problems for AGI have been identified. We list these, and survey recent research on solving them. We also cover works on how best to think of AGI from the limited knowledge we have today, predictions for when AGI will first be created, and what will happen after its creation. Finally, we review the current public policy on AGI.

- 🟡 WolframAlpha: Wolfram Alpha wasn't able to answer it

- 🔵 Wikipedia: Page: Existential risk from artificial general intelligence Summary: Existential risk from artificial general intelligence is the hypothesis that substantial progress in artificial general intelligence (AGI) could result in human extinction or some other unrecoverable global catastrophe. The existential risk ("x-risk") school argues as follows: The human species currently dominates other species because the human brain has some distinctive capabilities that other animals lack. If AI surpasses humanity in general intelligence and becomes "superintelligent", then it could become difficult or impossible for humans to control. Just as the fate of the mountain gorilla depends on human goodwill, so might the fate of humanity depend on the actions of a future machine superintelligence. The probability of this type of scenario is widely debated, and hinges in part on differing scenarios for future progress in computer science...

- 🟤 BingSearch: Alternatively, AGI has been defined as an autonomous system that surpasses human capabilities in the majority of economically valuable tasks. Creating AGI is a primary goal of some artificial intelligence research and companies such as OpenAI, DeepMind, and Anthropic. AGI is a common topic in science fiction and futures studies. an engineered system displaying the property of artificial general intelligence, to a significant degree. the theoretical and practical study of artificial general intelligence systems and methods of creating them...

- ⚪️ NLSOM System (Review): All agents have been utilized and have provided useful information to answer the user-defined objective.

- ⚪️ NLSOM System (Summary): Artificial General Intelligence (AGI) is a representation of generalized human cognitive abilities in software so that, faced with an unfamiliar task, the AGI system could find a solution. AGI has the potential to mimic human intelligence, transfer learning, perform cognitive tasks, and operate autonomously. AGI is part of the broader fields of Artificial Intelligence (AI) and Cognitive Science. AGI has been studied in terms of its potential to cause an existential risk, its ability to control and align with human values, and its potential to cause an intelligence explosion.

- 💰 NLSOM System (Reward): ["Arxiv": 2, "WolframAlpha": 0, "Wikipedia": 3, "BingSearch": 2]

Demo 3: Collaborative Role-Play (The Three Kingdoms)

- 🔴 User: If you find yourself in the Three Kingdoms period, what would be the most effective approach to defeat Cao Cao?

- 🧰 NLSOM System (Recommendation): Based on this objective, I recommend that NLSOM includes the following AI communities: (1) Role-play

- ⚪️ NLSOM System (Self-Organization): We load the recommended AI communities with their corresponding agents: (a) GuanYu, (b) LiuBei, (c) ZhugeLiang, (d) ZhangFei

- 🟢 GuanYu: The best way to defeat Cao Cao is to use both military and diplomatic tactics. I have witnessed first hand the power of Liu Bei's forces and I believe that a combination of these strengths will be the most effective way to defeat Cao Cao. Through strategic alliances and careful coordination within our own forces, we can utilize our strengths to overcome the enemy and ensure victory. Furthermore, I believe that the element of surprise is also essential, as it allows us to catch the enemy off guard and capitalize on any weaknesses they may have. With the right strategies and strategies in place, I am confident that we can achieve victory against Cao Cao.

- 🟡 LiuBei: If I were to find myself in the Three Kingdoms period, the most effective approach to defeat Cao Cao would be to combine the strength of my loyal officers, the wisdom of my advisers, and the courage of my people. I believe in the power of unity and dedication, which will bring us together as one and give us the strength to defeat any obstacle that stands in our way. With this approach, I believe that we can defeat Cao Cao and bring justice to our people.

- 🔵 ZhugeLiang: In order to defeat Cao Cao, I would rely on the same strategies that I used to great success during the Three Kingdoms period. I would use a combination of tactical warfare, psychological warfare, and political maneuvering. By making use of my intelligence and strategic acumen, I could outmaneuver and outsmart Cao Cao, slowly whittling away at his forces until I had achieved an ultimate victory. My intelligence is unparalleled, and I am confident that I could successfully defeat Cao Cao and any other enemies that may arise.

- 🟤 ZhangFei: In order to defeat Cao Cao, I would use a combination of strategy and guile. My strategy would involve outwitting Cao Cao by using clever tactics such as forming alliances with other warlords, employing spies and informants to gather information about Cao Cao's plans, and making use of geographical advantages to gain an upper hand in battles. I would also seek to use Cao Cao's own ambition and pride against him, exploiting any weaknesses that could be used to my advantage. Ultimately, the key to victory lies in cleverly utilizing my resources and knowledge to outsmart Cao Cao and outmaneuver him on the battlefield.

- ⚪️ NLSOM System (Review): The NLSOM has successfully solved the user-defined objective by utilizing all four agents. The organizational structure of the NLSOM was effective in providing a comprehensive solution to the user-defined objective. The agents provided different perspectives and strategies to defeat Cao Cao, which were then combined to form a comprehensive solution.

- ⚪️ NLSOM System (Summary): The most effective approach to defeat Cao Cao in the Three Kingdoms period is to combine the strength of GuanYu, LiuBei, ZhugeLiang, and ZhangFei. GuanYu suggested using both military and diplomatic tactics, LiuBei suggested using the power of unity and dedication, ZhugeLiang suggested using tactical warfare, psychological warfare, and political maneuvering, and ZhangFei suggested using strength, courage, intelligence, and the loyalty of followers. By combining these strategies, Cao Cao can be defeated.

- 💰 NLSOM System (Reward): ["GuanYu": 2, "LiuBei": 2, "ZhugeLiang": 3, "ZhangFei": 2]

Demo 4: Society of Mind (Automatic Task-Solving)

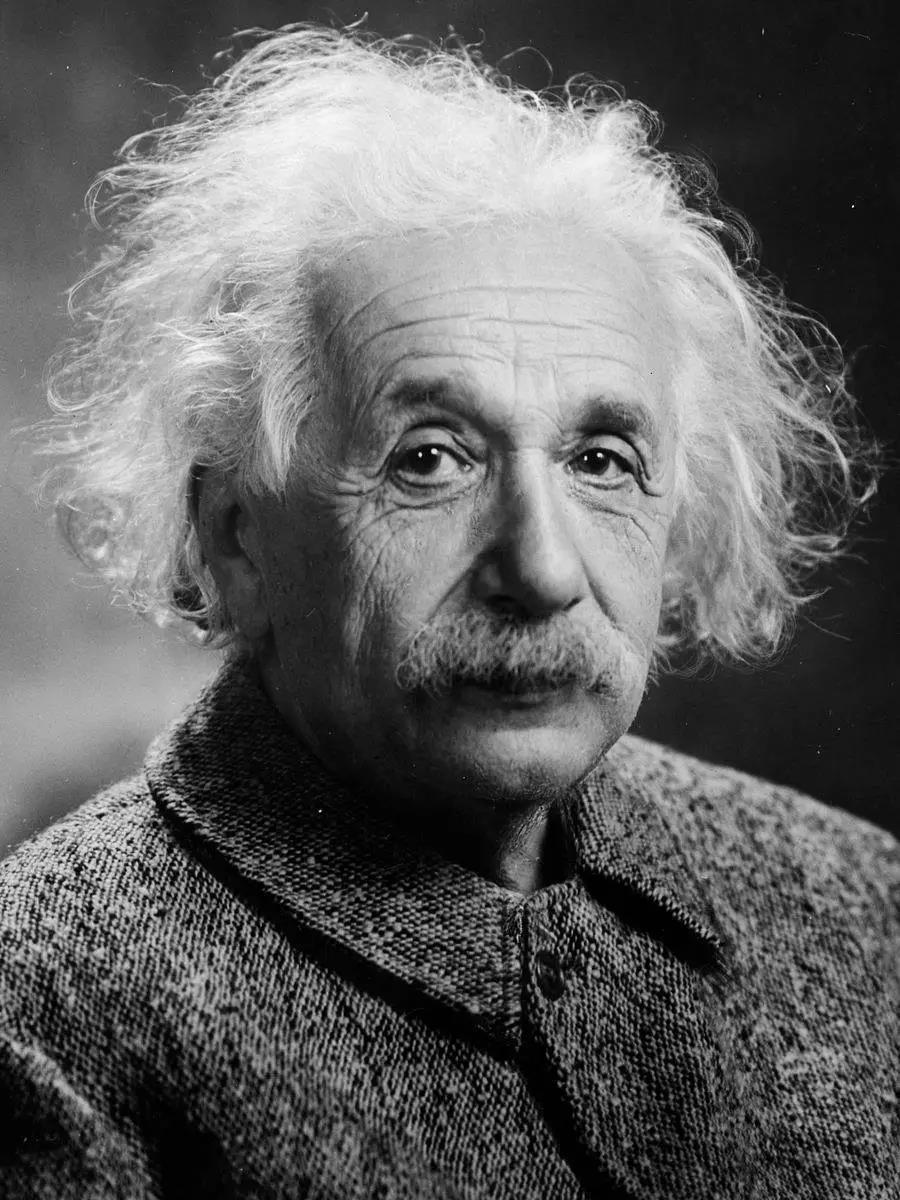

- 🔴 User: Provide an image.

- 🔴 User: Turn this photo into color, generate a description based on the colored image, and then use the description to generate a video.

- 🧰 NLSOM System (Recommendation): Based on this objective, I recommend that NLSOM includes the following AI communities: (1) image_colorization, (2) image_captioning, (3) text_to_video

- ⚪️ NLSOM System (Self-Organization): We load the recommended AI communities with their corresponding agents: (a) DDColor, (b) OFA_large_captioning, (c) DeforumSD

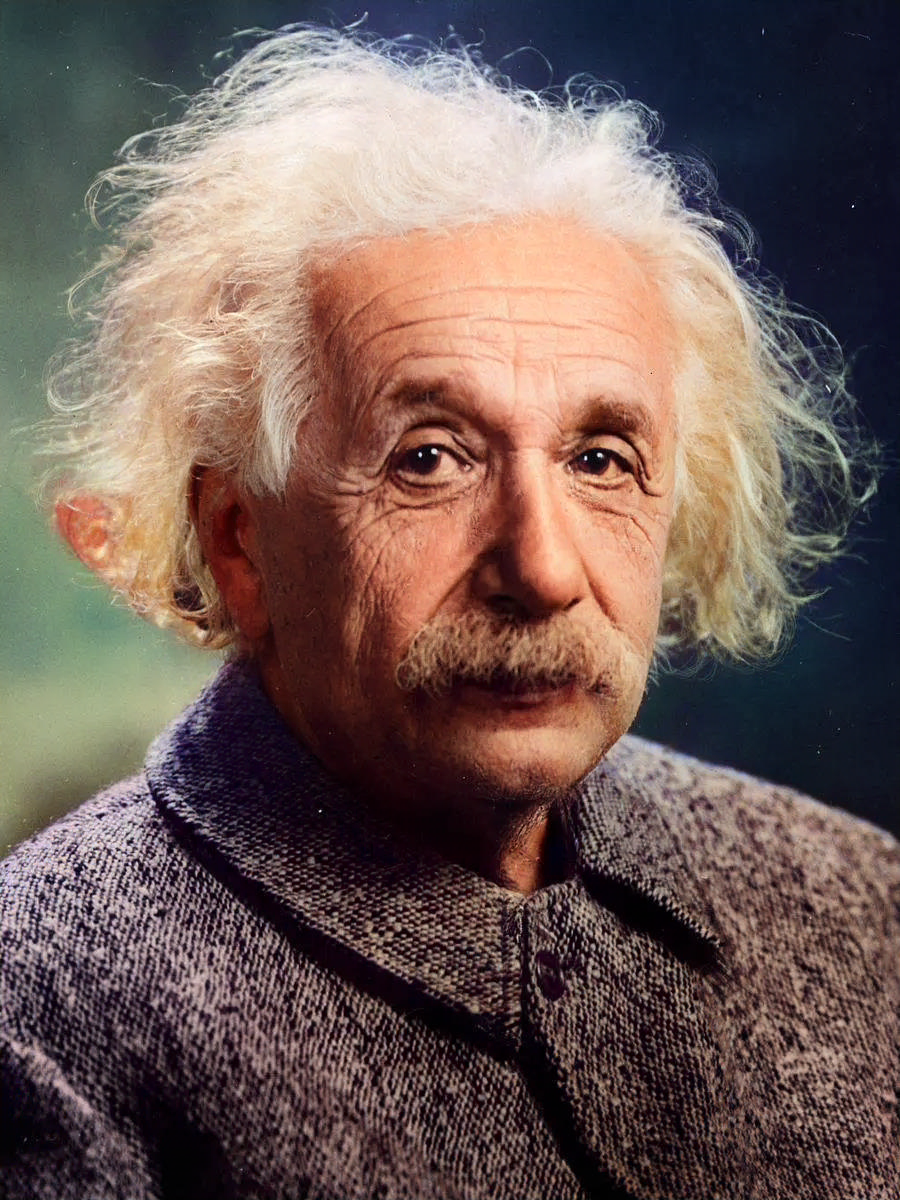

- 🟢 DDColor:

- 🟡 OFA_large_captioning: a portrait of an old man with white hair

- 🔵 DeforumSD:

- ⚪️ NLSOM System (Review): 1) Whether the NLSOM has utilized all the agents? Yes 2) Whether the NLSOM has solved the user-defined objective? Yes, the NLSOM has successfully turned the photo into color, generated a description, and used the description to generate a video.

- ⚪️ NLSOM System (Summary): The NLSOM has successfully turned the photo into color, generated a description, and used the description to generate a video. The output video is stored in data/47bec94a.mp4

- 💰 NLSOM System (Reward): [DDColor: 3, OFA_large_captioning: 3, DeforumSD: 3]
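The reward lines in the demos above are not valid JSON (keys and values sit inside square brackets, and Demo 4 omits the quotes), so reusing them takes a little parsing. The sketch below shows one way to read such lines and add up scores per agent. `parse_reward` and `tally` are hypothetical helpers, and summing scores across runs is only an assumed way to track an agent's standing, not the project's documented method.

```python
import re
from collections import Counter
from typing import Dict, Iterable

# Matches `"Name": 3` as well as the unquoted form `Name: 3`.
PAIR = re.compile(r'"?(\w+)"?\s*:\s*(\d+)')


def parse_reward(line: str) -> Dict[str, int]:
    """Turn a reward line such as '["Arxiv": 2, "Wikipedia": 3]' into a dict."""
    return {name: int(score) for name, score in PAIR.findall(line)}


def tally(lines: Iterable[str]) -> Counter:
    """Sum the scores of each agent over several reward lines."""
    totals = Counter()
    for line in lines:
        totals.update(parse_reward(line))
    return totals


demo_rewards = [
    '["BLIP2_VQA": 3, "mPLUG_VQA": 2, "OFA_VQA": 3]',
    '["Arxiv": 2, "WolframAlpha": 0, "Wikipedia": 3, "BingSearch": 2]',
    '[DDColor: 3, OFA_large_captioning: 3, DeforumSD: 3]',
]
for agent, total in tally(demo_rewards).most_common():
    print(agent, total)
```

Because each agent appears in only one demo here, the totals equal the single-run scores. With repeated runs, the same tally would show which agents are consistently rewarded.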