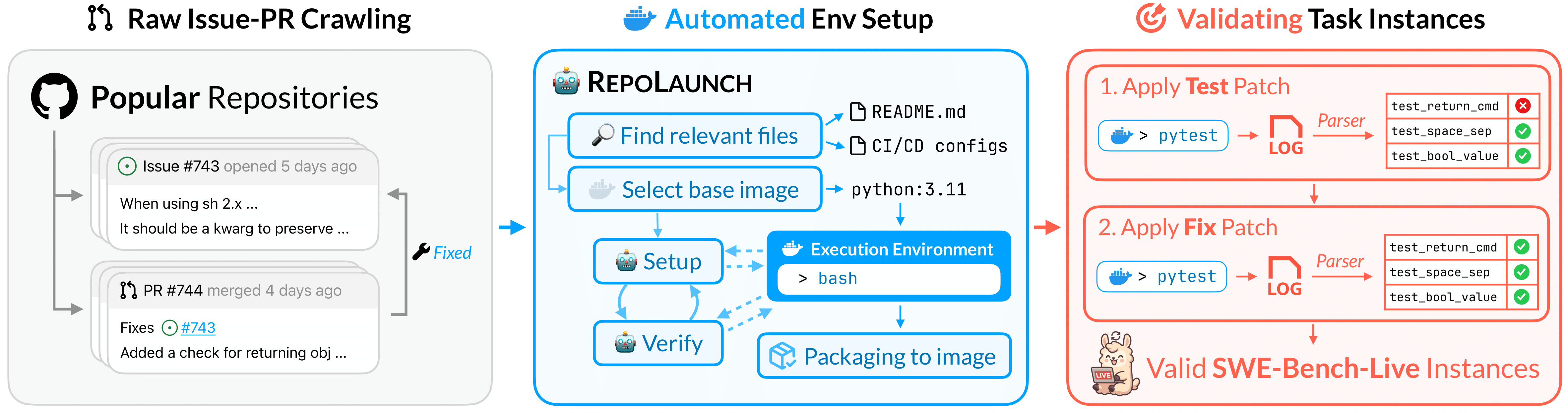

A brand-new, continuously updated SWE-bench-like dataset powered by an automated curation pipeline.

---

SWE-bench-Live is a live benchmark for issue resolving, designed to evaluate an AI system's ability to complete real-world software engineering tasks. Thanks to our automated dataset curation pipeline, we plan to update SWE-bench-Live on a monthly basis to provide the community with up-to-date task instances and support rigorous and contamination-free evaluation.

## News

- **08/03/2026**: SWE-bench-Live/Windows has been released along with its leaderboard. It evaluates an LLM's ability to resolve Windows-specific implementation issues and take actions in PowerShell. Our newest paper, covering all progress since the last NIPS paper, is available at [RepoLaunch: Automating Build&Test Pipeline of Code Repositories on ANY Language and ANY Platform](https://arxiv.org/abs/2603.05026).
- **10/01/2026**: SWE-bench-Live/Multi-Language has been released along with its leaderboard and merged into main. For the old source code of SWE-bench-Live/SWE-bench-Live (Python-only, the NIPS paper version), refer to the [python-only branch](https://github.com/microsoft/SWE-bench-Live/tree/python-only).
- **04/12/2025**: We have updated the evaluation results of GPT-5 and Claude-4.5 on our website. Note that Claude might have seen the ground truth, because its knowledge cutoff month is July 2025. We have also separated the RepoLaunch project into its own repository, [RepoLaunch](https://github.com/microsoft/RepoLaunch/). Please contribute code related to the RepoLaunch agent to this new repository. For more information, refer to [PR#35](https://github.com/microsoft/SWE-bench-Live/pull/35).
- **09/17/2025**: Dataset updated (through 08/2025)! We’ve finalized the update process for the Hugging Face dataset SWE-bench-Live/SWE-bench-Live (Python tasks): **Each month, we will add 50 newly verified, high-quality issues to the dataset test split**. The `lite` and `verified` splits will remain frozen, ensuring fair leaderboard comparisons and keeping evaluation costs manageable. To access all the latest issues, please refer to the `full` split!
## 🚀 Set Up

```bash
# Python >= 3.10
pip install -e .
```

> [!NOTE]
> This evaluation script is backward compatible with SWE-bench-Live/SWE-bench-Live (Python-only, the NIPS paper version), which uses the swebench library for evaluation. However, if you want to evaluate on SWE-bench-Live/SWE-bench-Live (Python), for fair comparison we still recommend that you go to our old [Python-only branch](https://github.com/microsoft/SWE-bench-Live/blob/python-only/README.md) and follow the old evaluation method. The evaluation script below is better suited to our new datasets, SWE-bench-Live/MultiLang and SWE-bench-Live/Windows.

Test your installation by running:

```bash
python -m evaluation.evaluation \
    --dataset SWE-bench-Live/MultiLang \
    --instance_ids rsyslog__rsyslog-6047 \
    --platform linux \
    --patch_dir gold \
    --output_dir logs/test \
    --workers 1 \
    --overwrite 1
```

## 🚥 Evaluation

Evaluate your model on SWE-bench-Live.

Collect the patch diff produced by your agent:

```bash
# unix
cd /testbed; [ -d .git ] || { g=$(find . -maxdepth 2 -mindepth 2 -type d -name .git -print -quit); [ -n "$g" ] && cd "${g%/.git}"; } ; git --no-pager diff HEAD --text;
```

```powershell
# win
cd C:\testbed; if (-not (Test-Path .git)) { $g = Get-ChildItem -Directory -Recurse -Depth 2 -Force -ErrorAction SilentlyContinue | Where-Object { $_.Name -eq '.git' } | Select-Object -First 1; if ($g) { Set-Location $g.Parent.FullName } }; git --no-pager diff HEAD --text;
```

Prediction patch file format:

```json
{
    "instance_id1": {
        "model_patch": "git diff",
        ...
    },
    "instance_id2": {
        "model_patch": "git diff",
        ...
    },
    ...
}
```

Run gold patch:

```bash
# On Windows, if there are decoding issues, first run:
#   $env:PYTHONUTF8="1" ; $env:PYTHONIOENCODING="utf-8"
#
# --dataset:   SWE-bench-Live/SWE-bench-Live, SWE-bench-Live/MultiLang, SWE-bench-Live/Windows,
#              or a path to a local dataset file such as a jsonl
# --split:     refer to the SWE-bench-Live datasets on Hugging Face; ignore for a local jsonl file
# --platform:  linux or windows
# --overwrite: 0 for no, 1 for yes
# --start-month / --end-month: default to the oldest and newest months if not specified
python -m evaluation.evaluation \
    --dataset SWE-bench-Live/SWE-bench-Live \
    --split <split> \
    --platform linux \
    --patch_dir gold \
    --output_dir logs/gold \
    --workers 10 \
    --overwrite 0 \
    --start-month 2025-06 \
    --end-month 2025-07
```

> [!NOTE]
> Users have reported that task instances may become invalid over time. For benchmarking and training, we suggest running the evaluation with the gold patch three times to filter out invalid instances. When reporting a success rate, you may use as the denominator the actual number of instances that passed with the gold patch on your machine at the time of your experiment.

Evaluation command:

```bash
# On Windows, if there are decoding issues, first run:
#   $env:PYTHONUTF8="1" ; $env:PYTHONIOENCODING="utf-8"
#
# --dataset:   SWE-bench-Live/SWE-bench-Live, SWE-bench-Live/MultiLang, SWE-bench-Live/Windows,
#              or a path to a local dataset file such as a jsonl
# --split:     refer to the SWE-bench-Live datasets on Hugging Face; ignore for a local jsonl file
# --platform:  linux or windows
python -m evaluation.evaluation \
    --dataset SWE-bench-Live/SWE-bench-Live \
    --split <split> \
    --platform linux \
    --patch_dir
```
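The prediction file format and the gold-patch denominator rule described above can be sketched in a few lines of Python. This is a minimal illustration, not part of the repository: the function names (`build_predictions`, `write_predictions`, `valid_instances`, `success_rate`) are hypothetical, and the sets of passing instance IDs are assumed to have been collected by you from your own evaluation runs. The sketch also assumes that an instance counts as valid only if it passed with the gold patch in every run, which is one reasonable reading of "filter invalid instances".

```python
import json
from pathlib import Path


def build_predictions(diffs: dict[str, str]) -> dict[str, dict[str, str]]:
    """Wrap each instance's raw `git diff` text in the prediction format."""
    return {instance_id: {"model_patch": diff} for instance_id, diff in diffs.items()}


def write_predictions(diffs: dict[str, str], out_path: str) -> Path:
    """Write the prediction patch file as JSON and return its path."""
    path = Path(out_path)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(build_predictions(diffs), indent=2), encoding="utf-8")
    return path


def valid_instances(gold_runs: list[set[str]]) -> set[str]:
    """Instances that passed with the gold patch in every run (assumed rule)."""
    if not gold_runs:
        return set()
    return set.intersection(*gold_runs)


def success_rate(resolved: set[str], gold_runs: list[set[str]]) -> float:
    """Resolved fraction, using gold-validated instances as the denominator."""
    valid = valid_instances(gold_runs)
    if not valid:
        return 0.0
    # Only count resolved instances that are also valid on this machine.
    return len(resolved & valid) / len(valid)
```

For example, with three gold runs you would pass three sets of instance IDs to `success_rate`, together with the set of instances your model resolved. Instances that failed in any gold run drop out of both the numerator and the denominator.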

SWE-bench-Live Curation Pipeline