{

"cells": [

{

"cell_type": "markdown",

"id": "2ddc3c7c",

"metadata": {},

"source": [

"# PyTorch and Mitsuba interoperability"

]

},

{

"cell_type": "markdown",

"id": "abfc1227",

"metadata": {},

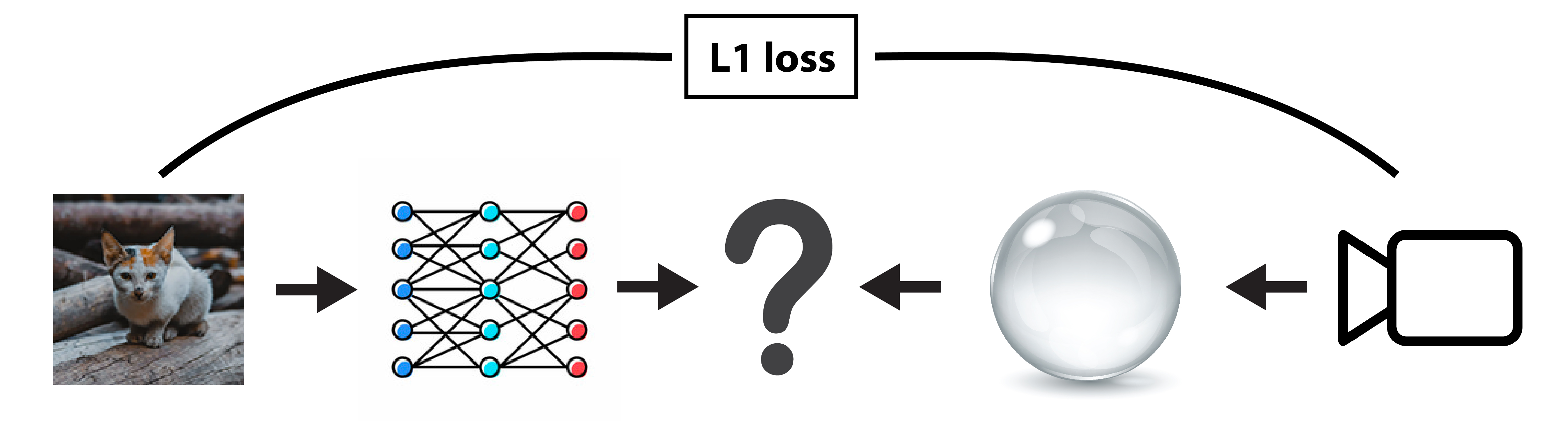

"source": [

"## Overview\n",

"\n",

"This tutorial shows how to mix differentiable computations between Mitsuba and [PyTorch][1]. The ability to combine these frameworks allows us to squeeze an entire rendering pipeline between neural layers whilst still preserving the differentiability (end-to-end) of their combination.\n",

"\n",

"Note that the necessary communication and synchronization between Dr.Jit and PyTorch along with the complexity of traversing two separate computation graph data structures produces an overhead when compared to an implementation which only uses Dr.Jit. We generally recommend sticking with Dr.Jit unless the problem requires neural network building blocks like fully connected layers or convolutions, where PyTorch provides a clear advantage.\n",

"\n",

"In this example, we are going to train a single fully connected layer to pre-distort a texture image to counter the distortion introduced by a refractive object placed in front of the camera when looking at the textured plane. The objective of this optimization will be to minimize the difference between the rendered image and the input texture image.\n",

"\n",

"We assume the reader is familiar with the PyTorch framework or has followed at least the basic [PyTorch tutorials][2].\n",

"\n",

"\n",

"\n",

"\n",

"

\n",

"\n",

"🚀 **You will learn how to:**\n",

"\n",

"

\n",

"

Use the dr.wrap() function decorator to insert Mitsuba computations in a PyTorch pipeline

\n",

"

\n",

"\n",

"

\n",

"\n",

"[1]: https://pytorch.org\n",

"[2]: https://pytorch.org/tutorials/beginner/basics/quickstart_tutorial.html"

]

},

{

"cell_type": "markdown",

"id": "aec07a13",

"metadata": {},

"source": [

"## Setup\n",

"\n",

"As always, let's start by importing `mitsuba` and `drjit` and setting an AD-aware variant."

]

},

{

"cell_type": "code",

"execution_count": 1,

"id": "0c6f3cc2-6edb-425c-bc56-fe65c270d623",

"metadata": {},

"outputs": [],

"source": [

"import drjit as dr\n",

"import mitsuba as mi\n",

"mi.set_variant('cuda_ad_rgb', 'llvm_ad_rgb')"

]

},

{

"cell_type": "markdown",

"id": "853d7afa",

"metadata": {},

"source": [

"We will then import `torch` as well as `matplotlib` to later display the resulting textures and rendered images."

]

},

{

"cell_type": "code",

"execution_count": 2,

"id": "f40f1dcd-9fbe-4dd3-93e3-adce381b696b",

"metadata": {},

"outputs": [],

"source": [

"import torch\n",

"import torch.nn as nn\n",

"import torch.nn.functional as F\n",

"\n",

"from matplotlib import pyplot as plt"

]

},

{

"cell_type": "markdown",

"id": "8805bde2-8fc3-4cf6-8695-c9552eb5a313",

"metadata": {},

"source": [

"

\n",

"\n",

"⚠️ **Note on caching memory allocator**\n",

"\n",

"Similarly to Dr.Jit, PyTorch uses a [caching memory allocator][1] to speed up memory allocations. It is possible for the two frameworks to over allocate memory on the GPU, resulting in allocation failure on the Mitsuba side. When running into such problem, we recommend trying releasing all unoccupied cached memory of PyTorch using `torch.cuda.empty_cache()` which should mitigate this issue.\n",

"\n",

"

\n",

"\n",

"[1]: https://pytorch.org/docs/stable/notes/cuda.html#memory-management"

]

},

{

"cell_type": "markdown",

"id": "e7f0e85c",

"metadata": {},

"source": [

"## Load texture dataset\n",

"\n",

"\n",

"In order for the fully connected layer to learn the distortion mapping rather than the distorted textured itself, we are going to train it on multiple input texture images.\n",

"\n",

"The following code loads a few squared images using `mi.Bitmap` and converts them into 32 bits floating point RGB images."

]

},

{

"cell_type": "code",

"execution_count": 3,

"id": "83fbe367-016b-411b-ad41-fa24bc77518e",

"metadata": {},

"outputs": [

{

"data": {