repo,created_at,label,title,body

facebook/react,2023-08-02 02:26:00,bug,Bug: [18.3.0-canary] renderToString hoists some tags to top(working in 18.2),"

React version: 18.3.0-canary-493f72b0a-20230727

## Steps To Reproduce

1. Run the following code.

```js

import * as ReactDOMServer from ""react-dom/server"";

const element = (

{/* meta and title are hoisted */}

title

{/* the script tag is not hoisted */}

{/* but this is hoisted */}

);

console.log(ReactDOMServer.renderToString(element));

```

Link to code example:

https://codesandbox.io/s/react1830-canary-493f72b0a-20230727-ssr-hoist-bug-lvhj45?file=/src/index.js

## The current behavior

console.log outputs `title`

## The expected behavior

console.log outputs `title`"

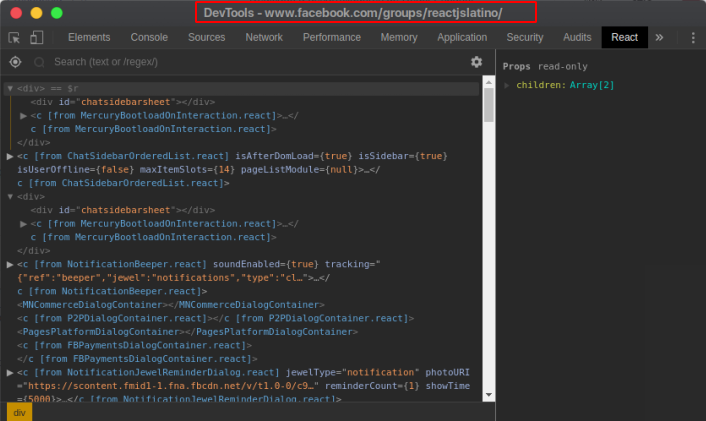

facebook/react,2023-07-17 22:43:05,bug,[DevTools Bug]: Chrome extension gets disconnected from the page after 30sec of inactivity,"### Website or app

https://react.dev/

### Repro steps

Steps:

1. Go to a React page like https://react.dev/

2. Open the DevTools Components tab; everything works correctly.

3. Switch to another (non-React) tab and wait 30 sec to 5 min (not exact).

4. Go back to the tab that has the React page you're debugging.

5. The Components tab no longer works: you can't select and view components on the page.

My guess is that it's related to Chrome killing the service worker after inactivity on the page. See https://bugs.chromium.org/p/chromium/issues/detail?id=1152255#c185

Going back to the page doesn't seem to wake the service worker up.
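
If that guess is right, one possible mitigation is to reconnect lazily whenever the port has dropped. This is a minimal sketch, not the extension's actual code: `createReconnectingPort` and the injected `connect` function are hypothetical names, and `connect` is assumed to return an object shaped like a `chrome.runtime.Port` (`postMessage`, `onMessage.addListener`, `onDisconnect.addListener`).

```js
// Hypothetical helper: reopens the port on the next send after a disconnect.
// `connect` is injected so the logic does not depend on a real extension runtime.
function createReconnectingPort(connect, onMessage) {
  let port = null;

  function open() {
    port = connect();
    port.onMessage.addListener(onMessage);
    port.onDisconnect.addListener(() => {
      // The other end went away (for example, the worker was shut down).
      port = null;
    });
  }

  return {
    postMessage(message) {
      if (port === null) {
        open(); // reconnect lazily instead of failing silently
      }
      port.postMessage(message);
    },
    isConnected() {
      return port !== null;
    },
  };
}
```

In an extension, `connect` could be something like `() => chrome.runtime.connect({ name: 'devtools' })`; the port name here is made up for illustration.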

Chrome: Version 114.0.5735.198 (Official Build) (x86_64)

React Extension: 4.28.0

macOS: 13.4.1

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-07-13 19:01:47,bug,[DevTools Bug]: Deprecated __REACT_DEVTOOLS_GLOBAL_HOOK__ ????,"### Website or app

N/A

### Repro steps

Hi, I have heard that new versions of React will not support `__REACT_DEVTOOLS_GLOBAL_HOOK__`. Is there any information about this update that you can share? Is there a new way to achieve the same result as `__REACT_DEVTOOLS_GLOBAL_HOOK__` with a different method? What is the future of React without `__REACT_DEVTOOLS_GLOBAL_HOOK__`?

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-06-07 17:26:43,bug,"[DevTools Bug]: React devtools stuck at Loading React Element Tree, troubleshooting instructions are Chrome-specific","### Website or app

corporate project (private)

### Repro steps

Load page, then open React DevTools. Reloading or closing and reopening the tab does not fix the problem. Quitting and reopening Firefox sometimes fixes the problem.

The [linked troubleshooting instructions](https://github.com/facebook/react/blob/main/packages/react-devtools/README.md#the-react-tab-shows-no-components) provide no guidance for users of browsers other than Chrome; I am running Firefox v114 (on macOS 13.2.1).

### How often does this bug happen?

Often

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-05-31 15:17:41,bug,Bug: Radio button onChange not called in current React Canary,"

React version: 18.3.0-canary-a1f97589f-20230526

## Steps To Reproduce

1. Create radio buttons that toggle `disabled` in `onChange`

2. After selecting each radio button, `onChange` is no longer called

Link to code example:

The following CodeSandbox demonstrates the issue with the current react canary version. The issue is not present when react & react-dom versions are changed to stable 18.2.0

https://codesandbox.io/s/react-canary-radio-buttons-deiqb3?file=/src/App.js

## The current behavior

`<input>`'s `onChange` prop is not called on subsequent clicks of the input

## The expected behavior

`<input>`'s `onChange` prop should be called on subsequent clicks of the input

"

facebook/react,2023-05-16 18:27:09,bug,[DevTools Bug]: Strict mode badge points to the old docs,"### Website or app

https://fb.me/devtools-strict-mode

### Repro steps

The Strict mode warning badge points to https://fb.me/devtools-strict-mode which points to the strict mode section in [the old docs](https://legacy.reactjs.org/docs/strict-mode.html) instead of [the new docs](https://react.dev/reference/react/StrictMode).

Badge:

Code:

https://github.com/facebook/react/blob/4cd7065665ea2cf33c306265c8d817904bb401ca/packages/react-devtools-shared/src/devtools/views/Components/InspectedElement.js#L240

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

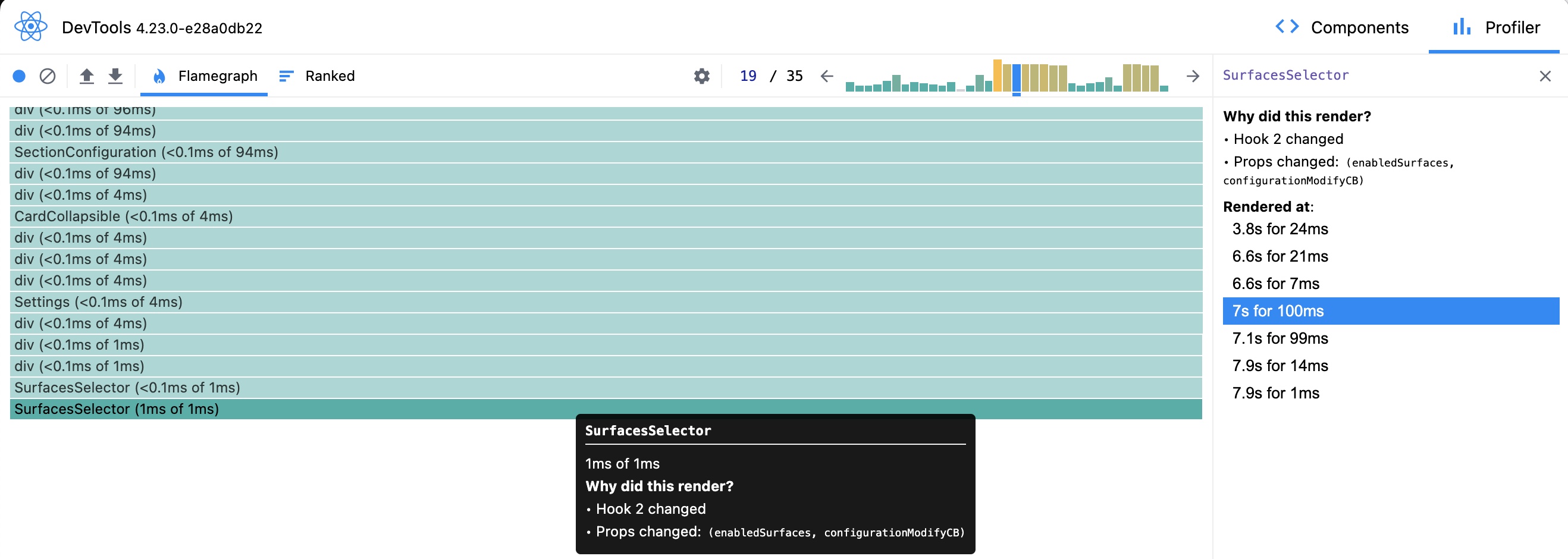

facebook/react,2023-05-08 16:35:24,bug,[DevTools Bug]: Regression - profiling doesn't store props value ,"### Website or app

Doesn't apply

### Repro steps

The old version of DevTools provided the ability to see changes in props / state between commits.

https://legacy.reactjs.org/cc2a8b37bbce52c49a11c2f8e55dccbc/see-which-props-changed.gif

The current version provides information about the reason for a re-render, but the lack of ability to see exactly how props / state variables change between re-renders is a huge regression for the utility of the Profiler.

The Components tab keeps only the latest values for the props / state.

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

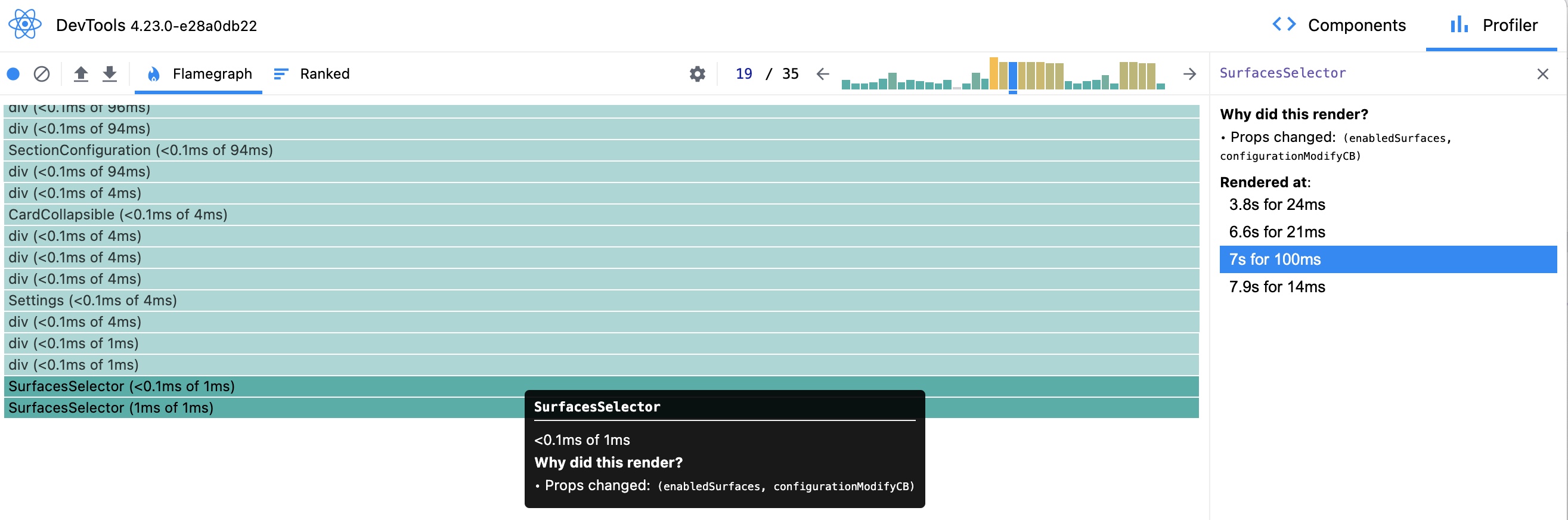

facebook/react,2023-05-06 23:03:50,bug,"[DevTools Bug] Cannot add node ""1751"" because a node with that id is already in the Store.","### Website or app

local repo

### Repro steps

Loading a React component with the React profiler recording enabled

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.6-7f8c501f6

### Error message (automated)

Cannot add node ""1751"" because a node with that id is already in the Store.

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:28581:41

at bridge_Bridge.emit (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:26606:22)

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:26775:14

at listener (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:57029:39)

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Cannot add node because a node with that id is already in the Store. in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2023-05-02 03:53:41,bug,[DevTools Bug]: React pages not being detected as using React in Incognito mode,"### Website or app

https://opensource.fb.com

### Repro steps

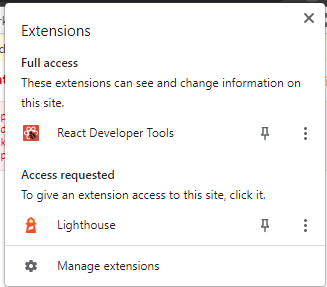

It seems that with the latest updates of Chrome and React DevTools, the extension cannot detect pages as using React in incognito mode. Screenshot attached.

* _Chrome version: 112.0.5615.137 (arm64)_

* _React DevTools version: 4.27.6 (4/20/2023)_

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-04-24 07:00:55,bug,[DevTools Bug]: components tree not loaded in Microsoft Edge,"### Website or app

https://react.dev/

### Repro steps

1. Load react app in Microsoft Edge

2. Open dev tools and go to components tree

3. The tree is not loaded:

4. This is the message I get: Loading React Element Tree... If this seems stuck, please follow the [troubleshooting instructions](https://github.com/facebook/react/blob/main/packages/react-devtools/README.md#the-react-tab-shows-no-components).

Edge version - latest: Microsoft Edge

Version 112.0.1722.58 (Official build) (64-bit)

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-04-07 09:03:43,bug,"[DevTools Bug] Cannot add node ""108084"" because a node with that id is already in the Store.","### Website or app

local react development

### Repro steps

Open React Dev Tools

### How often does this bug happen?

Often

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.3-28ce1c171

### Error message (automated)

Cannot add node ""108084"" because a node with that id is already in the Store.

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:28167:41

at bridge_Bridge.emit (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:26196:22)

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:26365:14

at listener (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56618:39)

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Cannot add node because a node with that id is already in the Store. in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2023-04-04 12:09:08,bug,[DevTools Bug]: DevTools settings are not being saved in the Edge browser,"### Website or app

https://react.dev/

### Repro steps

1. Open DevTools

2. Click View Settings

3. Change one of the options

4. Refresh page

5. No option is being saved

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-03-22 18:12:35,bug,[DevTools Bug]: 'Unable to find React on the page' in incognito on Firefox,"### Website or app

https://react.dev

### Repro steps

1. Open an incognito tab

2. Visit a React 18 website

3. Try to use DevTools (I'm on `4.27.1`)

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-03-14 19:55:26,bug,"[DevTools Bug] Cannot add node ""8891"" because a node with that id is already in the Store.","### Website or app

localhost

### Repro steps

Tried to use the react dev tools Components tab inspector tool

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.2-1a88fbb67

### Error message (automated)

Cannot add node ""8891"" because a node with that id is already in the Store.

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:27863:41

at bridge_Bridge.emit (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:25892:22)

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:26061:14

at listener (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56323:39)

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Cannot add node because a node with that id is already in the Store. in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2023-03-03 19:05:34,bug,Bug: Error occurs when returning empty fragment '<>' on a page,"I was working when I commented out some code and saw this error.

Then I started testing possible ways to reproduce the bug.

Notes:

1. Using <>{null} doesn't give the error

2. Using <>{undefined} gives the error

React version: 18.2.0

## Steps To Reproduce

1.import { Fragment } from ""react"";

export default async function Companies() {

return (

{/* Or => <>*/}

);

}

2. Start localhost (dev server, etc.)

## The current behavior

## The expected behavior

"

facebook/react,2023-02-23 04:42:56,bug,why not?[DevTools Bug]: ,"### Website or app

Website

### Repro steps

Loading React Element Tree...

If this seems stuck, please follow the [troubleshooting instructions](https://github.com/facebook/react/tree/main/packages/react-devtools#the-issue-with-chrome-v101-and-earlier-versions).

### How often does this bug happen?

Every time

### DevTools package (automated)

Loading React Element Tree... If this seems stuck, please follow the troubleshooting instructions.

### DevTools version (automated)

Loading React Element Tree... If this seems stuck, please follow the troubleshooting instructions.

### Error message (automated)

Loading React Element Tree... If this seems stuck, please follow the troubleshooting instructions.

### Error call stack (automated)

```text

Loading React Element Tree...

If this seems stuck, please follow the troubleshooting instructions.

```

### Error component stack (automated)

```text

Loading React Element Tree...

If this seems stuck, please follow the troubleshooting instructions.

```

### GitHub query string (automated)

```text

Loading React Element Tree...

If this seems stuck, please follow the troubleshooting instructions.

```

"

facebook/react,2023-02-10 17:36:03,bug,Unable to establish connection with the sandpack bundler.[DevTools Bug]: ,"### Website or app

https://beta.reactjs.org/learn/sharing-state-between-components

### Repro steps

https://beta.reactjs.org/learn/sharing-state-between-components

Unable to establish connection with the sandpack bundler.

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-02-03 16:17:32,bug,[DevTools Bug]: React Dev Tools breaks craigslist?,"### Website or app

https://newyork.craigslist.org/search/hhh

### Repro steps

1. Enable React Dev Tools

2. Visit https://newyork.craigslist.org/search/hhh

3. 50% of the time you will see a broken page https://imgur.com/a/yJPeAvA

4. Disable React Dev Tools

5. Visit https://newyork.craigslist.org/search/hhh

6. 100% of the time you will see a working page

### How often does this bug happen?

Often

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

```text

TypeError: svs-boot-setManifest-exception:Cannot read properties of null (reading 'insertBefore')

at cl.injectCss (bbe8a523a089547e594fa2f101021699a377645c.js:6:12097)

at cl.injectResource (bbe8a523a089547e594fa2f101021699a377645c.js:6:14293)

at bbe8a523a089547e594fa2f101021699a377645c.js:6:20658

at Array.forEach ()

at injectResourceSet (bbe8a523a089547e594fa2f101021699a377645c.js:6:20634)

at bigBang (bbe8a523a089547e594fa2f101021699a377645c.js:6:17286)

at cl.setManifest (bbe8a523a089547e594fa2f101021699a377645c.js:6:18123)

at manifest.js:1:4

cl.unexpected @ bbe8a523a089547e594fa2f101021699a377645c.js:6

cl.setManifest @ bbe8a523a089547e594fa2f101021699a377645c.js:6

(anonymous) @ manifest.js:1

VM1218:1 Uncaught SyntaxError: Unexpected end of JSON input

at JSON.parse ()

at localStorage-092e9f9e2f09450529e744902aa7cdb3a5cc868d.html:38:37

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-01-30 03:38:53,bug,[DevTools Bug]: Expected static flag was missing,"### Website or app

https://github.com/Contrick64/scryfall-random

### Repro steps

This error reproduces on initial page load. I can't seem to find what caused it to start showing up, as it points to a part of my code that has existed since well before I first got the error.

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-01-25 11:39:56,bug,"ERROR TypeError: Cannot read property 'createElement' of undefined, js engine: hermes","### Website or app

I'm using flipper to debug react-native app

### Repro steps

migrate to current version of RN-0.71.1

using flipper

enable hermes engine

run the APP

[see](https://github.com/facebook/react/issues/26042#issue-1556179961)

[here](https://github.com/facebook/react-native/issues/35958#issue-1556258460)

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

```text

ERROR TypeError: Cannot read property 'createElement' of undefined, js engine: hermes

ERROR TypeError: Cannot read property 'createElement' of undefined, js engine: hermes

ERROR TypeError: Cannot read property 'createElement' of undefined, js engine: hermes

ERROR TypeError: Cannot read property 'createElement' of undefined, js engine: hermes

```

### Error component stack (automated)

```text

this is related to --->> path: node_modules/react-devtools-core/dist/backend.js

function initialize() {

canvas = window.document.createElement('canvas');

canvas.style.cssText = ""\\n xx-background-color: red;\\n xx-opacity: 0.5;\\n bottom: 0;\\n left: 0;\\n pointer-events: none;\\n position: fixed;\\n right: 0;\\n top: 0;\\n z-index: 1000000000;\\n "";

var root = window.document.documentElement;

root.insertBefore(canvas, root.firstChild);

}

```
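
One way to avoid this crash would be to skip the overlay when no DOM is available. This is a minimal sketch under that assumption, not the actual `react-devtools-core` code: `createOverlayCanvas` is a hypothetical name, and `win` is passed in as a parameter so the guard can be exercised outside a browser.

```js
// Hypothetical guard: only create the overlay canvas when a DOM exists.
function createOverlayCanvas(win) {
  const doc = win && win.document;
  if (!doc || typeof doc.createElement !== 'function') {
    // No DOM available (for example, React Native running on Hermes).
    return null;
  }

  const canvas = doc.createElement('canvas');
  canvas.style.cssText =
    'bottom: 0; left: 0; pointer-events: none; position: fixed; ' +
    'right: 0; top: 0; z-index: 1000000000;';

  // Same insertion strategy as the snippet above: put the canvas first.
  const root = doc.documentElement;
  root.insertBefore(canvas, root.firstChild);
  return canvas;
}
```

Callers would then treat a `null` result as meaning that highlighting is unavailable in the current environment.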

### GitHub query string (automated)

_No response_"

facebook/react,2023-01-19 23:00:02,bug,[DevTools Bug]: This page doesn't appear to be using react,"### Website or app

reactjs.org

### Repro steps

Go to website.

Click on the react DevTools icon in the extensions.

After reload, hover over the extension.

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2023-01-05 08:46:14,bug,Bug: Suspense | client component should have a queue,"React version: 18.2.0

Next version: 13.1.1

## Steps To Reproduce

1. Suspend using a (fake) promise in a client component in app dir (next)

2. Try to useState after the use() call

````ts

'use client';

import { use, useState } from 'react';

const testPromise = new Promise((resolve) => {

setTimeout(() => {

resolve('use test promise');

});

});

export default function Page() {

use(testPromise);

useState(0);

return <div>Test</div>; // assumed wrapper element

}

````

Link to code example:

https://codesandbox.io/s/github/xiel/app-playground/tree/suspense-error/?from-embed=&file=/app/page.tsx

## The current behavior

Suspending for a promise in a client component and using state/reducer after results in errors during hydration:

```

react-dom.development.js?9d87:94 Warning: An error occurred during hydration. The server HTML was replaced with client

react-dom.development.js?9d87:94 Warning: You are accessing ""digest"" from the errorInfo object passed to onRecoverableError.

on-recoverable-error.js?eb92:17 Uncaught Error: Should have a queue. This is likely a bug in React. Please file an issue.

```

## The expected behavior

No error during hydration.

"

facebook/react,2022-12-13 11:39:55,bug,[DevTools Bug]: Too Much Recursion - Firefox & Appsync,"### Website or app

https://eu-west-1.console.aws.amazon.com/appsync/

### Repro steps

1. Use Firefox with React Dev Tools added

2. Log into AWS, go to Appsync console and select an API

3. The app will then freeze and you should get a `too much recursion` error in the console

This only seems to happen in Firefox. Not confident on whether this is a DevTools, Firefox or Appsync issue but it only seems to happen when DevTools is enabled.

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-12-11 00:02:00,bug,"[DevTools Bug] Element ""5"" not found","### Website or app

local dev environment

### Repro steps

Occurs on app launch

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""5"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-12-06 11:31:26,bug,"[DevTools Bug] Element ""65"" not found","### Website or app

https://sh0ny-it.github.io/hw-master/#/junior

### Repro steps

E

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""65"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-12-05 15:47:21,bug,"[DevTools Bug] Element ""24"" not found","### Website or app

http://localhost:3000

### Repro steps

Does anyone know a solution? I saw other people who have the same problem, but no one helped :/

### How often does this bug happen?

Only once

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""24"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-12-05 10:48:01,bug,[DevTools Bug]: Components Tab does not show up,"### Website or app

https://beta.reactjs.org/

### Repro steps

1. Visit website

2. Open dev tools

This happens on https://beta.reactjs.org/ but I first noticed it on a personal project (localhost).

When I open the dev tools, the CPU goes up. At first, the Components tab does not show up. After a loooooong time, it does show up, however when I click on it nothing renders inside.

I don't know if it's the newest Chrome version or the newest extension version that's causing it.

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-12-04 16:36:01,bug,"[DevTools Bug] Element ""35"" not found","### Website or app

.

### Repro steps

.

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""35"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```
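
The query string above contains unencoded spaces and colons. A minimal sketch of building an encoded search URL with the standard `URL` API follows. The label qualifier is left out for brevity, and the variable names are only illustrative, not taken from DevTools.

```js
// Qualifiers copied from the automated query string above (label qualifier omitted).
const query = [
  'Element not found',
  'in:title',
  'is:issue',
  'is:open',
  'repo:facebook/react',
].join(' ');

// URLSearchParams percent-encodes reserved characters and encodes spaces as '+'.
const url = new URL('https://api.github.com/search/issues');
url.searchParams.set('q', query);

console.log(url.toString());
```

Reading the parameter back with `url.searchParams.get('q')` returns the original, decoded query.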

"

facebook/react,2022-12-04 06:45:46,bug,"[DevTools Bug] Element ""7"" not found","### Website or app

The error was thrown at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

### Repro steps

The error occurred at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""7"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-12-02 23:53:37,bug,"[DevTools Bug] Element ""717"" not found","### Website or app

http://localhost:3000/managerCr

### Repro steps

When I give the form a new key so that it resets, this error is thrown.

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""717"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-12-02 09:38:22,bug,"[DevTools Bug] Element ""34"" not found","### Website or app

test

### Repro steps

React DevTools gives me this error. How can I fix it?

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""34"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-12-01 15:31:57,bug,"[DevTools Bug] Element ""6"" not found","### Website or app

localhost

### Repro steps

When I click App > State, the browser shows the error ""Element ""6"" not found"" along with more red lines, and I don't understand what it means. The browser updated to version 108.0.5359.72 ten minutes later. Please help.

### How often does this bug happen?

Only once

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""6"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-12-01 04:52:51,bug,"[DevTools Bug] Element ""21"" not found","### Website or app

https://inquisitive-haupia-f14ecb.netlify.app/

### Repro steps

map list

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.27.0-bd2ad89a4

### Error message (automated)

Element ""21"" not found

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39558:15

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:40933:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39771:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42429:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:35080:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37705:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44505:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39237:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39439:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39409:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44686:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:44115:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31940:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:32584:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39834:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:56039:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Element not found in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-11-11 12:15:45,bug,[DevTools Bug]: react-devtools depends on vulnerable version of electron,"### Website or app

https://github.com/facebook/react/blob/main/packages/react-devtools/package.json

### Repro steps

### Issue

electron package versions <18.3.7 suffer from a security vulnerability: ""Exfiltration of hashed SMB credentials on Windows via file:// redirect"".

See https://github.com/advisories/GHSA-p2jh-44qj-pf2v

### Solution

Upgrade the electron dependency in react-devtools to >=18.3.7
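
A minimal sketch of checking an installed version against that threshold, assuming plain `MAJOR.MINOR.PATCH` strings. The helper names are hypothetical, and the only boundary modelled is the `<18.3.7` one quoted in this report; the advisory itself may list other release lines.

```js
// Hypothetical helper: parses 'MAJOR.MINOR.PATCH' into three numbers.
// Pre-release tags and range operators are deliberately not supported.
function parseVersion(version) {
  const match = /^(\d+)\.(\d+)\.(\d+)$/.exec(version);
  if (!match) {
    throw new Error('Unsupported version string: ' + version);
  }
  return match.slice(1).map(Number);
}

// Returns a negative number if a < b, zero if equal, positive if a > b.
function compareVersions(a, b) {
  const pa = parseVersion(a);
  const pb = parseVersion(b);
  for (let i = 0; i < 3; i += 1) {
    if (pa[i] !== pb[i]) return pa[i] - pb[i];
  }
  return 0;
}

// Threshold taken from this report: versions below 18.3.7 are affected.
const FIRST_FIXED_VERSION = '18.3.7';

function isAffected(installedVersion) {
  return compareVersions(installedVersion, FIRST_FIXED_VERSION) < 0;
}

console.log(isAffected('18.3.6'), isAffected('18.3.7'));
```

Comparing the parts numerically rather than as strings matters here, since a string comparison would rank `18.10.0` below `18.3.7`.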

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-10-17 08:20:47,bug,"[DevTools Bug] Could not find ID for Fiber ""MiddleSectionContainer""","### Website or app

///

### Repro steps

log in

inspect element

### How often does this bug happen?

Only once

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.25.0-336ac8ceb

### Error message (automated)

Could not find ID for Fiber ""MiddleSectionContainer""

### Error call stack (automated)

```text

at getFiberIDThrows (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:7007:11)

at fiberToSerializedElement (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:8765:11)

at inspectElementRaw (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:8934:21)

at Object.inspectElement (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:9237:38)

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:11584:56

at Bridge.emit (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:4192:18)

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:4838:14

at listener (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/react_devtools_backend.js:13163:9)

```

### Error component stack (automated)

```text

at InspectedElementContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:39612:3)

at Suspense

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37920:5)

at div

at InspectedElementErrorBoundaryWrapper (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:38454:3)

at NativeStyleContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:41105:3)

at div

at div

at OwnersListContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:33778:3)

at SettingsModalContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:36399:3)

at Components_Components (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:43155:52)

at ErrorBoundary_ErrorBoundary (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:37920:5)

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:38092:3)

at PortaledContent (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:38122:5)

at div

at div

at div

at ThemeProvider (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:38092:3)

at TimelineContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:43336:3)

at ProfilerContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:42781:3)

at TreeContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:30676:3)

at SettingsContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:31302:3)

at ModalDialogContextController (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:38517:3)

at DevTools_DevTools (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:54684:3)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Could not find ID for Fiber ""MiddleSectionContainer"" in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-10-06 05:33:48,bug,"[DevTools Bug] Cannot add node ""5370"" because a node with that id is already in the Store.","### Website or app

----

### Repro steps

----

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.25.0-336ac8ceb

### Error message (automated)

Cannot add node ""5370"" because a node with that id is already in the Store.

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:26596:41

at bridge_Bridge.emit (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:24626:22)

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:24795:14

at listener (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:54959:39)

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Cannot add node because a node with that id is already in the Store. in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-09-09 22:42:43,bug,[DevTools Bug]: React extension tab in Edge DevTools doesn't have emoji prefix in title.,"### Website or app

https://reactjs.org/

### Repro steps

1. In Edge, open Developer Tools with the React extension on any website that uses React.

2. Check the React extension tabs (Profiler and Components): their titles don't have the emoji prefix that they have in Chrome.

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-09-05 03:06:52,bug,[DevTools Bug]: DevTools shouldn't skip over keyed Fragments in the tree,"### Website or app

https://github.com/reactjs/reactjs.org/pull/4981

### Repro steps

1. Wrap something into ``

2. It doesn't show up in DevTools

We filter out fragments because they tend to be useless. But this one is important! Keys are crucial and we should show anything with a key in the tree.
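
A minimal sketch of the rule proposed here, assuming a hypothetical node shape with `type` and `key` fields. This is not the actual DevTools filter code, only an illustration of hiding fragments unless they carry a key.

```js
// Hypothetical predicate: every non-fragment node is shown,
// and a fragment is shown only when it has a key.
function shouldShowInTree(node) {
  if (node.type !== 'fragment') return true;
  return node.key != null;
}

// Illustrative data, not real DevTools store entries.
const nodes = [
  { type: 'component', name: 'App', key: null },
  { type: 'fragment', name: 'Fragment', key: null },
  { type: 'fragment', name: 'Fragment', key: 'row-1' },
];

const visible = nodes.filter(shouldShowInTree);
console.log(visible.map((n) => (n.key ? n.name + ' key=' + n.key : n.name)));
```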

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-08-23 10:14:02,bug,Bug: out of order application of useState changes,"After updating my app to React 18, I had a problem with inconsistent state from useState.

I created a code example that shows the problem:

* I have two states: `const [done, setDone] = useState(false);` (inside a hook) and `const [ids, setIds] = React.useState([])`

* I call `setIds([1,2,4])` (after an await, but it executes immediately, as the console shows) and then `setDone(true)`

* then the component is rerendered with the updated `done` but the original `ids`

* then the component is rerendered again, this time with both states updated

React version: 18.2.0, 18.3.0-next

Link to code example:

Smaller repro: (from eps1lon's comment)

## The current behavior

In the example after clicking run:

`Inner` is rerendered with `done: true`, but without updated `ids`.

`FormikLike` is created with empty `ids`.

`[]` is displayed under button.

## The expected behavior

First `Inner` rerender should have updated `ids` state.

`FormikLike` should be created with non-empty `ids`.

`[1,2,4]` is displayed under button.

It worked this way in React 17.

In https://stackoverflow.com/a/48610973 @gaearon wrote:

> > But can you trust React to update the state in the same order as setState is called for the same component?

>

> Yes.

The answer was for class components, but I hope the same is true for multiple useState hooks in a single function component."

facebook/react,2022-08-11 13:08:10,bug,"[DevTools Bug] Cannot add node ""7448"" because a node with that id is already in the Store.","### Website or app

https://github.com/digita-webshop/digita-webshop-frontend/tree/develop

### Repro steps

1) npm install

2) npm start

3) inspect element

4) components tab

### How often does this bug happen?

Sometimes

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.25.0-336ac8ceb

### Error message (automated)

Cannot add node ""7448"" because a node with that id is already in the Store.

### Error call stack (automated)

```text

emit@moz-extension://9908b50a-48f2-40b1-9532-79813e5a6947/build/main.js:24626:22

bridge_Bridge/this._wallUnlisten<@moz-extension://9908b50a-48f2-40b1-9532-79813e5a6947/build/main.js:24795:14

listener@moz-extension://9908b50a-48f2-40b1-9532-79813e5a6947/build/main.js:54959:41

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Cannot add node because a node with that id is already in the Store. in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-07-27 06:25:25,bug,"[DevTools Bug]: ""open in editor"" not working for vscode remote files","### Website or app

empty

### Repro steps

/data/home/xxxx/src/test.tsx

1. Inspect component

2. User clicks ""open in editor""

3. file not found

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-07-20 15:32:03,bug,[DevTools Bug]: window.bundle.js:2 TypeError: Cannot read properties of undefined (reading 'action'),"### Website or app

https://onepiece-cardgame.dev/builder?f=%24R+%28%22zoro%22%29

### Repro steps

1. Load webpage

2. DevTools doesn't work

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-07-15 06:54:26,bug,"[DevTools Bug] Could not inspect element with id ""69""","### Website or app

Internal company application

### Repro steps

Sometimes I get this error; I resolve it by restarting my device.

### How often does this bug happen?

Sometimes

### DevTools package (automated)

react-devtools-core

### DevTools version (automated)

4.14.0-d0ec283819

### Error message (automated)

Could not inspect element with id ""69""

### Error call stack (automated)

```text

Uncaught Error: Could not inspect element with id ""69""

The error occurred at Ni (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:261874)

at Suspense

at yl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:248667)

at div

at Tl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:252578)

at Ji (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:278469)

at div

at div

at Oa (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:229816)

at Va (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:236032)

at /Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:305187

at yl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:248667)

at /Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:249909

at div

at div

at Ns (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:299485)

at vn (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:180478)

at Un (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:194071)

at Pl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:253263)

at kc (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:339874)

```

### Error component stack (automated)

```text

at Ni (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:261874)

at Suspense

at yl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:248667)

at div

at Tl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:252578)

at Ji (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:278469)

at div

at div

at Oa (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:229816)

at Va (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:236032)

at /Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:305187

at yl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:248667)

at /Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:249909

at div

at div

at Ns (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:299485)

at vn (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:180478)

at Un (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:194071)

at Pl (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:253263)

at kc (/Applications/React Native Debugger.app/Contents/Resources/app.asar/node_modules/react-devtools-core/dist/standalone.js:48:339874)

```

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Could not inspect element with id in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-07-07 17:02:35,bug,"[DevTools Bug] Cannot remove node ""0"" because no matching node was found in the Store.","### Website or app

Bitbucket repo

### Repro steps

Install react-devtools with npm and run it.

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-core

### DevTools version (automated)

4.24.7-7f673317f

### Error message (automated)

Cannot remove node ""0"" because no matching node was found in the Store.

### Error call stack (automated)

```text

at /Users/matt/.config/yarn/global/node_modules/react-devtools-core/dist/standalone.js:48:333971

at f.emit (/Users/matt/.config/yarn/global/node_modules/react-devtools-core/dist/standalone.js:48:279464)

at /Users/matt/.config/yarn/global/node_modules/react-devtools-core/dist/standalone.js:48:281005

at /Users/matt/.config/yarn/global/node_modules/react-devtools-core/dist/standalone.js:48:667650

at Array.forEach (<anonymous>)

at A.e.onmessage (/Users/matt/.config/yarn/global/node_modules/react-devtools-core/dist/standalone.js:48:667634)

at A.t (/Users/matt/.config/yarn/global/node_modules/react-devtools-core/dist/standalone.js:39:2838)

at A.emit (events.js:315:20)

at e.exports.L (/Users/matt/.config/yarn/global/node_modules/react-devtools-core/dist/standalone.js:3:58322)

at e.exports.emit (events.js:315:20)

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Cannot remove node because no matching node was found in the Store. in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-07-05 03:46:47,bug,[DevTools Bug]: Uncaught TypeError: hook.sub is not a function,"### Website or app

New project created by CRA

### Repro steps

1. Create a new project with CRA on macOS Monterey v12.4;

2. Import 'react-devtools' on the first line of `src/index.tsx`;

3. Run `sudo npm i --location=global react-devtools`;

4. Run `npx react-devtools`;

5. In my React app's console, run `t=document.createElement('script'); t.type='text/javascript'; t.src='http://localhost:8097'; document.head.prepend(t)`;

6. The console shows `Uncaught TypeError: hook.sub is not a function`; below are some screenshots.
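
For reference, the console one-liner from step 5 can be written as a small function. This is only a sketch: `injectDevToolsScript` is a name made up here (it is not part of react-devtools), and the default URL assumes the standalone DevTools server is listening on port 8097 as in the steps above.

```js
// Hypothetical helper (not a react-devtools API): injects a script tag that
// loads the standalone DevTools backend, mirroring step 5 above.
// The document is passed in so the function can also be exercised outside a browser.
function injectDevToolsScript(doc, src = 'http://localhost:8097') {
  const script = doc.createElement('script');
  script.type = 'text/javascript';
  script.src = src;
  // Prepend so the script is the first child of <head>, as in the one-liner.
  doc.head.prepend(script);
  return script;
}
```

In the browser console this would be called as `injectDevToolsScript(document)`.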

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-06-29 02:11:00,bug,"[DevTools Bug] Cannot remove node ""25"" because no matching node was found in the Store.","### Website or app

localhost

### Repro steps

This error occurs every time I rebuild my React app

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.24.7-7f673317f

### Error message (automated)

Cannot remove node ""25"" because no matching node was found in the Store.

### Error call stack (automated)

```text

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:26516:43

at bridge_Bridge.emit (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:24434:22)

at chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:24603:14

at listener (chrome-extension://fmkadmapgofadopljbjfkapdkoienihi/build/main.js:54566:39)

```

### Error component stack (automated)

_No response_

### GitHub query string (automated)

```text

https://api.github.com/search/issues?q=Cannot remove node because no matching node was found in the Store. in:title is:issue is:open is:public label:""Component: Developer Tools"" repo:facebook/react

```

"

facebook/react,2022-06-08 13:57:18,bug,[DevTools Bug]: DevTools shows every page as using `React`,"### Website or app

https://vuejs.org/guide/introduction.html

### Repro steps

Open the [Vue 3](https://vuejs.org/guide/introduction.html) docs site; React DevTools reports that this page uses a production build of `React`.

The same happens on GitHub.

I'm using Chrome:

Version 102.0.5005.61 (Official Build) (x86_64)

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-06-05 03:26:15,bug,[DevTools Bug]: Warning: Internal React error: Expected static flag was missing. Please notify the React team.,"### Website or app

https://codepen.io/alejozarate/pen/zYRLKww

### Repro steps

The component is successfully rendered with all the interactions working properly.

As far as I can tell, the error is only shown in the console. The traceback points to line 15 of the CodePen:

const _ahr = await SContract.methods.rewardPerHour().call();

### How often does this bug happen?

Every time

### DevTools package (automated)

_No response_

### DevTools version (automated)

_No response_

### Error message (automated)

_No response_

### Error call stack (automated)

_No response_

### Error component stack (automated)

_No response_

### GitHub query string (automated)

_No response_"

facebook/react,2022-05-26 02:08:32,bug,"[DevTools Bug] When inspecting, hook values after `useDeferredValue` are offset","### Website or app

https://github.com/Alduino/React-useDeferredValue-DevTools-Reprod

### Repro steps

1. Start the app in either dev or production mode.

2. Open the React dev tools

3. Click on the ""App"" component

4. The first Memo has the value `3.14` (the value that the second Memo should have) instead of `1.41`

If you want it to throw an error instead of just looking at the values, you can set `window.throwIfIncorrect = true` before DevTools inspects the component.

I tried in CodeSandbox's embedded React dev tools as well - the issue happens in React 18.0 (though the first hook has no value and the second has the first hook's value) but it doesn't happen in 18.1.

### How often does this bug happen?

Every time

### DevTools package (automated)

react-devtools-extensions

### DevTools version (automated)

4.24.0-82762bea5

### Error message (automated)

First is 3.14 when it should be 1.41

### Error call stack (automated)

```text

I@moz-extension://38b0497f-37d9-49e7-9147-393888469493/build/react_devtools_backend.js:14022:6

exports.inspectHooksOfFiber@moz-extension://38b0497f-37d9-49e7-9147-393888469493/build/react_devtools_backend.js:14090:12

inspectElementRaw@moz-extension://38b0497f-37d9-49e7-9147-393888469493/build/react_devtools_backend.js:8847:65

inspectElement@moz-extension://38b0497f-37d9-49e7-9147-393888469493/build/react_devtools_backend.js:9130:38

agent_Agent/<@moz-extension://38b0497f-37d9-49e7-9147-393888469493/build/react_devtools_backend.js:11002:56

emit@moz-extension://38b0497f-37d9-49e7-9147-393888469493/build/react_devtools_backend.js:4137:18

Bridge/this._wallUnlisten<@moz-extension://38b0497f-37d9-49e7-9147-393888469493/build/react_devtools_backend.js:4780:14