---

title: "Workflow step examples"

author: "Author: Daniela Cassol (danielac@ucr.edu) and Thomas Girke (thomas.girke@ucr.edu)"

date: "Last update: `r format(Sys.time(), '%d %B, %Y')`"

output:
  BiocStyle::html_document:
    toc_float: true
    code_folding: show
  BiocStyle::pdf_document: default
package: systemPipeR
vignette: |
  %\VignetteEncoding{UTF-8}
  %\VignetteIndexEntry{systemPipeR: Workflow design and reporting generation environment}
  %\VignetteEngine{knitr::rmarkdown}
fontsize: 14pt
bibliography: bibtex.bib
editor_options:
  chunk_output_type: console

type: docs

weight: 4

---

```{r style, echo = FALSE, results = 'asis'}

BiocStyle::markdown()

options(width=80, max.print=1000)

knitr::opts_chunk$set(

eval=as.logical(Sys.getenv("KNITR_EVAL", "TRUE")),

cache=as.logical(Sys.getenv("KNITR_CACHE", "TRUE")),

tidy.opts=list(width.cutoff=80), tidy=TRUE)

```

```{r setup, echo=FALSE, message=FALSE, warning=FALSE}

suppressPackageStartupMessages({

library(systemPipeR)

})

```

## Define environment settings and samples

A typical workflow starts with generating the expected working environment
containing the proper directory structure, input files, and parameter settings.
To simplify this task, one can load one of the existing NGS workflow templates
provided by _`systemPipeRdata`_ into the current working directory. The
following does this for the _`rnaseq`_ template. The name of the resulting
workflow directory can be specified under the _`mydirname`_ argument. The
default _`NULL`_ uses the name of the chosen workflow. An error is issued if a
directory of the same name and path already exists. On Linux and OS X systems,
one can also create new workflow instances from the command line of a terminal as shown
[here](http://bioconductor.org/packages/devel/data/experiment/vignettes/systemPipeRdata/inst/doc/systemPipeRdata.html#generate-workflow-template).

To apply workflows to custom data, the user needs to modify the _`targets`_ file and, if
necessary, update the corresponding _`.cwl`_ and _`.yml`_ files. A collection of pre-generated
_`.cwl`_ and _`.yml`_ files is provided in the _`param/cwl`_ subdirectory of each workflow template. They
are also viewable in the GitHub repository of _`systemPipeRdata`_ ([see
here](https://github.com/tgirke/systemPipeRdata/tree/master/inst/extdata/param)).

```{r load_package, eval=TRUE}

library(systemPipeR)

library(systemPipeRdata)

genWorkenvir(workflow = "rnaseq", mydirname = NULL)

setwd("rnaseq")

```

```{r setting_dir, include=FALSE, warning=FALSE}

knitr::opts_knit$set(root.dir = 'rnaseq')

unlink("systemPipeRNAseq_importWF.Rmd")

unlink("systemPipeRNAseq.Rmd")

```

## Project initialization

To create a workflow within _`systemPipeR`_, we can start by defining an empty
container and checking the directory structure:

```{r SPRproject, eval=TRUE}

sal <- SPRproject()

```

### Required packages and resources

The `systemPipeR` package needs to be loaded [@H_Backman2016-bt].

```{r load_SPR, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

library(systemPipeR)

}, step_name = "load_SPR")

```

## Read Preprocessing

### Preprocessing with _`preprocessReads`_ function

The function _`preprocessReads`_ applies predefined or custom
read preprocessing functions, such as quality filtering or adaptor trimming
routines, to all FASTQ files referenced in a _`SYSargsList`_ container.
Internally, _`preprocessReads`_ uses the _`FastqStreamer`_ function from
the _`ShortRead`_ package to stream through large FASTQ files in a
memory-efficient manner. The following example performs adaptor trimming with
the _`trimLRPatterns`_ function from the _`Biostrings`_ package.

Here, we append this step to the _`SYSargsList`_ object created previously.
All the parameters are defined in the _`preprocessReads/preprocessReads-se.yml`_ file.

```{r preprocessing, message=FALSE, eval=TRUE, spr='sysargs'}

targetspath <- system.file("extdata", "targets.txt", package = "systemPipeR")

appendStep(sal) <- SYSargsList(

step_name = "preprocessing",

targets = targetspath, dir = TRUE,

wf_file = "preprocessReads/preprocessReads-se.cwl",

input_file = "preprocessReads/preprocessReads-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = c(FileName = "_FASTQ_PATH1_", SampleName = "_SampleName_"),

dependency = "load_SPR"

)

```

The paths to the resulting output FASTQ files can be checked as follows:

```{r outfiles, eval=TRUE}

outfiles(sal)[[2]]

```

After the trimming step a new targets file is generated containing the paths to

the trimmed FASTQ files. The new targets information can be used for the next workflow

step instance, _e.g._ running the NGS alignments with the trimmed FASTQ files.
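As a rough illustration of how a targets table carries file paths from one step to the next, the sketch below builds a small tab-delimited targets table in memory and derives paths for trimmed files. It uses base R only. The table content, the `results` directory and the `_trim.fastq.gz` suffix are assumptions made for this example; the actual output names are set by the CWL parameter files.

```r
# Illustrative targets table; lines starting with '#' are header comments
targets_txt <- paste(
    "# Project ID: example",
    "FileName\tSampleName\tFactor",
    "./data/SRR446027_1.fastq.gz\tM1A\tM1",
    "./data/SRR446028_1.fastq.gz\tM1B\tM1",
    "./data/SRR446029_1.fastq.gz\tA1A\tA1",
    sep = "\n")
targets <- read.delim(text = targets_txt, comment.char = "#",
                      stringsAsFactors = FALSE)

# Derive hypothetical paths for the trimmed files in a results directory
trimmed <- targets
trimmed$FileName <- file.path("results",
    sub("\\.fastq\\.gz$", "_trim.fastq.gz", basename(targets$FileName)))
trimmed[, c("FileName", "SampleName")]
```

The sample names and factors are left untouched, so a downstream step can keep matching files to samples while reading from the new paths.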

The following example shows how one can design a custom read preprocessing function
using utilities provided by the _`ShortRead`_ package, and then run it
in batch mode with the _`preprocessReads`_ function. To do so, one can
replace the function used in the `preprocessing` step and modify the `sal` object accordingly.

First, we define the function:

```{r custom_preprocessing_function, eval=FALSE}

appendStep(sal) <- LineWise({

filterFct <- function(fq, cutoff=20, Nexceptions=0) {

qcount <- rowSums(as(quality(fq), "matrix") <= cutoff, na.rm=TRUE)

# Retains reads where Phred scores are > cutoff, allowing up to Nexceptions lower-scoring positions

fq[qcount <= Nexceptions]

}

},

step_name = "custom_preprocessing_function",

dependency = "preprocessing")

```
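The counting rule used by `filterFct` can be tried without FASTQ input. The following is a minimal sketch, assuming a plain numeric matrix of Phred scores (one row per read) stands in for `as(quality(fq), "matrix")`. The matrix `qual` and the helper `keepRead` are made up for illustration and are not part of _`systemPipeR`_ or _`ShortRead`_.

```r
# Toy Phred score matrix: 4 reads (rows) x 5 cycles (columns); values are invented
qual <- matrix(c(30, 32, 35, 31, 30,
                 30, 18, 35, 31, 30,
                 12, 15, 35, 31, 30,
                 30, 32, NA, NA, NA),   # shorter read padded with NA
               nrow = 4, byrow = TRUE)

# Hypothetical helper mirroring the counting rule of filterFct
keepRead <- function(qual, cutoff = 20, Nexceptions = 0) {
    # Number of positions per read with a score at or below the cutoff
    qcount <- rowSums(qual <= cutoff, na.rm = TRUE)
    qcount <= Nexceptions
}

keepRead(qual)                   # strict: no low-quality positions allowed
keepRead(qual, Nexceptions = 1)  # tolerate one low-quality position per read
```

With the default settings only the first and fourth reads pass; allowing one exception also keeps the second read, which has a single position scored below the cutoff.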

Afterwards, we can edit the input parameter:

```{r editing_preprocessing, message=FALSE, eval=FALSE}

yamlinput(sal, 2)$Fct

yamlinput(sal, 2, "Fct") <- "'filterFct(fq, cutoff=20, Nexceptions=0)'"

yamlinput(sal, 2)$Fct ## check the new function

```

### Preprocessing with TrimGalore!

[TrimGalore!](http://www.bioinformatics.babraham.ac.uk/projects/trim_galore/) is
a wrapper tool to consistently apply quality and adapter trimming to FASTQ files,
with some extra functionality for Reduced Representation Bisulfite-Seq
(RRBS) libraries.

```{r trimGalore, eval=TRUE, spr='sysargs'}

targetspath <- system.file("extdata", "targets.txt", package = "systemPipeR")

appendStep(sal) <- SYSargsList(

step_name = "trimGalore",

targets = targetspath, dir = TRUE,

wf_file = "trim_galore/trim_galore-se.cwl",

input_file = "trim_galore/trim_galore-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = c(FileName = "_FASTQ_PATH1_", SampleName = "_SampleName_"),

dependency = "load_SPR",

run_step = "optional"

)

```

### Preprocessing with Trimmomatic

```{r trimmomatic, eval=TRUE, spr='sysargs'}

targetspath <- system.file("extdata", "targets.txt", package = "systemPipeR")

appendStep(sal) <- SYSargsList(

step_name = "trimmomatic",

targets = targetspath, dir = TRUE,

wf_file = "trimmomatic/trimmomatic-se.cwl",

input_file = "trimmomatic/trimmomatic-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = c(FileName = "_FASTQ_PATH1_", SampleName = "_SampleName_"),

dependency = "load_SPR",

run_step = "optional"

)

```

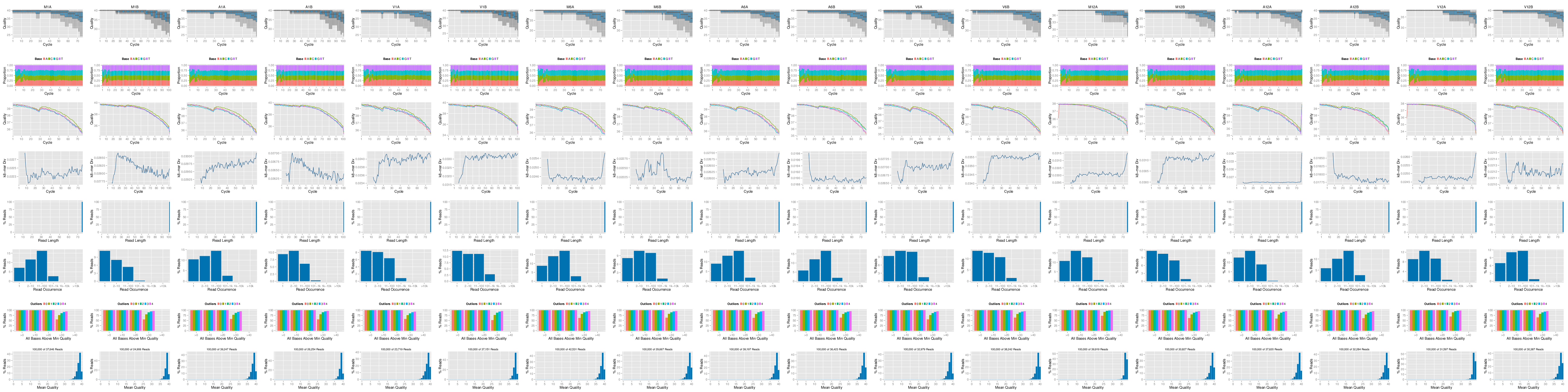

## FASTQ quality report

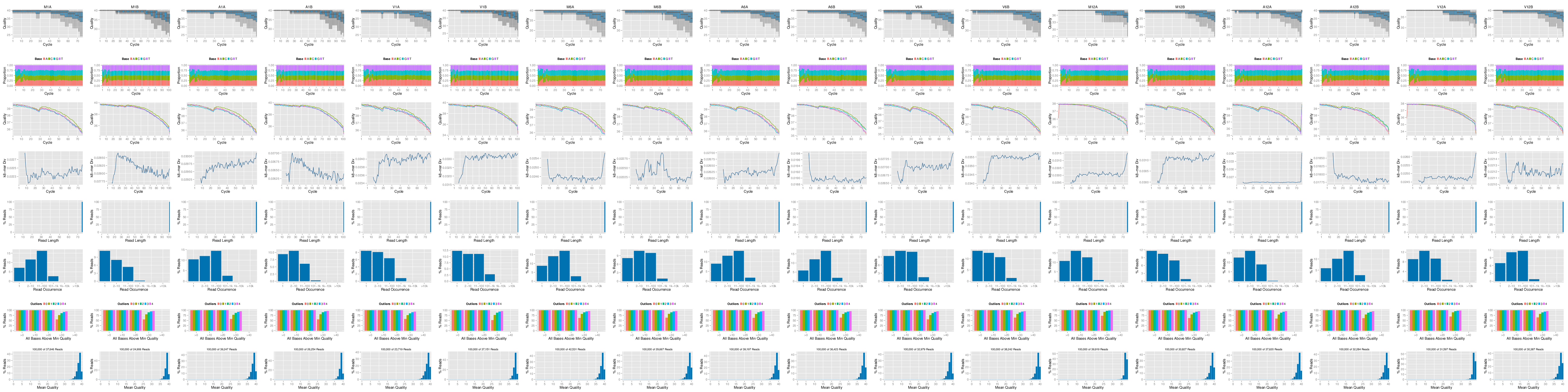

The following _`seeFastq`_ and _`seeFastqPlot`_ functions generate and plot a series of

useful quality statistics for a set of FASTQ files including per cycle quality

box plots, base proportions, base-level quality trends, relative k-mer

diversity, length and occurrence distribution of reads, number of reads above

quality cutoffs and mean quality distribution.

The function _`seeFastq`_ computes the quality statistics and stores the results in a
relatively small list object that can be saved to disk with _`save()`_ and
reloaded with _`load()`_ for later plotting. The argument _`klength`_ specifies the
k-mer length and _`batchsize`_ the number of reads to randomly sample from each
FASTQ file.
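To make the roles of _`batchsize`_ and _`klength`_ more concrete, the following base R sketch samples a few reads at random and enumerates their k-mers. It is only an illustration under simplifying assumptions: the reads are invented character strings, and the diversity measure shown here is not claimed to be the statistic that _`seeFastq`_ computes.

```r
set.seed(1)  # reproducible sampling
# Invented reads standing in for the sequences of one FASTQ file
reads <- c("ACGTACGTAC", "TTTTGGGGCC", "ACGTTTGACA", "GGGCCCAAAT", "ACGTACGTAC")

# batchsize: draw at most this many reads at random
batchsize <- 3
sampled <- sample(reads, size = min(batchsize, length(reads)))

# klength: enumerate all overlapping k-mers of each sampled read
klength <- 4
kmers <- unlist(lapply(sampled, function(x) {
    starts <- seq_len(nchar(x) - klength + 1)
    substring(x, starts, starts + klength - 1)
}))

# Share of distinct k-mers among all k-mers observed, a simple diversity measure
length(unique(kmers)) / length(kmers)
```

A larger batch size gives more stable statistics at the cost of run time, which is the trade-off behind sampling rather than reading every record.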

```{r fastq_quality, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

files <- getColumn(sal, step = "preprocessing", 'outfiles') # get outfiles from preprocessing step

fqlist <- seeFastq(fastq=files, batchsize=10000, klength=8)

pdf("./results/fastqReport.pdf", height = 18, width = 4*length(fqlist))

seeFastqPlot(fqlist)

dev.off()

},

step_name = "fastq_quality",

dependency = "preprocessing"

)

```

**Figure 1:** FASTQ quality report

The FASTQ quality report can also be computed in parallel on a single machine with multiple cores.

```{r fastq_quality_option, eval=FALSE}

appendStep(sal) <- LineWise({

files <- getColumn(sal, step = "preprocessing", 'outfiles') # get outfiles from preprocessing step

f <- function(x) seeFastq(fastq=files[x], batchsize=100000, klength=8)

fqlist <- bplapply(seq(along=files), f, BPPARAM = MulticoreParam(workers=4)) ## Number of workers = 4

pdf("./results/fastqReport.pdf", height=18, width=4*length(fqlist))

seeFastqPlot(unlist(fqlist, recursive=FALSE))

dev.off()

},

step_name = "fastq_quality",

dependency = "preprocessing"

)

```

## NGS Alignment software

After quality control, the sequence reads can be aligned to a reference genome or
transcriptome database. The following sections present several NGS sequence alignment
tools. Users should select the aligner and parameter settings best suited to
their own data set.

For all the following examples, it is necessary to install the respective software
and export the `PATH` accordingly. If an [Environment Modules](http://modules.sourceforge.net/)
system is available, the required software can be loaded with the _`moduleload(args)`_ function.

### Alignment with `HISAT2`

The following steps demonstrate how to use the short read aligner `HISAT2`
[@Kim2015-ve] in both interactive job submissions and batch submissions to
queuing systems of clusters using _`systemPipeR`_'s new CWL command-line interface.

- Build `HISAT2` index.

```{r hisat_index, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "hisat_index",

targets = NULL, dir = FALSE,

wf_file = "hisat2/hisat2-index.cwl",

input_file = "hisat2/hisat2-index.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = NULL,

dependency = "preprocessing"

)

```

The parameter settings of the aligner are defined in the `workflow_hisat2-se.cwl`
and `workflow_hisat2-se.yml` files. The following shows how to construct the
corresponding *SYSargsList* object and append it to the *sal* workflow.

- Alignment with `HISAT2` and `SAMtools`

It is possible to build a workflow with `HISAT2` and `SAMtools`.

```{r hisat_mapping_samtools, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "hisat_mapping",

targets = "preprocessing", dir = TRUE,

wf_file = "workflow-hisat2/workflow_hisat2-se.cwl",

input_file = "workflow-hisat2/workflow_hisat2-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars=c(preprocessReads_se="_FASTQ_PATH1_", SampleName="_SampleName_"),

dependency = c("hisat_index")

)

```

### Alignment with `Tophat2`

The NGS reads of this project can also be aligned against the reference genome

sequence using `Bowtie2/TopHat2` [@Kim2013-vg; @Langmead2012-bs].

- Build _`Bowtie2`_ index.

```{r bowtie_index, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "bowtie_index",

targets = NULL, dir = FALSE,

wf_file = "bowtie2/bowtie2-index.cwl",

input_file = "bowtie2/bowtie2-index.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = NULL,

dependency = "preprocessing",

run_step = "optional"

)

```

The parameter settings of the aligner are defined in the `workflow_tophat2-mapping-se.cwl`
and `tophat2-mapping-se.yml` files. The following shows how to construct the
corresponding *SYSargsList* object, using the outfiles from the `preprocessing` step.

```{r tophat2_mapping, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "tophat2_mapping",

targets = "preprocessing", dir = TRUE,

wf_file = "tophat2/workflow_tophat2-mapping-se.cwl",

input_file = "tophat2/tophat2-mapping-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars=c(preprocessReads_se="_FASTQ_PATH1_", SampleName="_SampleName_"),

dependency = c("bowtie_index"),

run_session = "compute",

run_step = "optional"

)

```

### Alignment with _`Bowtie2`_ (_e.g._ for miRNA profiling)

The following example runs _`Bowtie2`_ as a single process without submitting it to a cluster.

```{r bowtie2_mapping, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "bowtie2_mapping",

targets = "preprocessing", dir = TRUE,

wf_file = "bowtie2/workflow_bowtie2-mapping-se.cwl",

input_file = "bowtie2/bowtie2-mapping-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars=c(preprocessReads_se="_FASTQ_PATH1_", SampleName="_SampleName_"),

dependency = c("bowtie_index"),

run_session = "compute",

run_step = "optional"

)

```

### Alignment with _`BWA-MEM`_ (_e.g._ for VAR-Seq)

The following example runs BWA-MEM as a single process without submitting it to a cluster.

- Build the index:

```{r bwa_index, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "bwa_index",

targets = NULL, dir = FALSE,

wf_file = "bwa/bwa-index.cwl",

input_file = "bwa/bwa-index.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = NULL,

dependency = "preprocessing",

run_step = "optional"

)

```

- Prepare the alignment step:

```{r bwa_mapping, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "bwa_mapping",

targets = "preprocessing", dir = TRUE,

wf_file = "bwa/bwa-se.cwl",

input_file = "bwa/bwa-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars=c(preprocessReads_se="_FASTQ_PATH1_", SampleName="_SampleName_"),

dependency = c("bwa_index"),

run_session = "compute",

run_step = "optional"

)

```

### Alignment with _`Rsubread`_ (_e.g._ for RNA-Seq)

The following example shows how one can use the R-based aligner _`Rsubread`_ within the _`systemPipeR`_ environment, running it either from R or from the command line.

- Build the index:

```{r rsubread_index, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "rsubread_index",

targets = NULL, dir = FALSE,

wf_file = "rsubread/rsubread-index.cwl",

input_file = "rsubread/rsubread-index.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = NULL,

dependency = "preprocessing",

run_step = "optional"

)

```

- Prepare the alignment step:

```{r rsubread_mapping, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "rsubread",

targets = "preprocessing", dir = TRUE,

wf_file = "rsubread/rsubread-mapping-se.cwl",

input_file = "rsubread/rsubread-mapping-se.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars=c(preprocessReads_se="_FASTQ_PATH1_", SampleName="_SampleName_"),

dependency = c("rsubread_index"),

run_session = "compute",

run_step = "optional"

)

```

### Alignment with _`gsnap`_ (_e.g._ for VAR-Seq and RNA-Seq)

Another R-based short read aligner is _`gsnap`_ from the _`gmapR`_ package [@Wu2010-iq].

The code sample below introduces how to run this aligner on multiple nodes of a compute cluster.

- Build the index:

```{r gsnap_index, eval=TRUE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "gsnap_index",

targets = NULL, dir = FALSE,

wf_file = "gsnap/gsnap-index.cwl",

input_file = "gsnap/gsnap-index.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = NULL,

dependency = "preprocessing",

run_step = "optional"

)

```

- Prepare the alignment step:

```{r gsnap_mapping, eval=FALSE, spr='sysargs'}

appendStep(sal) <- SYSargsList(

step_name = "gsnap",

targets = "targetsPE.txt", dir = TRUE,

wf_file = "gsnap/gsnap-mapping-pe.cwl",

input_file = "gsnap/gsnap-mapping-pe.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = c(FileName1 = "_FASTQ_PATH1_",

FileName2 = "_FASTQ_PATH2_", SampleName = "_SampleName_"),

dependency = c("gsnap_index"),

run_session = "compute",

run_step = "optional"

)

```

```{r gsnap_mapping_internal, eval=TRUE, echo=FALSE}

appendStep(sal) <- SYSargsList(

step_name = "gsnap",

targets = system.file("extdata", "targetsPE.txt", package = "systemPipeR"), dir = TRUE,

wf_file = "gsnap/gsnap-mapping-pe.cwl",

input_file = "gsnap/gsnap-mapping-pe.yml",

dir_path = system.file("extdata/cwl", package = "systemPipeR"),

inputvars = c(FileName1 = "_FASTQ_PATH1_",

FileName2 = "_FASTQ_PATH2_", SampleName = "_SampleName_"),

dependency = c("gsnap_index"),

run_session = "compute",

run_step = "optional"

)

```

## Create symbolic links for viewing BAM files in IGV

The genome browser IGV supports reading of indexed/sorted BAM files via web URLs. This avoids creating unnecessary copies of these large files. To enable this approach, an HTML directory with HTTP access needs to be available in the user account (_e.g._ _`home/publichtml`_) of a system. If this is not the case, then the BAM files need to be moved or copied to the system where IGV runs. In the following, _`htmldir`_ defines the path to the HTML directory with HTTP access where the symbolic links to the BAM files will be stored. The corresponding URLs will be written to a text file specified under the _`urlfile`_ argument.

```{r igv, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

symLink2bam(

sysargs=stepsWF(sal)[[7]],

htmldir=c("~/.html/", "somedir/"),

urlbase="http://myserver.edu/~username/",

urlfile="IGVurl.txt"

)

},

step_name = "igv",

dependency = "hisat_mapping"

)

```
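The idea behind linking rather than copying can be sketched with base R file utilities. The example below is not the implementation of _`symLink2bam`_; it assumes a Unix-like system, works entirely inside a temporary directory, and uses an empty placeholder file instead of a real BAM file. The directory layout and file names are invented.

```r
# Everything happens in a temporary directory; all names are placeholders
workdir <- tempfile("igv_demo")
dir.create(workdir)
bam <- file.path(workdir, "sampleA.bam")
file.create(bam)                              # empty stand-in for a real BAM file

htmldir <- file.path(workdir, "html", "somedir")
dir.create(htmldir, recursive = TRUE)

link <- file.path(htmldir, basename(bam))
file.symlink(from = bam, to = link)           # link instead of copying the file

# Compose the URL under which the linked file would be served
urlbase <- "http://myserver.edu/~username/"
url <- paste0(urlbase, "somedir/", basename(bam))
writeLines(url, file.path(workdir, "IGVurl.txt"))
readLines(file.path(workdir, "IGVurl.txt"))
```

The URL file can then be opened to copy the links into IGV, while the BAM data itself stays in a single location on disk.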

## Read counting for mRNA profiling experiments

Create _`txdb`_ (needs to be done only once).

```{r create_txdb, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

library(GenomicFeatures)

txdb <- makeTxDbFromGFF(file="data/tair10.gff", format="gff",

dataSource="TAIR", organism="Arabidopsis thaliana")

saveDb(txdb, file="./data/tair10.sqlite")

},

step_name = "create_txdb",

dependency = "hisat_mapping"

)

```

The following performs read counting with _`summarizeOverlaps`_ in parallel mode with multiple cores.

```{r read_counting, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

library(BiocParallel)

txdb <- loadDb("./data/tair10.sqlite")

eByg <- exonsBy(txdb, by="gene")

outpaths <- getColumn(sal, step = "hisat_mapping", 'outfiles', column = 2)

bfl <- BamFileList(outpaths, yieldSize=50000, index=character())

multicoreParam <- MulticoreParam(workers=4); register(multicoreParam); registered()

counteByg <- bplapply(bfl, function(x) summarizeOverlaps(eByg, x, mode="Union",

ignore.strand=TRUE,

inter.feature=TRUE,

singleEnd=TRUE))

# Note: for strand-specific RNA-Seq set 'ignore.strand=FALSE' and for PE data set 'singleEnd=FALSE'

countDFeByg <- sapply(seq(along=counteByg),

function(x) assays(counteByg[[x]])$counts)

rownames(countDFeByg) <- names(rowRanges(counteByg[[1]]))

colnames(countDFeByg) <- names(bfl)

rpkmDFeByg <- apply(countDFeByg, 2, function(x) returnRPKM(counts=x, ranges=eByg))

write.table(countDFeByg, "results/countDFeByg.xls",

col.names=NA, quote=FALSE, sep="\t")

write.table(rpkmDFeByg, "results/rpkmDFeByg.xls",

col.names=NA, quote=FALSE, sep="\t")

},

step_name = "read_counting",

dependency = "create_txdb"

)

```

Please note, in addition to read counts this step generates RPKM normalized expression values. For most statistical differential expression or abundance analysis methods, such as _`edgeR`_ or _`DESeq2`_, the raw count values should be used as input. The usage of RPKM values should be restricted to specialty applications required by some users, _e.g._ manually comparing the expression levels of different genes or features.
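The RPKM normalization itself is simple arithmetic. The following base R sketch works through it on invented numbers, assuming the library size is taken as the column sum of the count table and the gene length as the total exon length in base pairs. The helper `rpkm` is hypothetical and only meant to show the calculation, not to replace _`returnRPKM`_.

```r
# Invented raw counts for 3 genes in 2 samples
counts <- matrix(c(100, 200,
                   500, 400,
                   400, 400),
                 nrow = 3, byrow = TRUE,
                 dimnames = list(c("geneA", "geneB", "geneC"), c("S1", "S2")))
# Invented total exon length per gene in base pairs
gene_length <- c(geneA = 1000, geneB = 2000, geneC = 4000)

# Hypothetical helper: reads per kilobase of exon per million mapped reads
rpkm <- function(counts, lengths) {
    per_million <- sum(counts) / 1e6   # library size in millions
    (counts / per_million) / (lengths / 1e3)
}
rpkmDF <- apply(counts, 2, rpkm, lengths = gene_length)
rpkmDF
```

In sample `S1`, `geneA` and `geneC` end up with the same RPKM value although their raw counts differ fourfold, because `geneC` is four times longer. This length correction is useful when comparing genes within a sample, but it discards the count-level information that _`edgeR`_ and _`DESeq2`_ rely on.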

#### Read and alignment count stats

Generate a table of read and alignment counts for all samples.

```{r align_stats, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

read_statsDF <- alignStats(args)

write.table(read_statsDF, "results/alignStats.xls",

row.names = FALSE, quote = FALSE, sep = "\t")

},

step_name = "align_stats",

dependency = "hisat_mapping"

)

```

The following shows the first four lines of the sample alignment stats file

provided by the _`systemPipeR`_ package. For simplicity the number of PE reads

is multiplied here by 2 to approximate proper alignment frequencies where each

read in a pair is counted.

```{r align_stats2, eval=TRUE}

read.table(system.file("extdata", "alignStats.xls", package = "systemPipeR"), header = TRUE)[1:4,]

```

## Read counting for miRNA profiling experiments

Download `miRNA` genes from `miRBase`.

```{r read_counting_mirna, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

system("wget https://www.mirbase.org/ftp/CURRENT/genomes/ath.gff3 -P ./data/")

gff <- rtracklayer::import.gff("./data/ath.gff3")

gff <- split(gff, elementMetadata(gff)$ID)

bams <- getColumn(sal, step = "bowtie2_mapping", 'outfiles', column = 2)

bfl <- BamFileList(bams, yieldSize=50000, index=character())

countDFmiR <- summarizeOverlaps(gff, bfl, mode="Union",

ignore.strand = FALSE, inter.feature = FALSE)

countDFmiR <- assays(countDFmiR)$counts

# Note: inter.feature=FALSE important since pre and mature miRNA ranges overlap

rpkmDFmiR <- apply(countDFmiR, 2, function(x) returnRPKM(counts = x, ranges = gff))

write.table(countDFmiR, "results/countDFmiR.xls",

col.names=NA, quote=FALSE, sep="\t")

write.table(rpkmDFmiR, "results/rpkmDFmiR.xls", col.names=NA, quote=FALSE, sep="\t")

},

step_name = "read_counting_mirna",

dependency = "bowtie2_mapping"

)

```

## Correlation analysis of samples

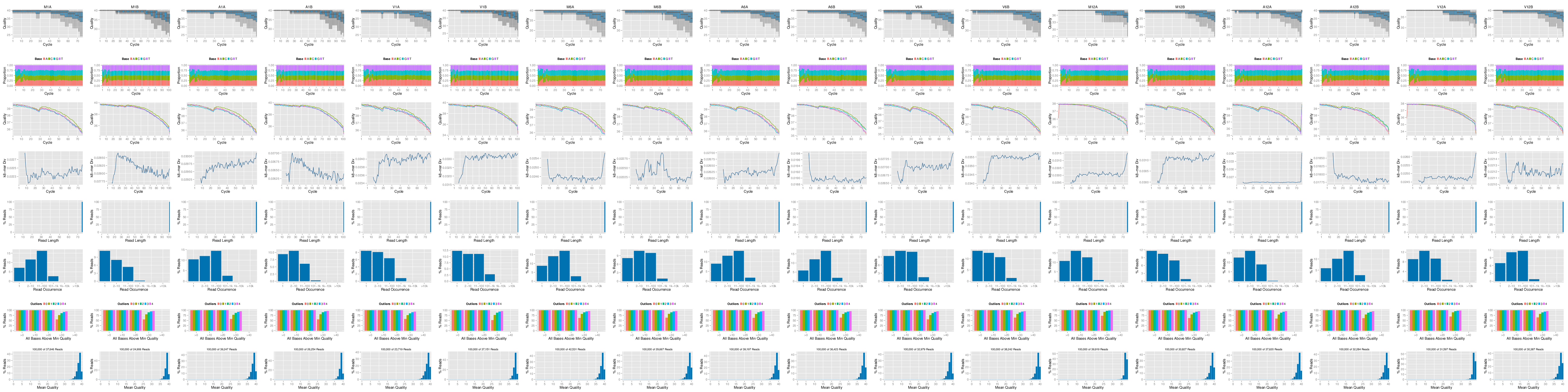

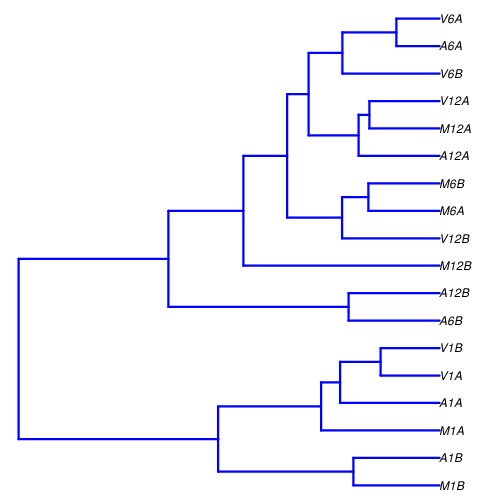

The following computes the sample-wise Spearman correlation coefficients from the _`rlog`_ (regularized-logarithm) transformed expression values generated with the _`DESeq2`_ package. After transformation to a distance matrix, hierarchical clustering is performed with the _`hclust`_ function and the result is plotted as a dendrogram ([sample\_tree.pdf](sample_tree.png)).

```{r sample_tree_rlog, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

library(DESeq2, warn.conflicts=FALSE, quietly=TRUE)

library(ape, warn.conflicts=FALSE)

countDFpath <- system.file("extdata", "countDFeByg.xls", package="systemPipeR")

countDF <- as.matrix(read.table(countDFpath))

colData <- data.frame(row.names = targetsWF(sal)[[2]]$SampleName,

condition=targetsWF(sal)[[2]]$Factor)

dds <- DESeqDataSetFromMatrix(countData = countDF, colData = colData,

design = ~ condition)

d <- cor(assay(rlog(dds)), method = "spearman")

hc <- hclust(dist(1-d))

plot.phylo(as.phylo(hc), type = "p", edge.col = 4, edge.width = 3,

show.node.label = TRUE, no.margin = TRUE)

},

step_name = "sample_tree_rlog",

dependency = "read_counting"

)

```

**Figure 2:** Correlation dendrogram of samples for _`rlog`_ values.
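The correlation-to-dendrogram steps can be reproduced on simulated data with base R alone. The sketch below is an illustration under stated assumptions: the expression values are random numbers rather than _`rlog`_ output, and `1 - d` is converted with `as.dist()`, a common variant of the `dist(1 - d)` call used above.

```r
set.seed(42)
# Invented log-scale expression values: 50 genes x 4 samples, two conditions
base_a <- rnorm(50, mean = 8)
base_b <- rnorm(50, mean = 8)
expr <- cbind(A1 = base_a + rnorm(50, sd = 0.1),
              A2 = base_a + rnorm(50, sd = 0.1),
              B1 = base_b + rnorm(50, sd = 0.1),
              B2 = base_b + rnorm(50, sd = 0.1))

d <- cor(expr, method = "spearman")   # sample-by-sample correlation matrix
hc <- hclust(as.dist(1 - d))          # 1 - correlation used directly as distance
grp <- cutree(hc, k = 2)              # group membership for two clusters
grp
# plot(hc) would draw the corresponding dendrogram
```

Replicates of the same simulated condition fall into the same cluster, which is the pattern one hopes to see for biological replicates in a real experiment.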

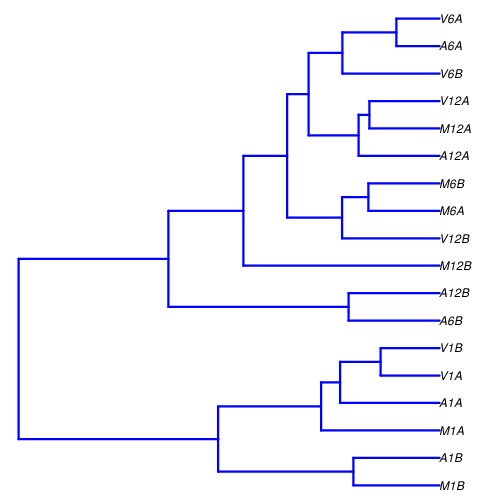

## DEG analysis with _`edgeR`_

The following _`run_edgeR`_ function is a convenience wrapper for
identifying differentially expressed genes (DEGs) in batch mode with
_`edgeR`_'s GLM method [@Robinson2010-uk] for any number of
pairwise sample comparisons specified under the _`cmp`_ argument. Users
are strongly encouraged to consult the
[_`edgeR`_](http://www.bioconductor.org/packages/devel/bioc/vignettes/edgeR/inst/doc/edgeRUsersGuide.pdf) vignette
for more detailed information on this topic and how to properly run _`edgeR`_
on data sets with more complex experimental designs.

```{r edger, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

targetspath <- system.file("extdata", "targets.txt", package = "systemPipeR")

targets <- read.delim(targetspath, comment = "#")

cmp <- readComp(file = targetspath, format = "matrix", delim = "-")

countDFeBygpath <- system.file("extdata", "countDFeByg.xls", package = "systemPipeR")

countDFeByg <- read.delim(countDFeBygpath, row.names = 1)

edgeDF <- run_edgeR(countDF = countDFeByg, targets = targets, cmp = cmp[[1]],

independent = FALSE, mdsplot = "")

DEG_list <- filterDEGs(degDF = edgeDF, filter = c(Fold = 2, FDR = 10))

},

step_name = "edger",

dependency = "read_counting"

)

```

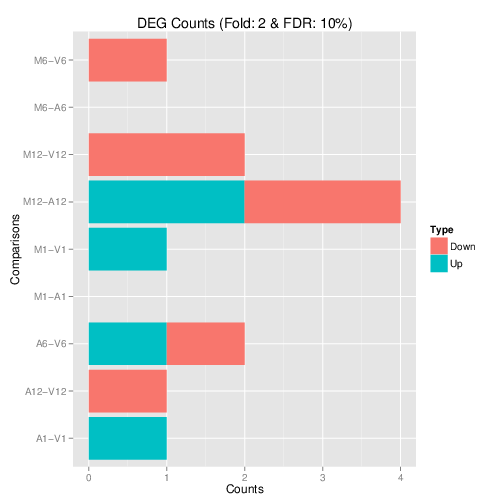

Filter and plot DEG results for up and down-regulated genes. Because of the small size of the toy data set used by this vignette, the _FDR_ value has been set to a relatively high threshold (here 10%). More commonly used _FDR_ cutoffs are 1% or 5%. The definition of '_up_' and '_down_' is given in the corresponding help file. To open it, type _`?filterDEGs`_ in the R console.
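The effect of the two thresholds can be illustrated on a small invented table. The sketch below assumes that the fold change cutoff is applied on the log2 scale in both directions and that the FDR cutoff is given in percent; the authoritative definition remains the one in _`?filterDEGs`_. All gene names and values are made up.

```r
# Invented DEG table for one comparison: log2 fold changes and FDR values
deg <- data.frame(
    logFC = c(2.5, -1.8, 0.4, 1.2, -3.0),
    FDR   = c(0.01, 0.04, 0.02, 0.30, 0.09),
    row.names = paste0("gene", 1:5))

fold <- 2      # minimum fold change (linear scale)
fdr_pct <- 10  # maximum FDR in percent

pass <- deg$FDR <= fdr_pct / 100
up   <- rownames(deg)[pass & deg$logFC >=  log2(fold)]
down <- rownames(deg)[pass & deg$logFC <= -log2(fold)]
list(Up = up, Down = down)
```

Here `gene3` is dropped because its fold change is too small and `gene4` because its FDR exceeds the cutoff, even though its fold change would qualify.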

**Figure 3:** Up and down regulated DEGs identified by _`edgeR`_.

## DEG analysis with _`DESeq2`_

The following _`run_DESeq2`_ function is a convenience wrapper for

identifying DEGs in batch mode with _`DESeq2`_ [@Love2014-sh] for any number of

pairwise sample comparisons specified under the _`cmp`_ argument. Users

are strongly encouraged to consult the

[_`DESeq2`_](http://www.bioconductor.org/packages/devel/bioc/vignettes/DESeq2/inst/doc/DESeq2.pdf) vignette

for more detailed information on this topic and how to properly run _`DESeq2`_

on data sets with more complex experimental designs.

```{r deseq2, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

degseqDF <- run_DESeq2(countDF=countDFeByg, targets=targets, cmp=cmp[[1]],

independent=FALSE)

DEG_list2 <- filterDEGs(degDF=degseqDF, filter=c(Fold=2, FDR=10))

},

step_name = "deseq2",

dependency = "read_counting"

)

```

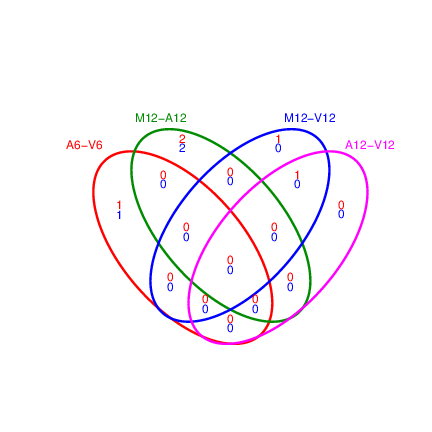

## Venn Diagrams

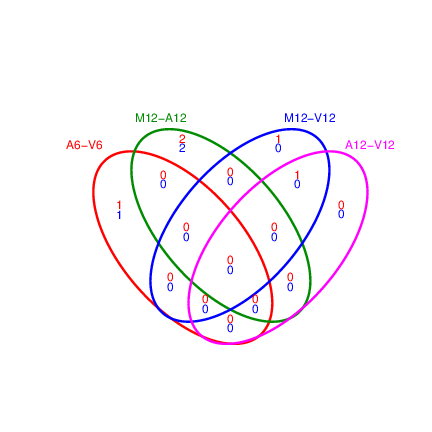

The function _`overLapper`_ can compute Venn intersects for large numbers of sample sets (up to 20 or more) and _`vennPlot`_ can plot 2-5 way Venn diagrams. A useful feature is the possibility to combine the counts from several Venn comparisons with the same number of sample sets in a single Venn diagram (here for 4 up and down DEG sets).

```{r vennplot, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

vennsetup <- overLapper(DEG_list$Up[6:9], type="vennsets")

vennsetdown <- overLapper(DEG_list$Down[6:9], type="vennsets")

vennPlot(list(vennsetup, vennsetdown), mymain="", mysub="",

colmode=2, ccol=c("blue", "red"))

},

step_name = "vennplot",

dependency = "edger"

)

```

**Figure 4:** Venn Diagram for 4 Up and Down DEG Sets.
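What a Venn intersect contains can be shown with base R set operations. This is a minimal sketch with invented gene sets; the helper `vennField` is hypothetical and does not reproduce the interface or output format of _`overLapper`_.

```r
# Invented DEG sets for three comparisons
sets <- list(cmp1 = c("g1", "g2", "g3", "g4"),
             cmp2 = c("g2", "g3", "g5"),
             cmp3 = c("g3", "g4", "g5", "g6"))

# Hypothetical helper: members found in exactly the sets named in 'inside'
vennField <- function(sets, inside) {
    shared <- Reduce(intersect, sets[inside])
    others <- unlist(sets[setdiff(names(sets), inside)], use.names = FALSE)
    setdiff(shared, others)
}

vennField(sets, c("cmp1", "cmp2", "cmp3"))  # common to all three
vennField(sets, c("cmp1", "cmp2"))          # in cmp1 and cmp2 only
vennField(sets, "cmp1")                     # unique to cmp1
```

Each field of a Venn diagram corresponds to one such call, and the number plotted in the field is the length of the returned vector.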

## GO term enrichment analysis of DEGs

### Obtain gene-to-GO mappings

The following shows how to obtain gene-to-GO mappings from _`biomaRt`_ (here for _A. thaliana_) and how to organize them for the downstream GO term enrichment analysis. Alternatively, the gene-to-GO mappings can be obtained for many organisms from Bioconductor's _`*.db`_ genome annotation packages or GO annotation files provided by various genome databases. For each annotation, this relatively slow preprocessing step needs to be performed only once. Subsequently, the preprocessed data can be loaded with the _`load`_ function as shown in the next subsection.

```{r get_go_biomart, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

library("biomaRt")

listMarts() # To choose BioMart database

listMarts(host="plants.ensembl.org")

m <- useMart("plants_mart", host="https://plants.ensembl.org")

listDatasets(m)

m <- useMart("plants_mart", dataset="athaliana_eg_gene", host="https://plants.ensembl.org")

listAttributes(m) # Choose data types you want to download

go <- getBM(attributes=c("go_id", "tair_locus", "namespace_1003"), mart=m)

go <- go[go[,3]!="",]; go[,3] <- as.character(go[,3])

go[go[,3]=="molecular_function", 3] <- "F"

go[go[,3]=="biological_process", 3] <- "P"

go[go[,3]=="cellular_component", 3] <- "C"

go[1:4,]

dir.create("./data/GO")

write.table(go, "data/GO/GOannotationsBiomart_mod.txt",

quote=FALSE, row.names=FALSE, col.names=FALSE, sep="\t")

catdb <- makeCATdb(myfile="data/GO/GOannotationsBiomart_mod.txt",

lib=NULL, org="", colno=c(1,2,3), idconv=NULL)

save(catdb, file="data/GO/catdb.RData")

},

step_name = "get_go_biomart",

dependency = "edger"

)

```

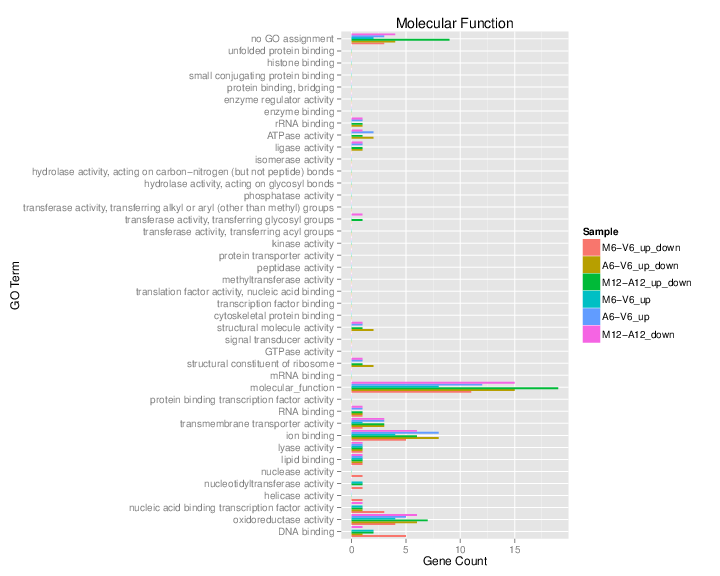

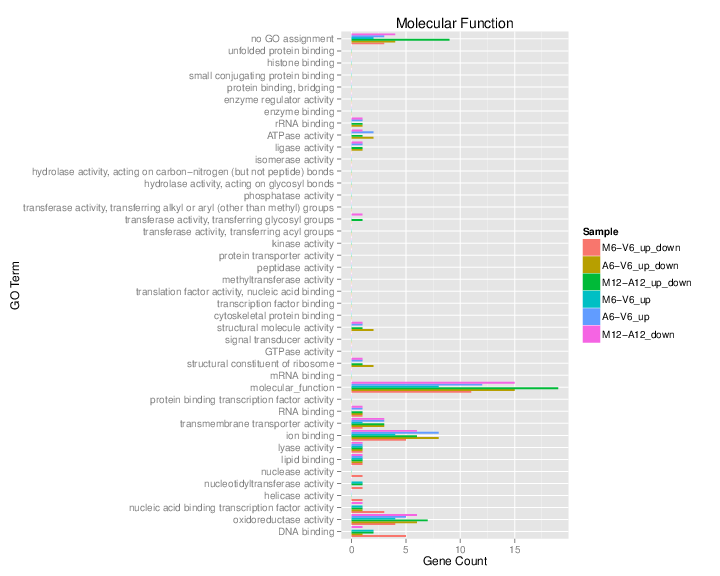

### Batch GO term enrichment analysis

Apply the enrichment analysis to the DEG sets obtained in the above differential expression analysis. Note that in the following example the _FDR_ filter is set to an unreasonably high value, simply because of the small size of the toy data set used in this vignette. Batch enrichment analysis of many gene sets is performed with the _`GOCluster_Report`_ function. When _`method="all"`_, it returns all GO terms passing the p-value cutoff specified under the _`cutoff`_ argument. When _`method="slim"`_, it returns only the GO terms specified under the _`myslimv`_ argument. The given example shows how one can obtain such a GO slim vector from BioMart for a specific organism.

```{r go_enrichment, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

load("data/GO/catdb.RData")

DEG_list <- filterDEGs(degDF=edgeDF, filter=c(Fold=2, FDR=50), plot=FALSE)

up_down <- DEG_list$UporDown; names(up_down) <- paste(names(up_down), "_up_down", sep="")

up <- DEG_list$Up; names(up) <- paste(names(up), "_up", sep="")

down <- DEG_list$Down; names(down) <- paste(names(down), "_down", sep="")

DEGlist <- c(up_down, up, down)

DEGlist <- DEGlist[sapply(DEGlist, length) > 0]

BatchResult <- GOCluster_Report(catdb=catdb, setlist=DEGlist, method="all",

id_type="gene", CLSZ=2, cutoff=0.9,

gocats=c("MF", "BP", "CC"), recordSpecGO=NULL)

library("biomaRt")

m <- useMart("plants_mart", dataset="athaliana_eg_gene", host="https://plants.ensembl.org")

goslimvec <- as.character(getBM(attributes=c("goslim_goa_accession"), mart=m)[,1])

BatchResultslim <- GOCluster_Report(catdb=catdb, setlist=DEGlist, method="slim",

id_type="gene", myslimv=goslimvec, CLSZ=10,

cutoff=0.01, gocats=c("MF", "BP", "CC"),

recordSpecGO=NULL)

gos <- BatchResultslim[grep("M6-V6_up_down", BatchResultslim$CLID), ]

gos <- BatchResultslim

pdf("GOslimbarplotMF.pdf", height=8, width=10); goBarplot(gos, gocat="MF"); dev.off()

goBarplot(gos, gocat="BP")

goBarplot(gos, gocat="CC")

},

step_name = "go_enrichment",

dependency = "get_go_biomart"

)

```
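GO term over-representation is commonly assessed with the hypergeometric distribution. The sketch below shows that calculation for a single GO term and a single gene set using base R. It is meant as background for interpreting the p-values, under the assumption that a test of this kind is applied; it is not a reproduction of the internals of _`GOCluster_Report`_, and all numbers are invented.

```r
# Invented numbers for a single GO term and a single DEG set
N <- 20000  # annotated genes in the genome (universe)
m <- 200    # genes in the universe annotated with the GO term
n <- 150    # genes in the DEG set
k <- 8      # genes in the DEG set annotated with the GO term

# P(X >= k) under the hypergeometric distribution
pval <- phyper(k - 1, m, N - m, n, lower.tail = FALSE)
pval

expected <- n * m / N   # count expected by chance
expected
```

Observing 8 annotated genes where 1.5 are expected by chance yields a small p-value. The _`cutoff`_ argument then decides whether such a term is reported.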

### Plot batch GO term results

The _`data.frame`_ generated by _`GOCluster_Report`_ can be plotted with the _`goBarplot`_ function. Because of the variable size of the sample sets, it may not always be desirable to show the results from different DEG sets in the same bar plot. Plotting single sample sets is achieved by subsetting the input data frame, as shown by the `grep`-based subsetting of `BatchResultslim` in the example above.

**Figure 5:** GO Slim Barplot for MF Ontology.

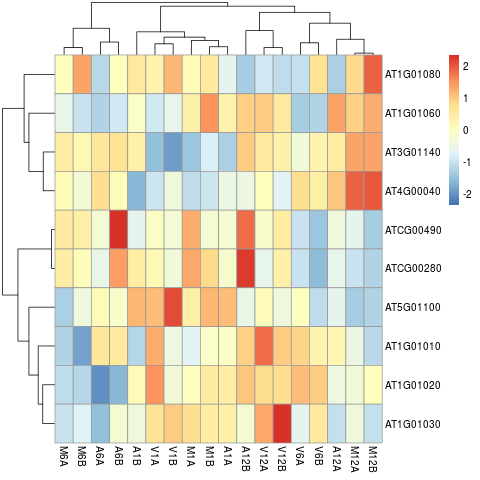

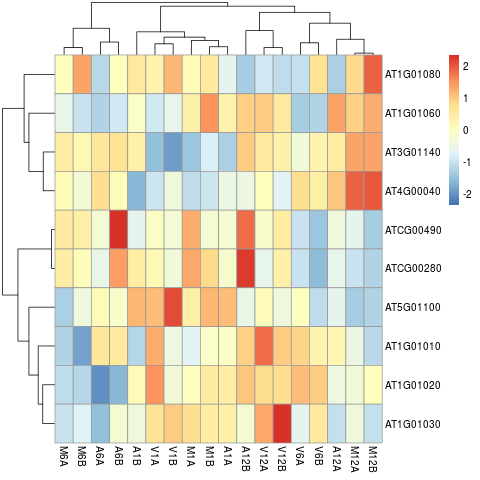

## Clustering and heat maps

The following example performs hierarchical clustering on the _`rlog`_ transformed expression matrix subsetted by the DEGs identified in the
above differential expression analysis. It uses a Pearson correlation-based distance measure and complete linkage for cluster joining.

```{r hierarchical_clustering, message=FALSE, eval=TRUE, spr='r'}

appendStep(sal) <- LineWise({

library(pheatmap)

geneids <- unique(as.character(unlist(DEG_list[[1]])))

y <- assay(rlog(dds))[geneids, ]

pdf("heatmap1.pdf")

pheatmap(y, scale="row", clustering_distance_rows="correlation",

clustering_distance_cols="correlation")

dev.off()

},

step_name = "hierarchical_clustering",

dependency = c("sample_tree_rlog", "edger")

)

```

**Figure 6:** Heat map with hierarchical clustering dendrograms of DEGs.
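The distance measure and linkage named above can be written out explicitly in base R. The following sketch assumes that the correlation-based distance is defined as one minus the Pearson correlation between gene profiles; the expression matrix is invented and much smaller than a real DEG matrix.

```r
# Invented expression matrix: 6 genes x 4 samples
y <- rbind(up1 = c(1, 2, 8, 9), up2 = c(2, 3, 9, 10), up3 = c(0, 1, 7, 9),
           dn1 = c(9, 8, 2, 1), dn2 = c(10, 9, 3, 2), dn3 = c(8, 8, 1, 0))
colnames(y) <- c("C1", "C2", "T1", "T2")

# cor() correlates columns, so genes are moved to columns with t()
row_dist <- as.dist(1 - cor(t(y), method = "pearson"))
hr <- hclust(row_dist, method = "complete")   # complete linkage
gene_clusters <- cutree(hr, k = 2)
gene_clusters
```

Genes with similar profile shapes cluster together regardless of their absolute expression level, which is why a correlation-based distance is often preferred over Euclidean distance for heat maps of DEGs.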

## Visualize workflow

_`systemPipeR`_ workflow instances can be visualized with the `plotWF` function.

This function plots the selected workflow instance and displays the following information:

- Workflow structure (dependency graphs between different steps);

- Workflow step status, *e.g.* `Success`, `Error`, `Pending`, `Warnings`;

- Sample status and statistics;

- Workflow timing: running duration time.

If no argument is provided, the basic plot will automatically detect width, height, layout, plot method, branches, etc.

```{r}

plotWF(sal, show_legend = TRUE, width = "80%")

```
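The dependency structure shown in the plot also determines a valid execution order. The base R sketch below derives such an order for a subset of the steps defined above, using the dependencies given in their `dependency` arguments. The helper `runOrder` is hypothetical and only illustrates the idea; it is not how _`systemPipeR`_ schedules steps internally.

```r
# Dependencies copied from a subset of the steps defined above
deps <- list(
    load_SPR      = character(0),
    preprocessing = "load_SPR",
    fastq_quality = "preprocessing",
    hisat_index   = "preprocessing",
    hisat_mapping = "hisat_index",
    create_txdb   = "hisat_mapping",
    read_counting = "create_txdb",
    edger         = "read_counting",
    vennplot      = "edger")

# Hypothetical helper: repeatedly pick steps whose dependencies are all done
runOrder <- function(deps) {
    done <- character(0)
    while (length(done) < length(deps)) {
        satisfied <- vapply(deps, function(d) all(d %in% done), logical(1))
        ready <- names(deps)[!(names(deps) %in% done) & satisfied]
        if (length(ready) == 0) stop("circular or missing dependency")
        done <- c(done, ready)
    }
    done
}
ord <- runOrder(deps)
ord
```

Steps that share the same dependency, such as `fastq_quality` and `hisat_index`, become ready in the same round, which corresponds to branches in the workflow graph.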

## Running the workflow

To run the workflow, the `runWF` function executes all the command lines stored in the workflow container.

```{r, eval=FALSE}

sal <- runWF(sal)

```

#### Interactive job submissions on a single machine

To simplify the short read alignment execution for the user, the command line
can be run with the *`runCommandline`* function.
The execution will be on a single machine without submitting to a queuing system
of a computer cluster. This way, the input FASTQ files will be processed sequentially.
By default, *`runCommandline`* auto-detects SAM file outputs and converts them
to sorted and indexed BAM files, internally using the `Rsamtools` package
[@Rsamtools]. In addition, *`runCommandline`* allows the user to create a dedicated
results folder for each workflow and a sub-folder for each sample
defined in the *targets* file. This includes all the output and log files for each
step. When these options are used, the output location will be updated by default
and can be assigned to the same object.

If available, multiple CPU cores can be used for processing each file. The number
of CPU cores (here 4) to use for each process is defined in the _`*.yml`_ file.
With _`yamlinput(args)['thread']`_ one can return this value from the _`SYSargs2`_ object.

#### Parallelization on clusters

Alternatively, the computation can be greatly accelerated by processing many files
in parallel using several compute nodes of a cluster, where a scheduling/queuing
system is used for load balancing. For this, the *`clusterRun`* function submits
the computing requests to the scheduler using the run specifications
defined by *`runCommandline`*.

To avoid over-subscription of CPU cores on the compute nodes, the value from
_`yamlinput(args)['thread']`_ is passed on to the submission command, here _`ncpus`_
in the _`resources`_ list object. The number of independent parallel cluster
processes is defined under the _`Njobs`_ argument. For example, a submission with
_`Njobs`_ set to 18 runs 18 processes in parallel, each using 4 CPU cores. If the resources available
on a cluster allow running all 18 processes at the same time, then this
submission will utilize 72 CPU cores in total. Note that *`clusterRun`* can be used
with most queueing systems as it is based on utilities from the _`batchtools`_
package, which supports the use of template files (_`*.tmpl`_) for defining the
run parameters of different schedulers. To use it, one needs to
have both a conf file (see _`.batchtools.conf.R`_ samples [here](https://mllg.github.io/batchtools/))
and a template file (see _`*.tmpl`_ samples [here](https://github.com/mllg/batchtools/tree/master/inst/templates))
for the queueing system available on the cluster. Sample
conf and template files for the Slurm scheduler are provided by this package.
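The core arithmetic behind these numbers is worth checking before submitting jobs. The following base R sketch uses the values from the text (18 processes with 4 cores each); the list layout and the figure of 40 available cores are assumptions made purely for illustration.

```r
# Values taken from the text; the list layout is only illustrative
resources <- list(ncpus = 4)   # CPU cores requested per process
Njobs <- 18                    # independent cluster processes

total_cores <- Njobs * resources$ncpus
total_cores

# With fewer cores available, only some processes can run at once
available <- 40                # made-up cluster allocation
concurrent <- floor(available / resources$ncpus)
concurrent
```

If fewer cores are available than requested in total, the scheduler typically queues the remaining processes until resources are freed.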

## Access the Previous Version

Please find [here](/sp/spr/steps_oldversion/) the previous version.

```{r quiting, eval=TRUE, echo=FALSE}

knitr::opts_knit$set(root.dir = '../')

unlink("rnaseq", recursive = TRUE)

```

## References