# :bar_chart: Benchmarks

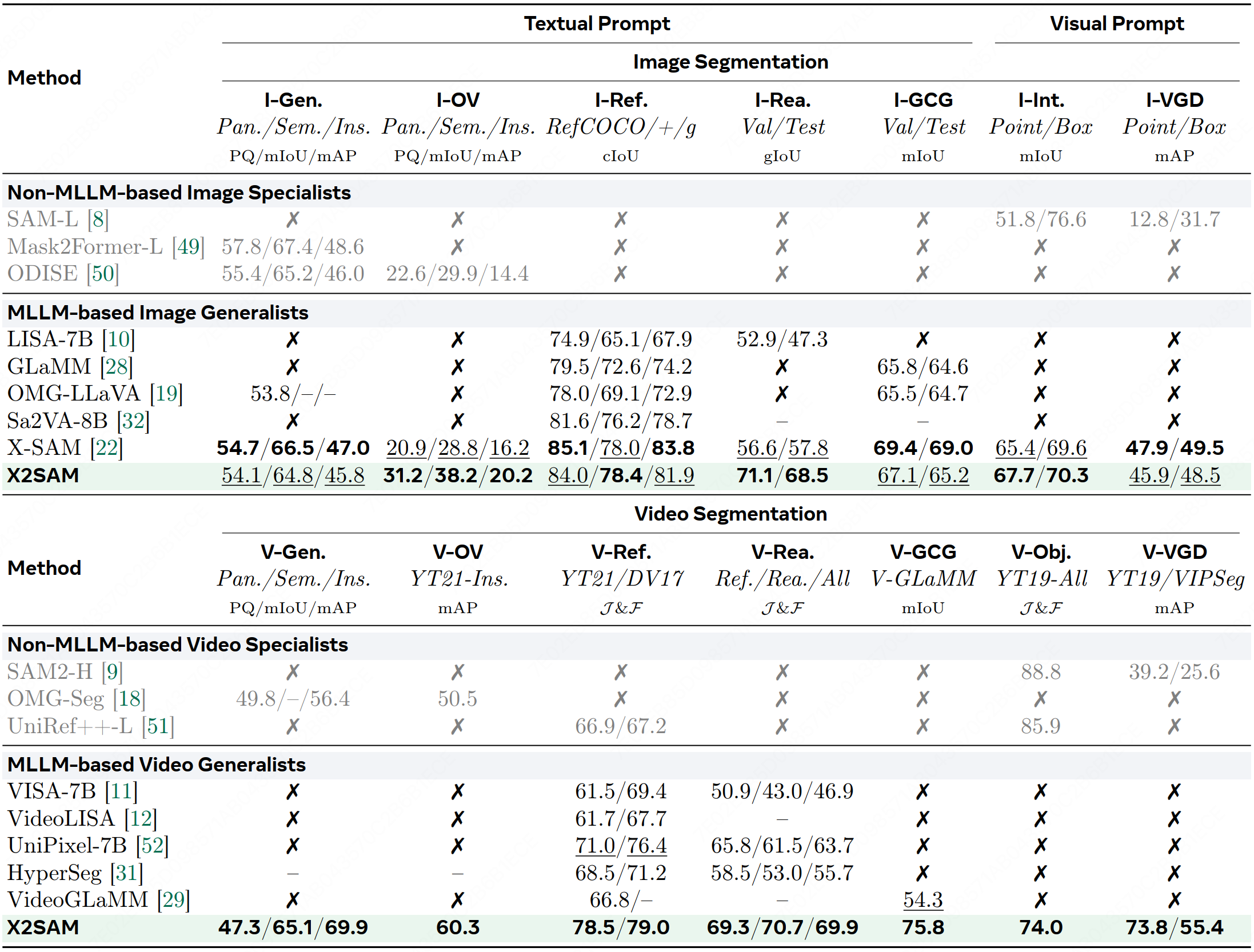

## Overall

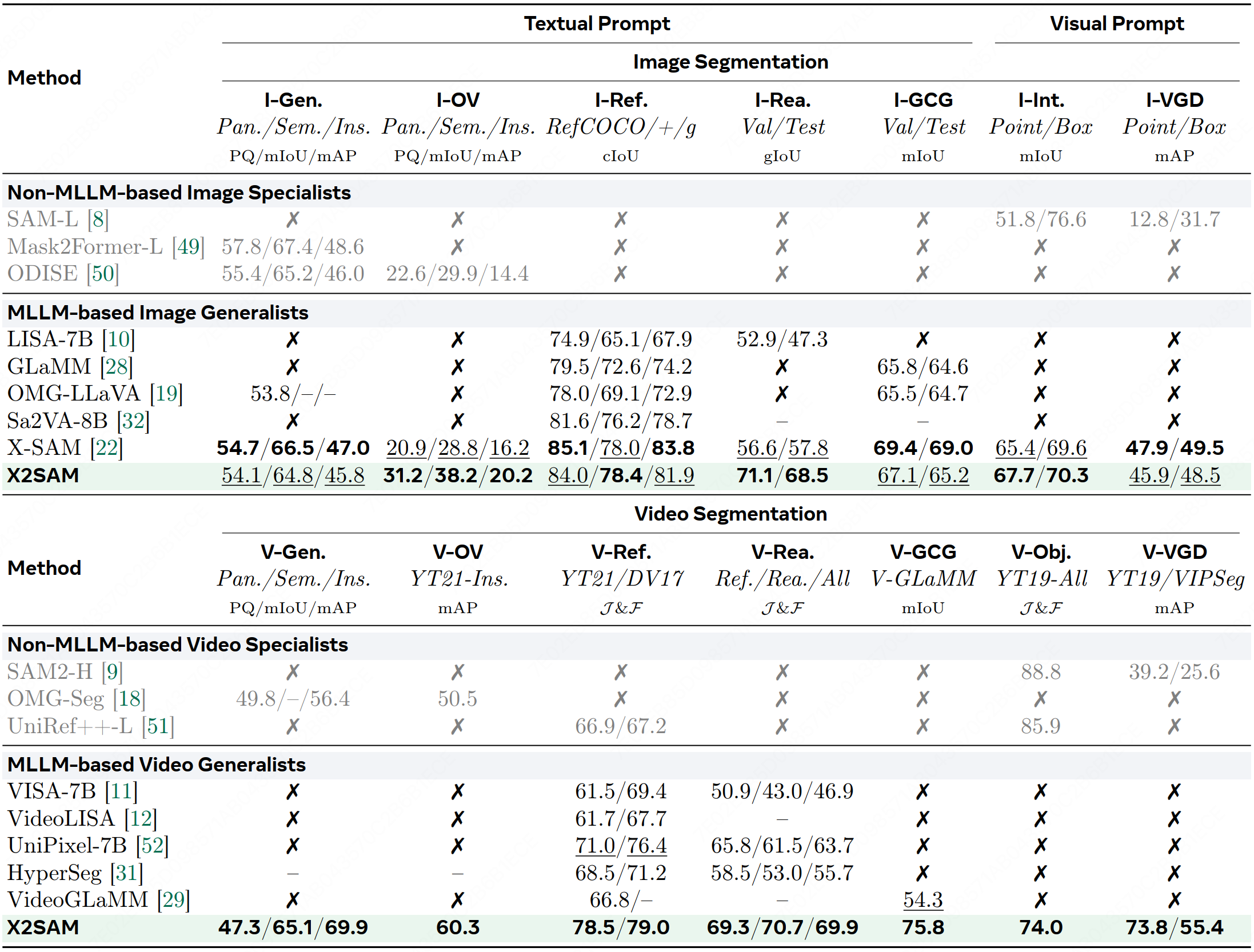

Table 1: Comparison of state-of-the-art segmentation methods across image and video segmentation benchmarks, ranging from non-MLLM-based to MLLM-based, and from specialists to generalists. "x" denotes unsupported. "--" indicates unreported. Best results are in bold, second-best are underlined.

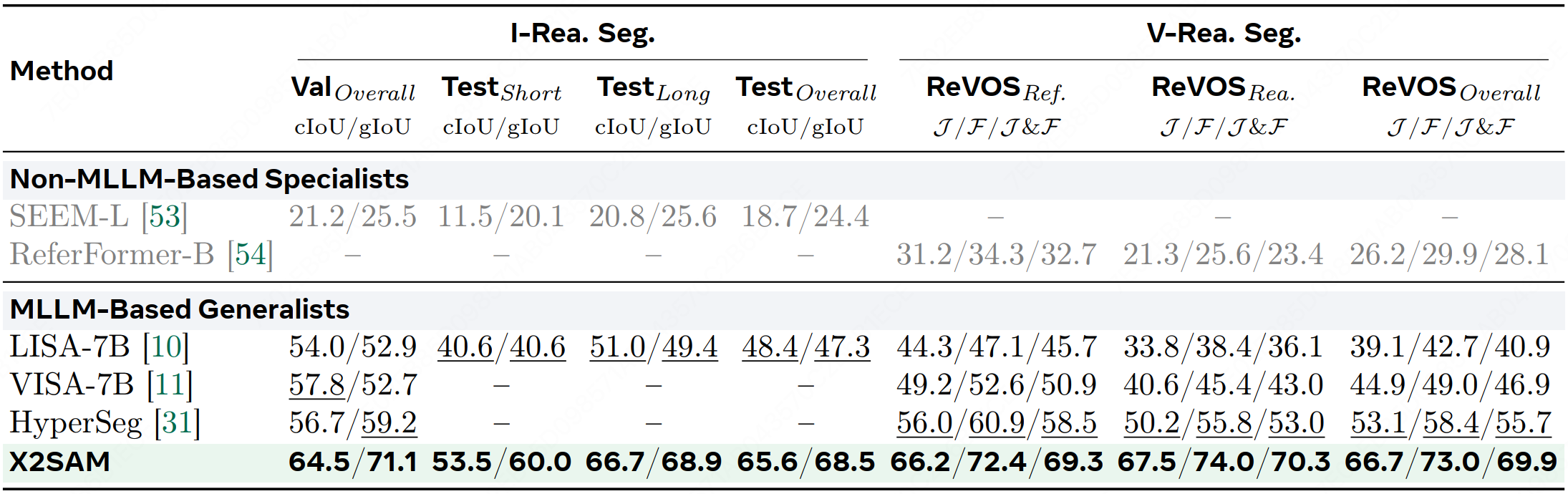

## Reasoning Segmentation

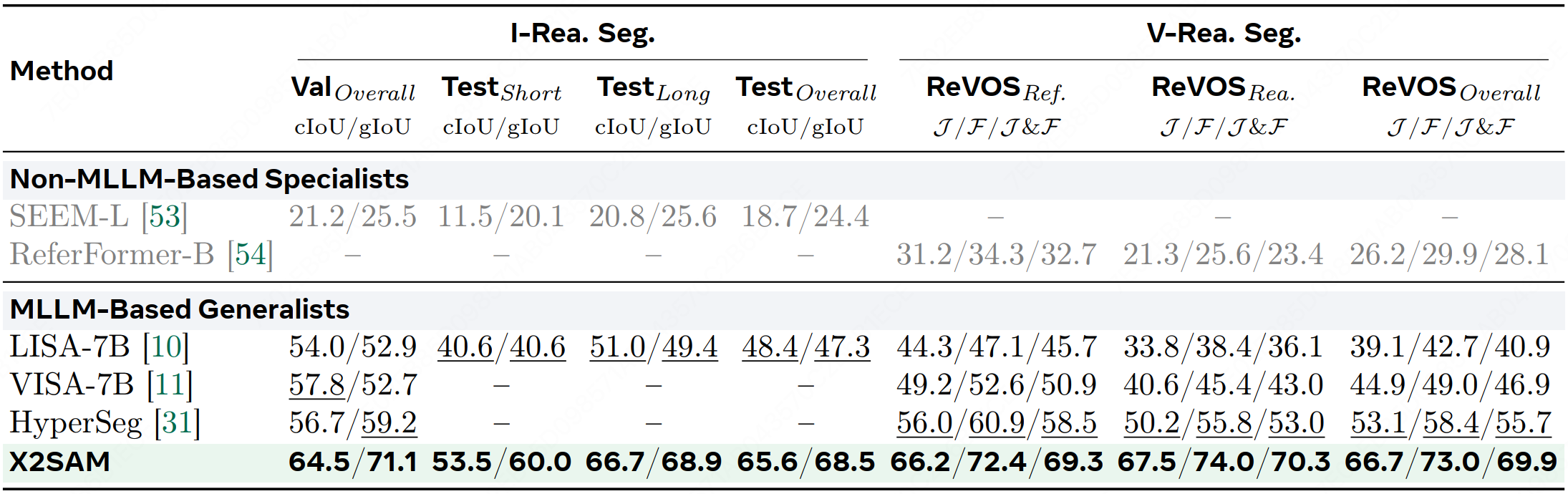

Table 2: Comparison across image and video reasoning segmentation benchmarks.

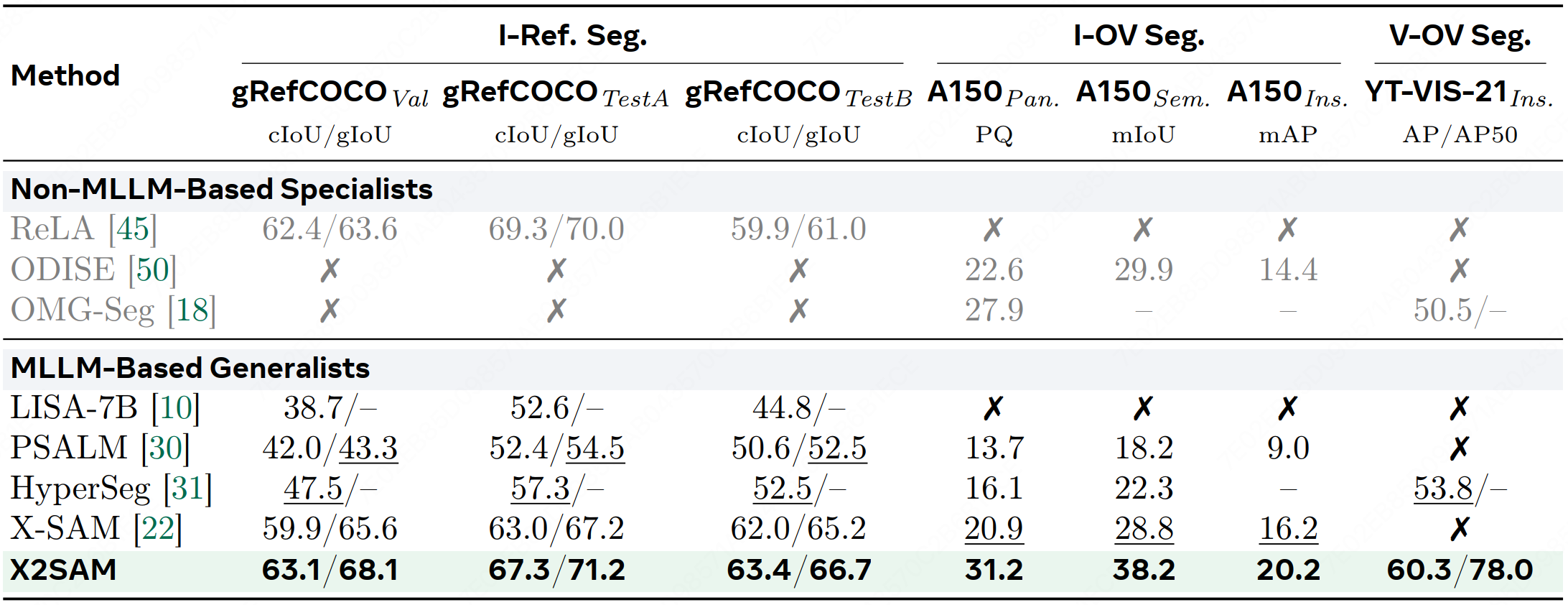

## Out-of-Domain Segmentation

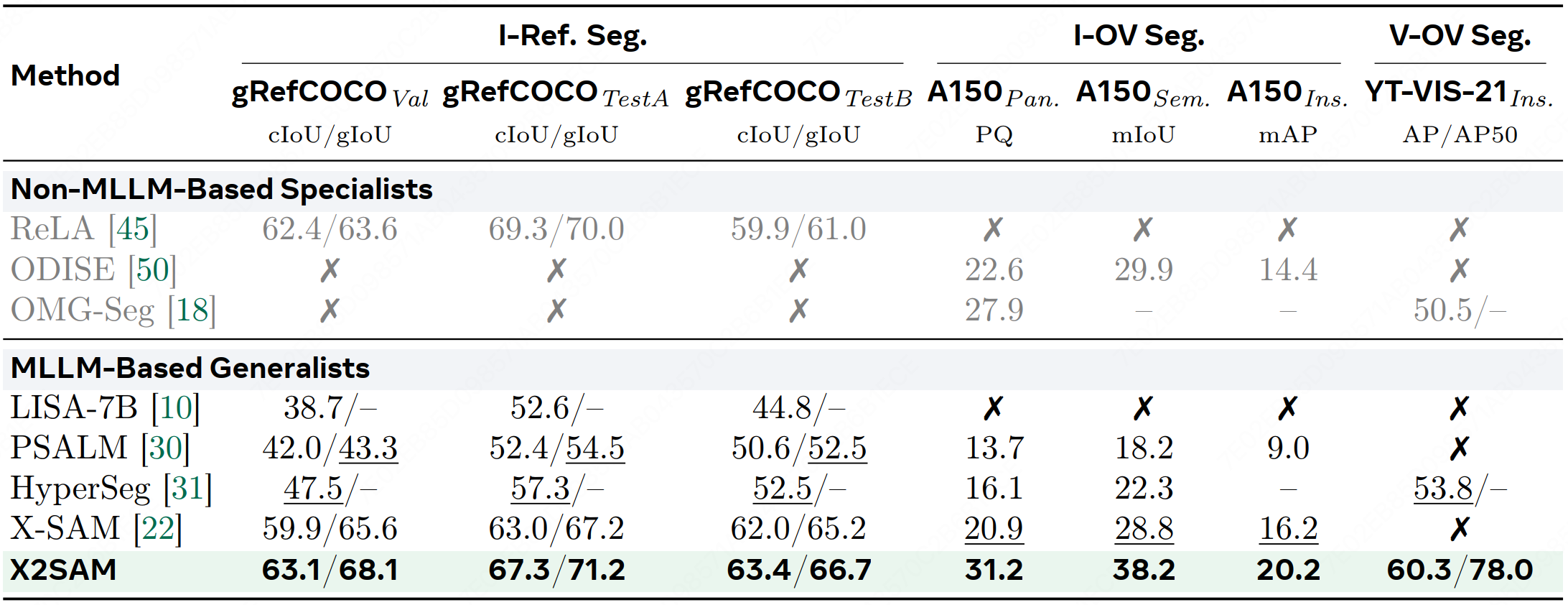

Table 3: Comparison on out-of-domain tasks, including image generalized referring segmentation and image and video open-vocabulary segmentation benchmarks.

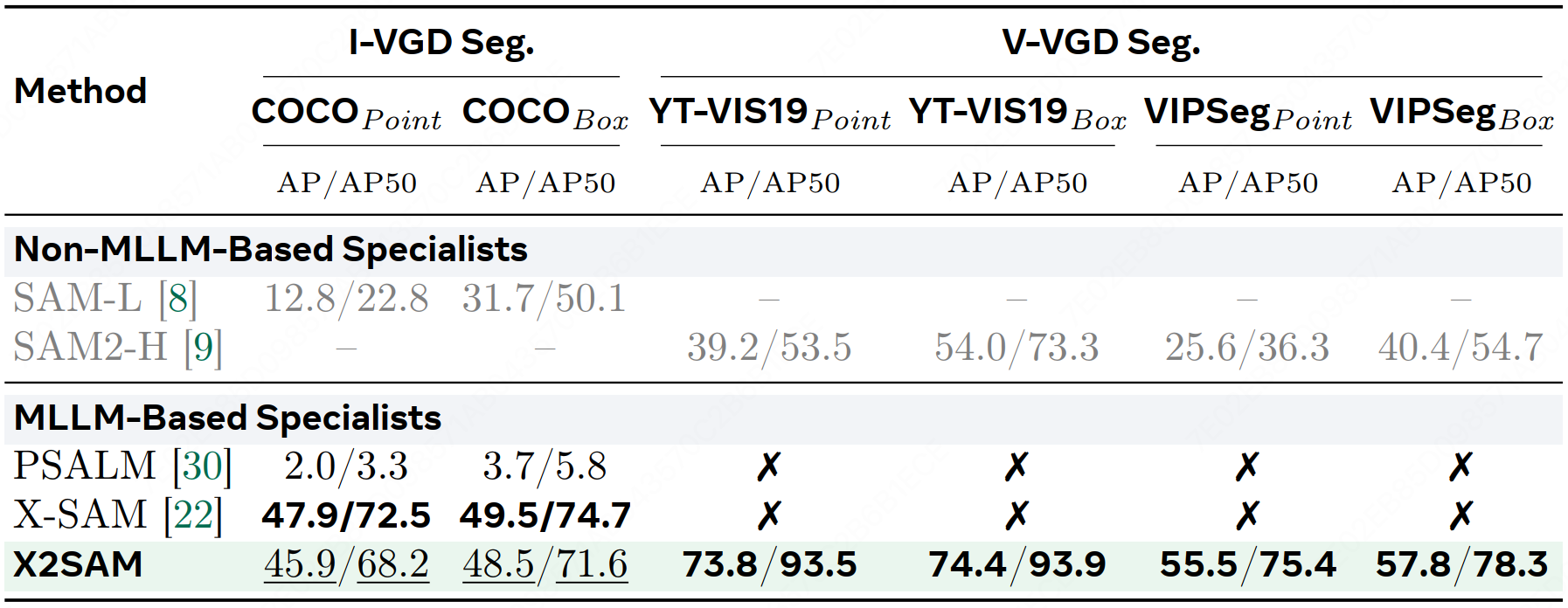

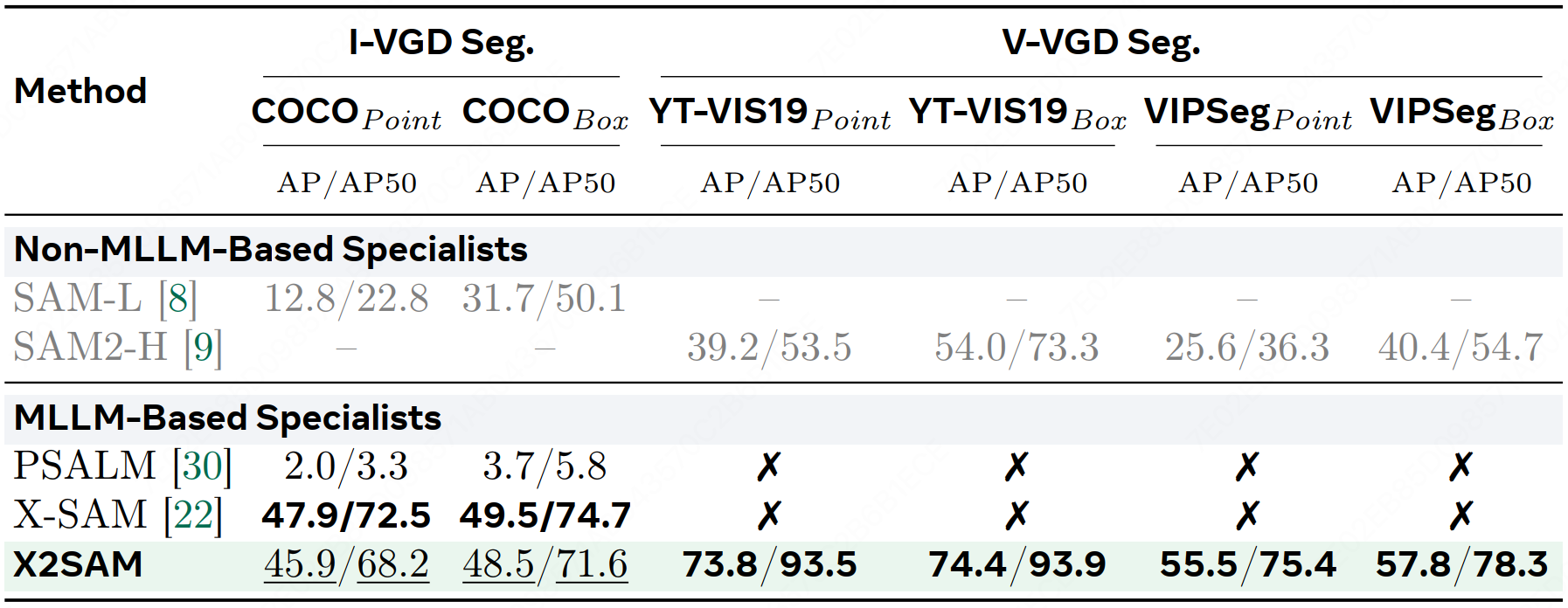

## VGD Segmentation

Table 4: Comparison across image and video visual grounded segmentation benchmarks.

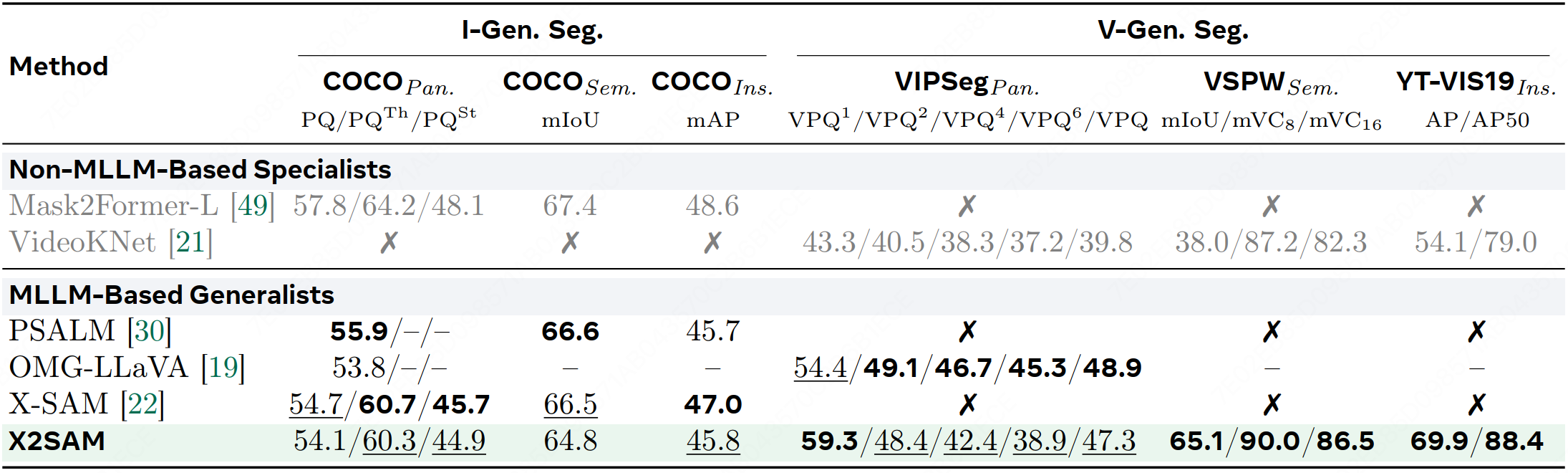

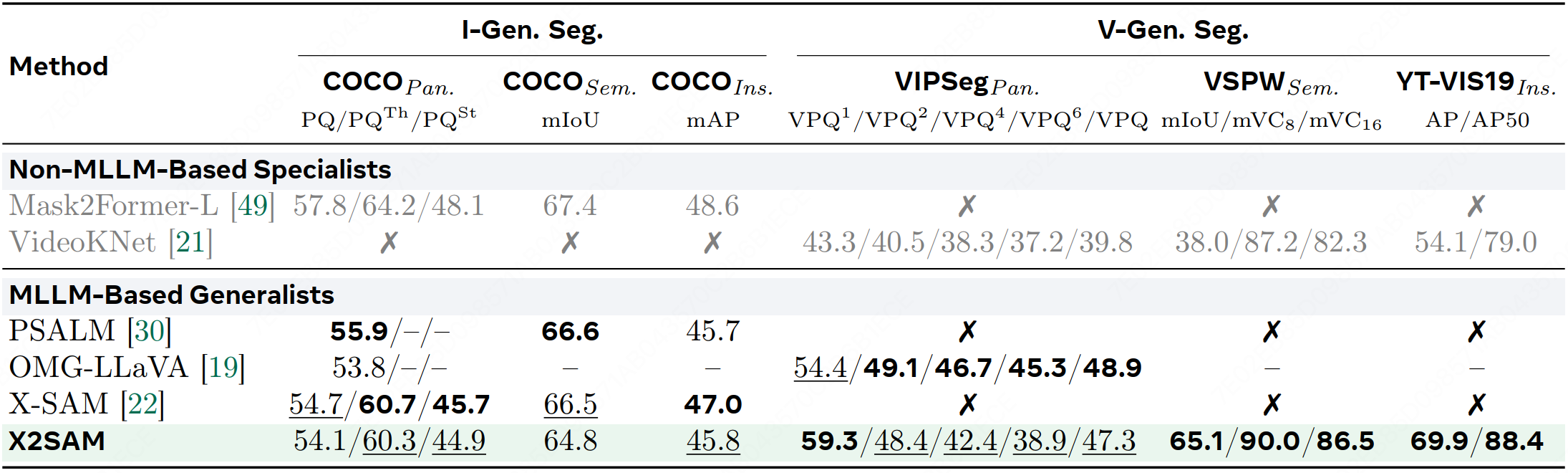

## Generic Segmentation

Table 5: Comparison across image and video generic segmentation benchmarks.

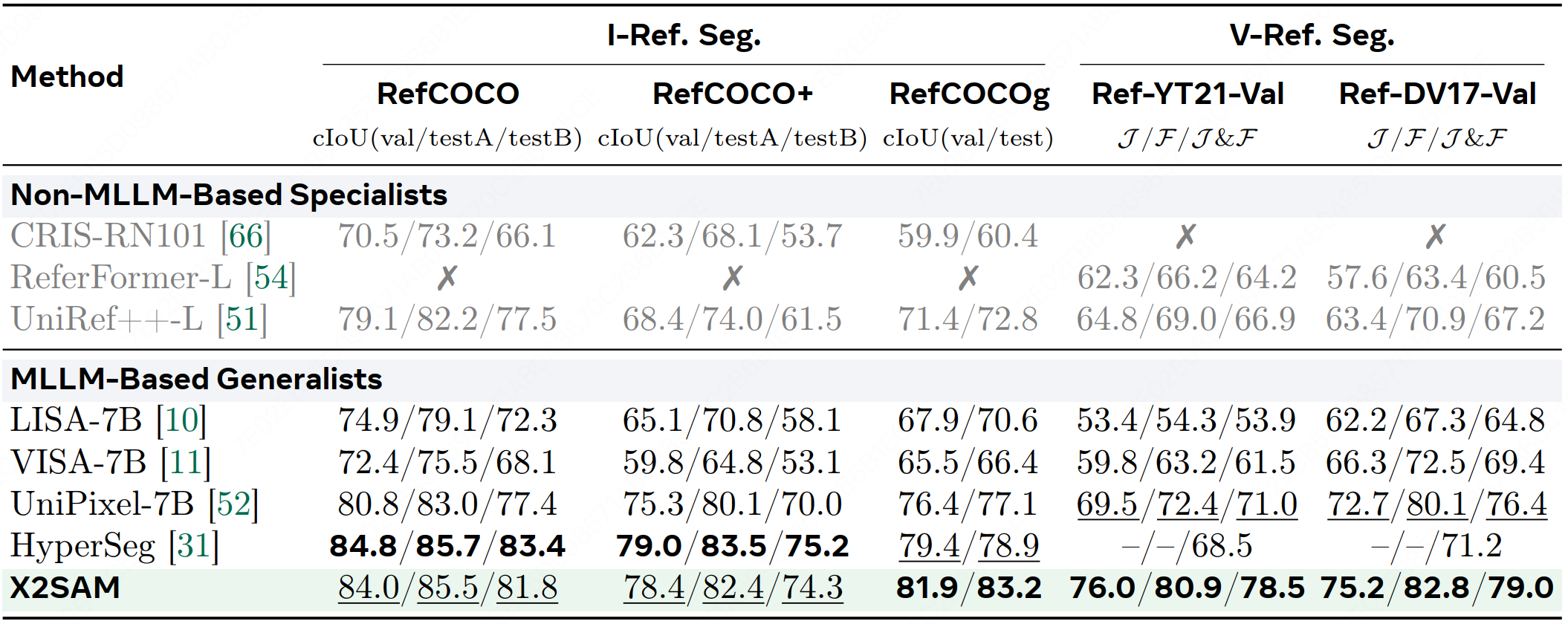

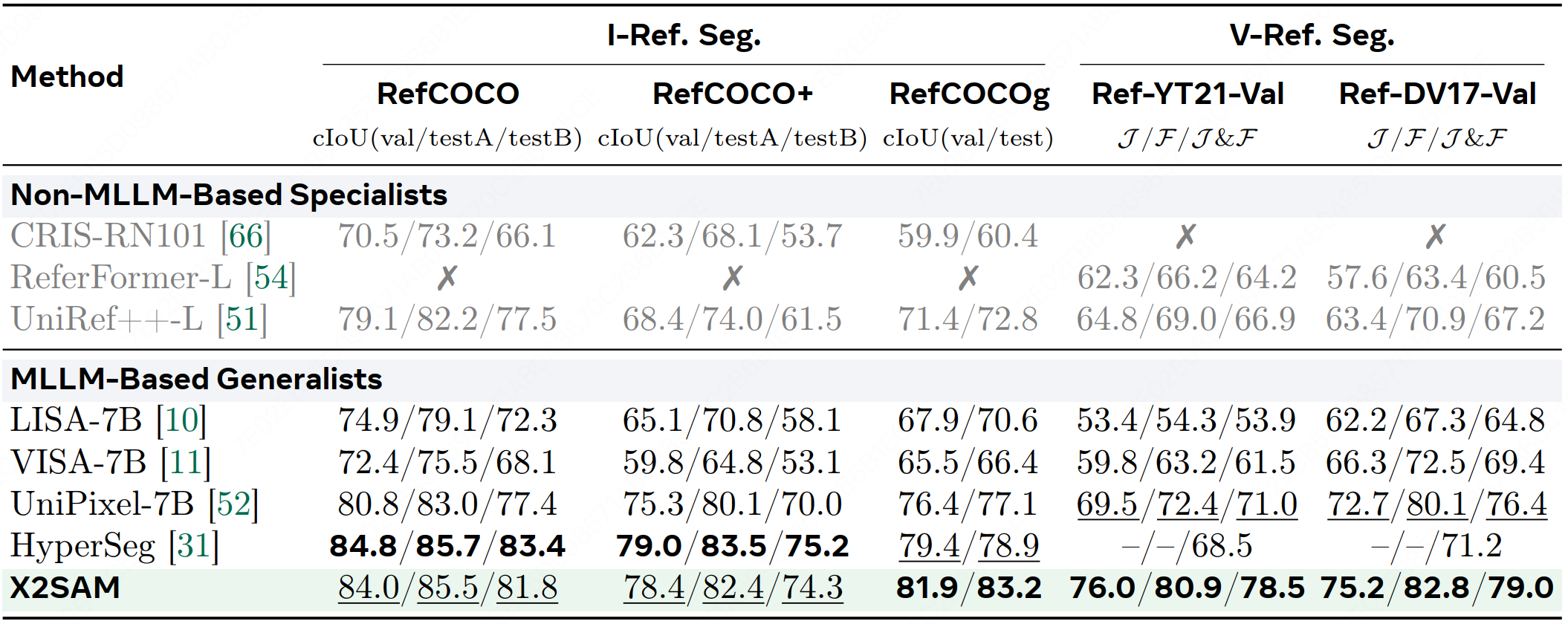

## Referring Segmentation

Table 6: Comparison across image and video referring segmentation benchmarks.

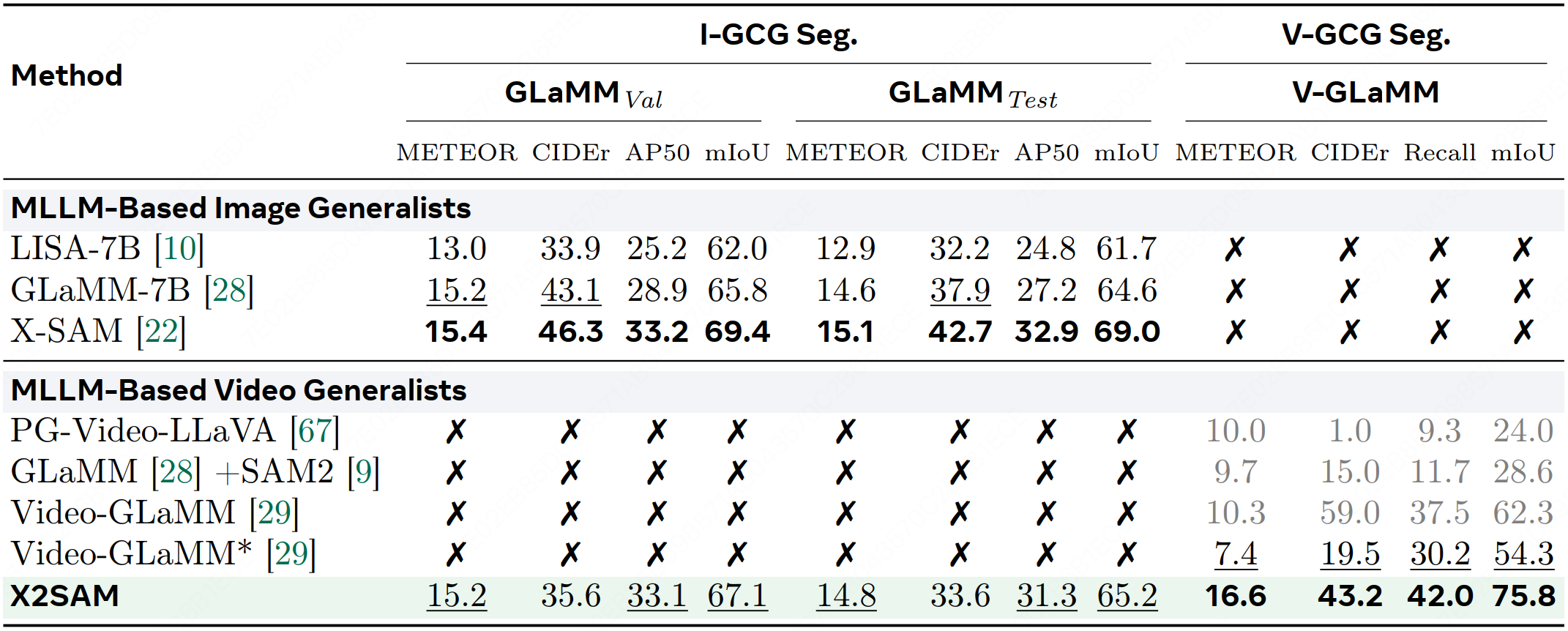

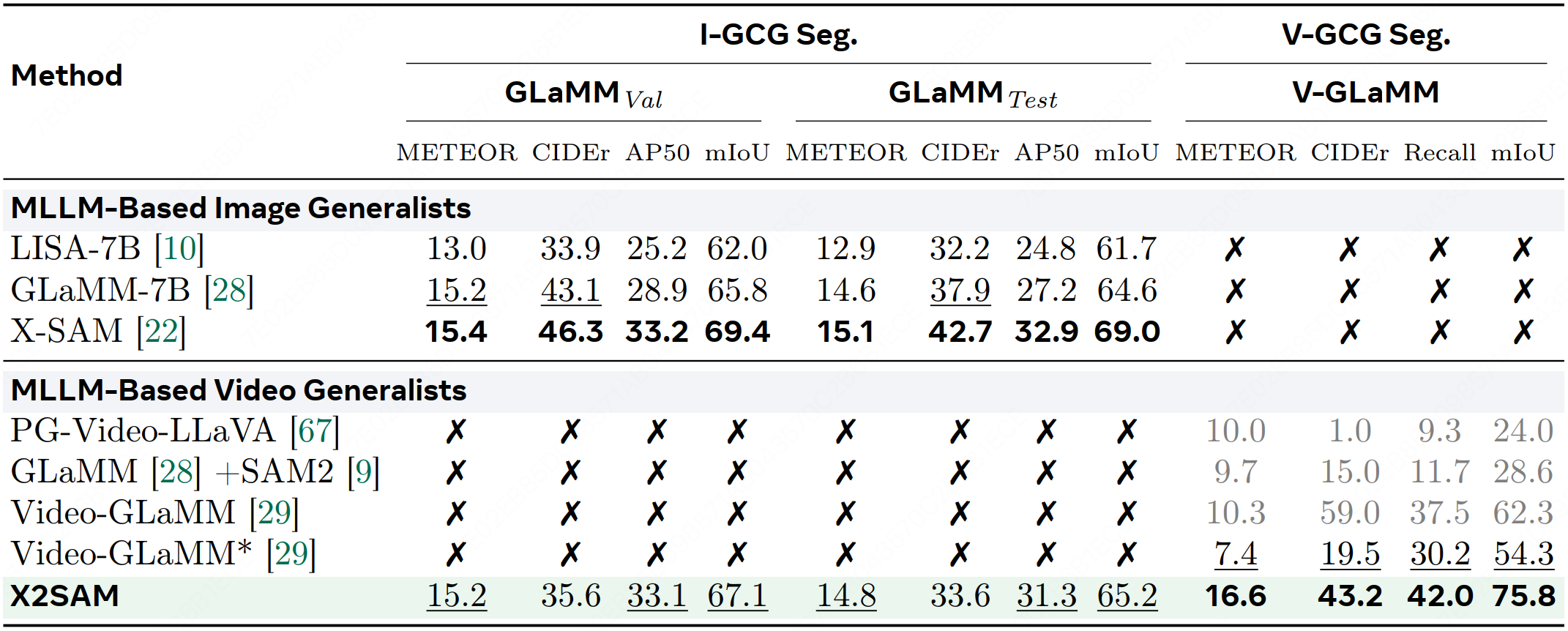

## GCG Segmentation

Table 7: Comparison across image and video grounded conversation generation segmentation benchmarks. Grayed values are those reported in the original paper; \* marks methods re-evaluated in this work.

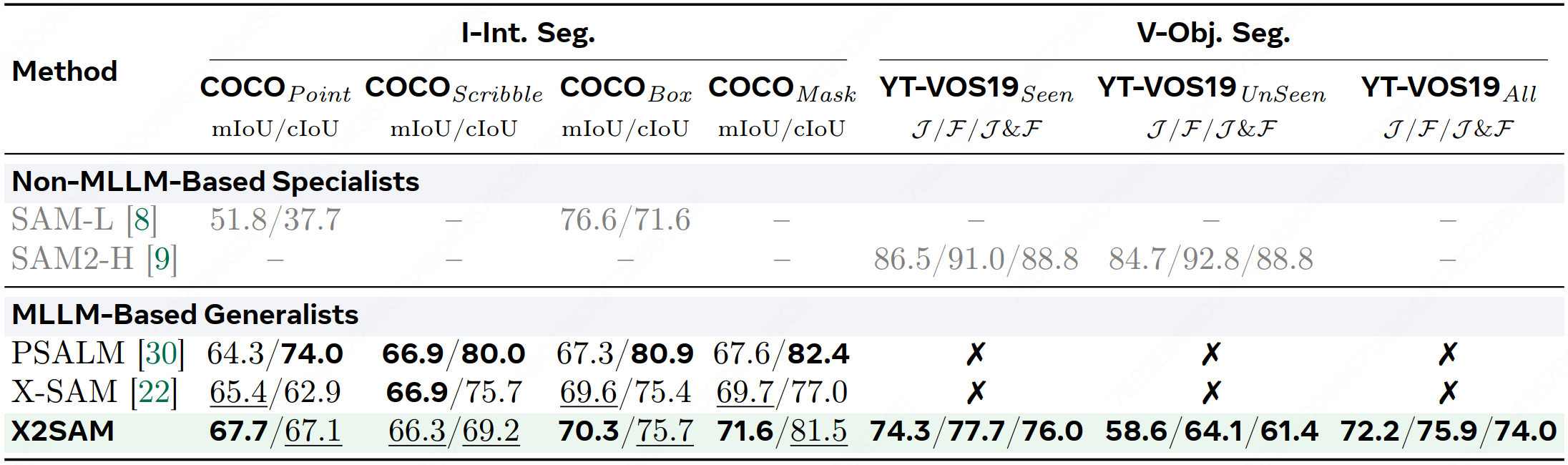

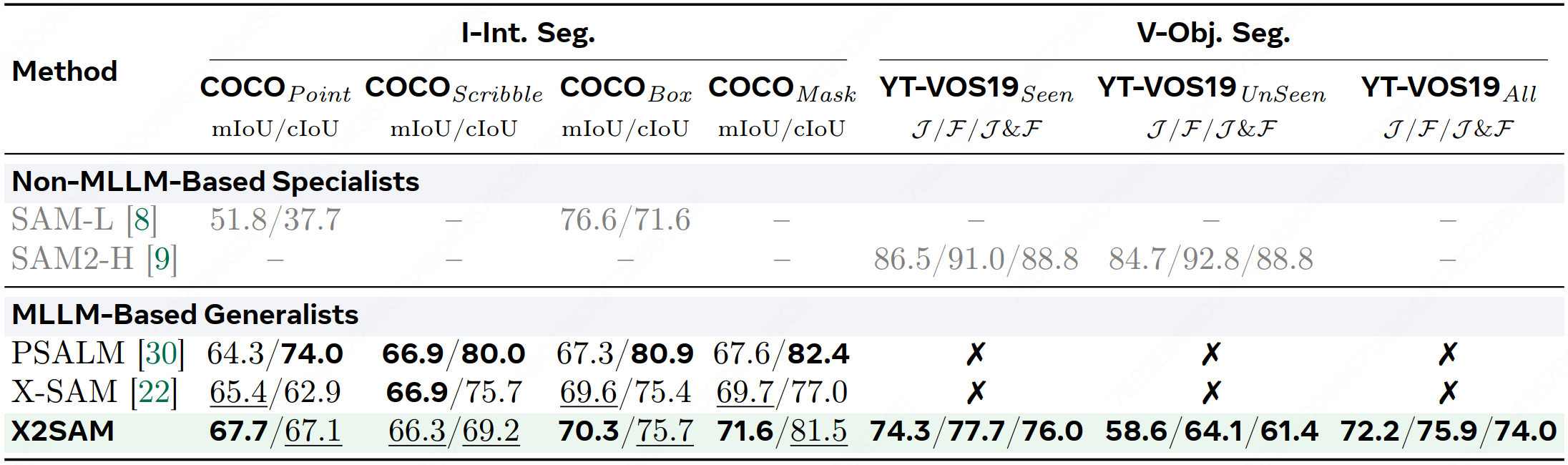

## Object-Centric Segmentation

Table 8: Comparison on object-centric segmentation tasks, including image interactive segmentation (I-Int.) and video object segmentation (V-Obj.) benchmarks.

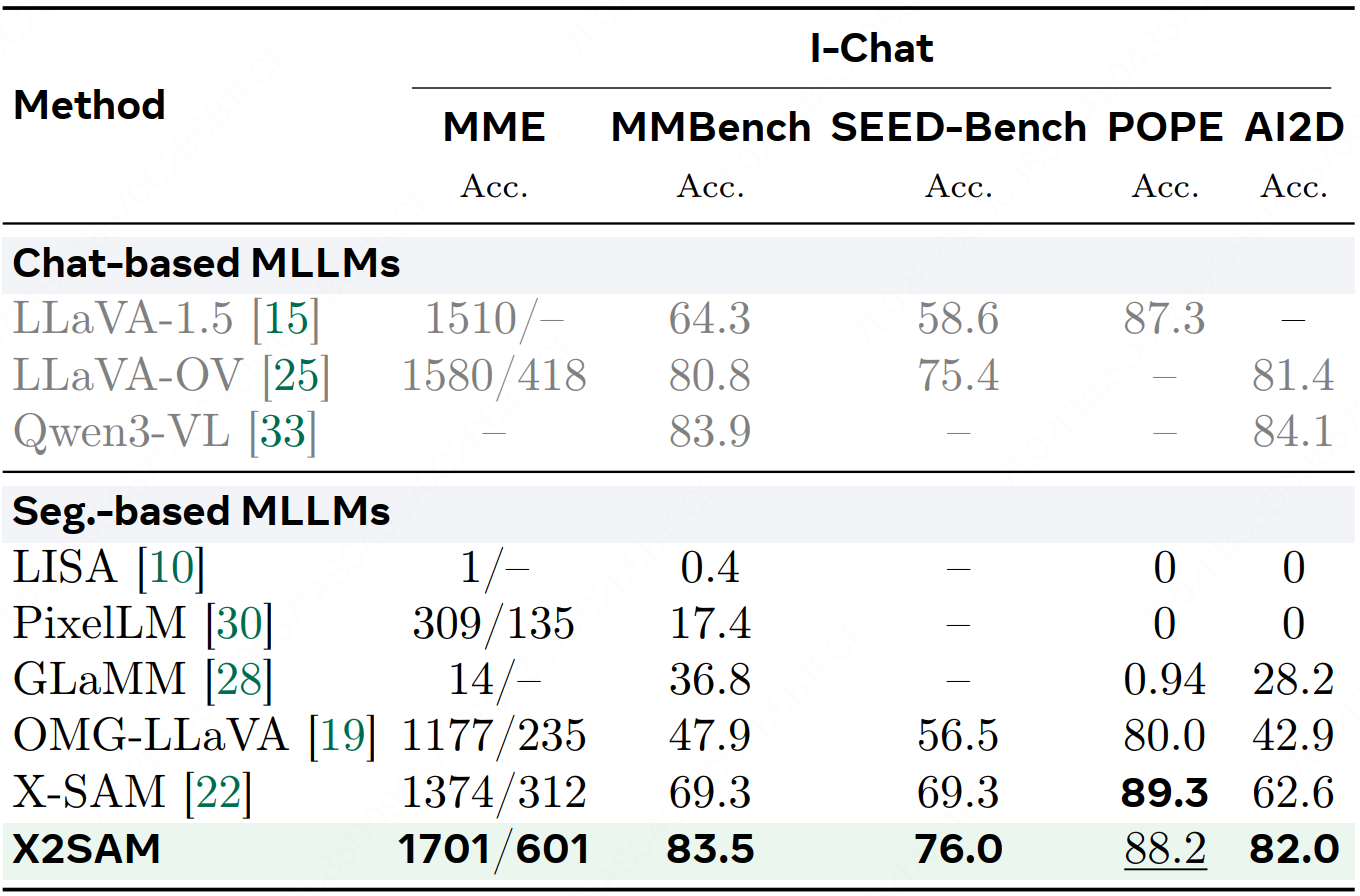

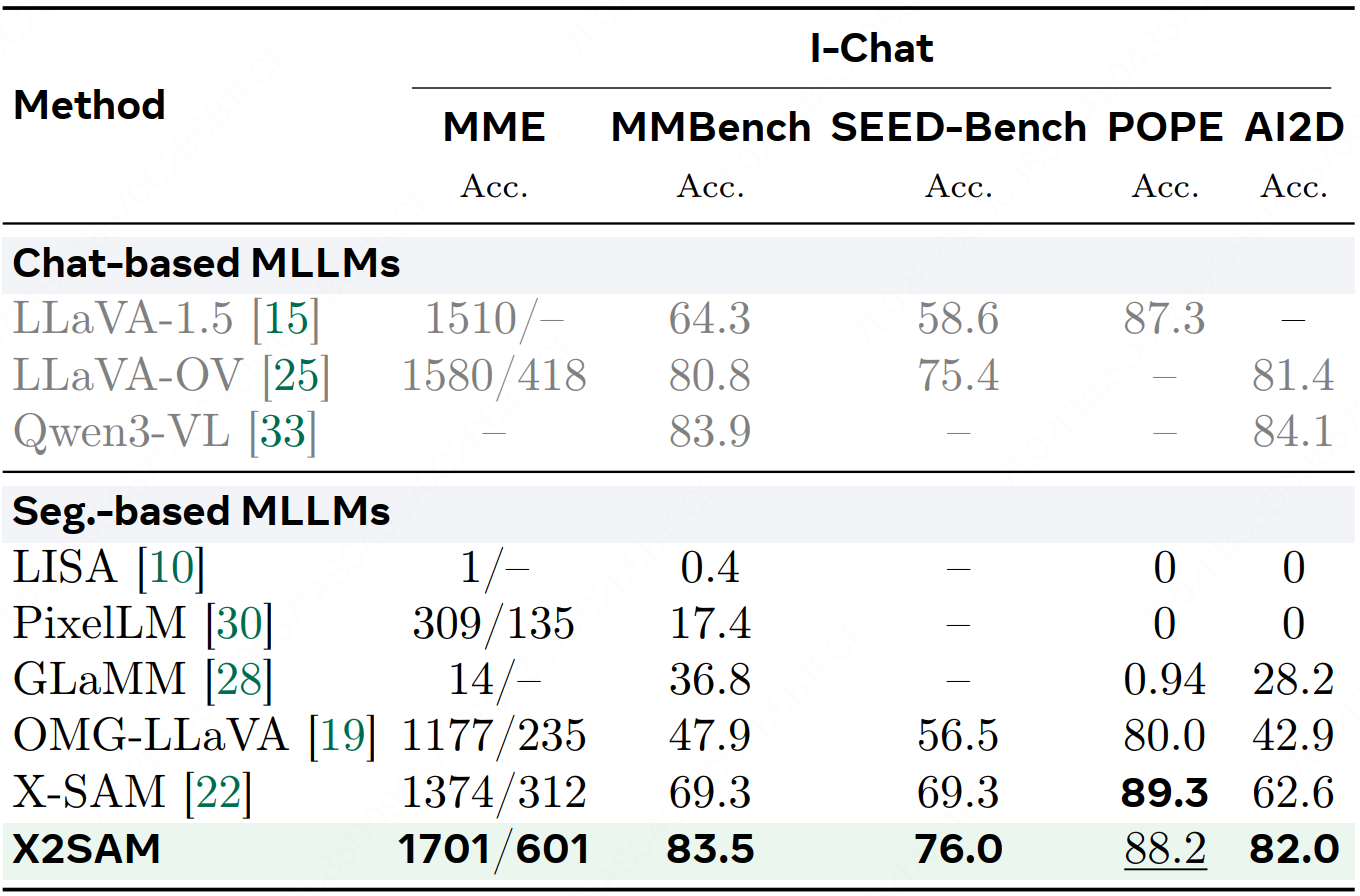

## Image Chat

Table 9: Comparison across image chat benchmarks.

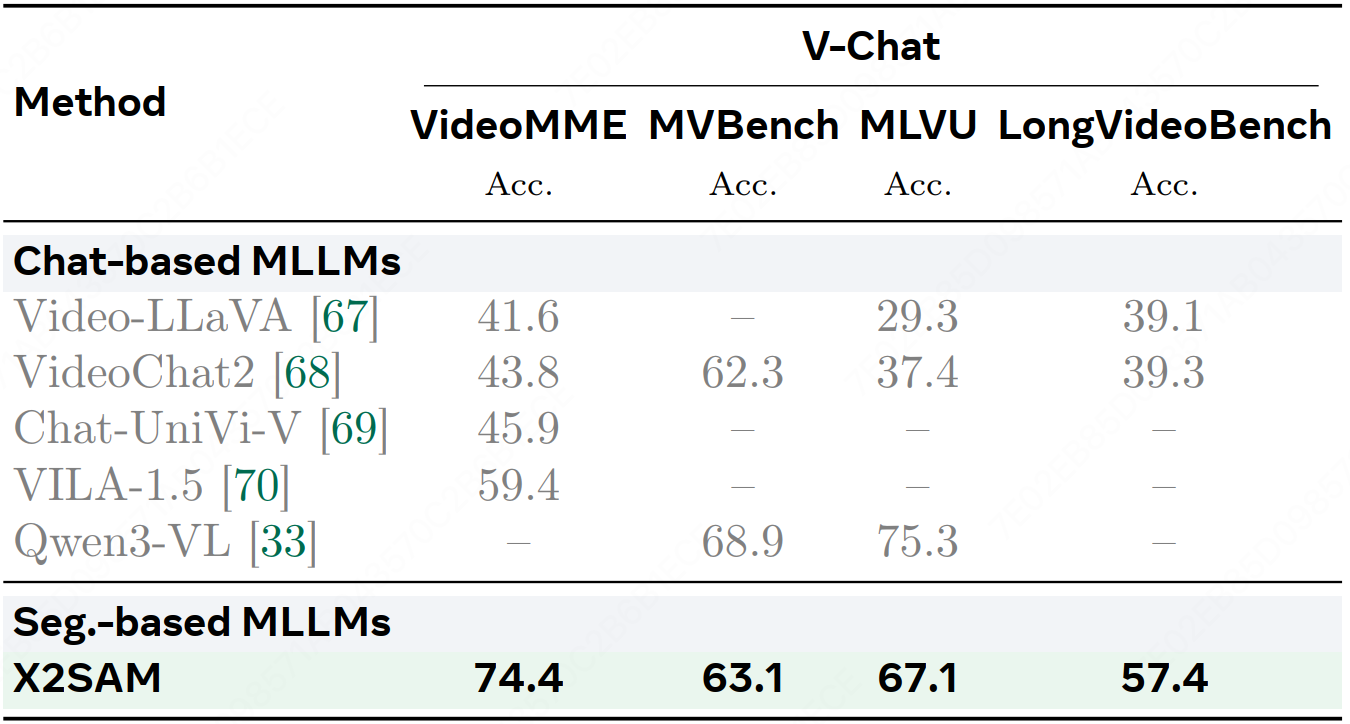

## Video Chat

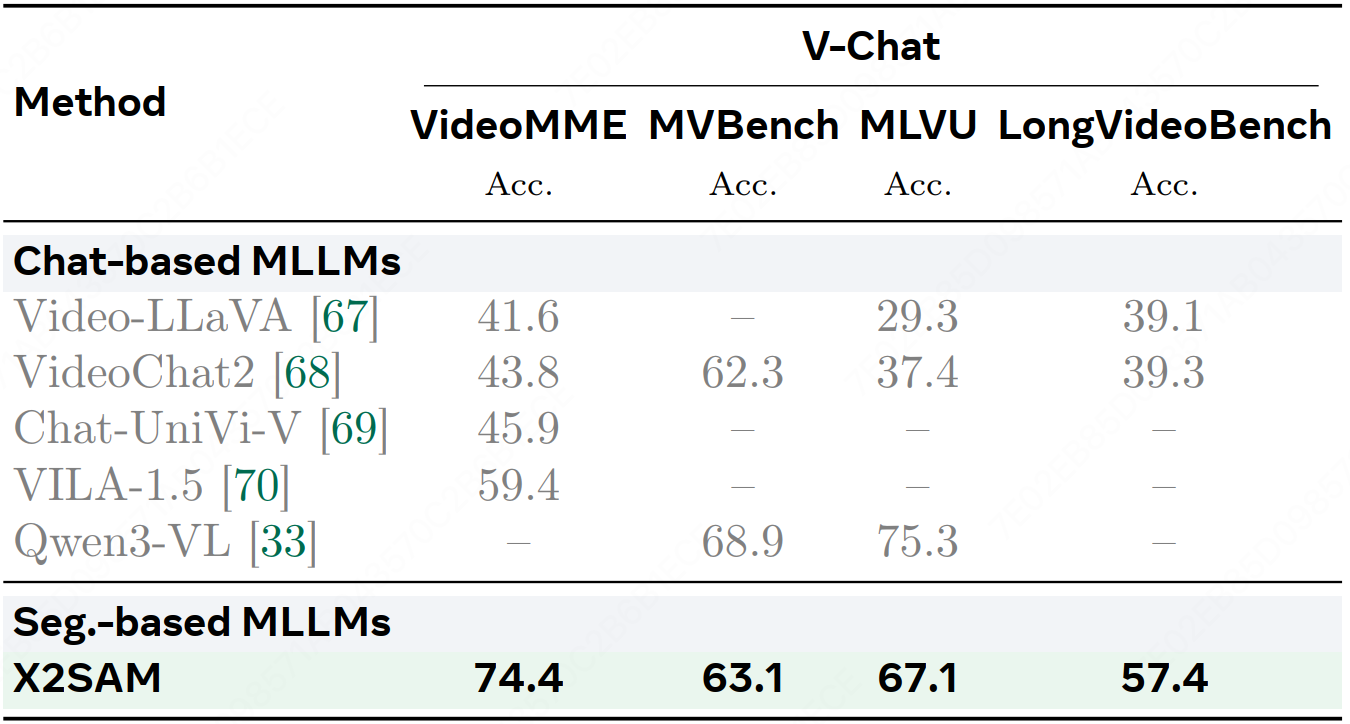

Table 10: Comparison across video chat benchmarks.