oh — OpenHarness & ohmo

🔄 Agent Loop

- Streaming Tool-Call Cycle
- API Retry with Exponential Backoff
- Parallel Tool Execution
- Token Counting & Cost Tracking
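As a rough illustration of the "API Retry with Exponential Backoff" item, here is a minimal sketch of the general technique. It is not OpenHarness's actual implementation: `TransientAPIError`, `call_with_backoff`, and every parameter name below are hypothetical and chosen only for this example.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientAPIError(Exception):
    """Hypothetical stand-in for a retryable provider error (e.g. a rate limit)."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on TransientAPIError with exponentially growing delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientAPIError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # The delay doubles on each attempt and is capped at max_delay.
            delay = min(max_delay, base_delay * (2 ** attempt))
            # Jitter in [0.5x, 1.0x] keeps concurrent clients from retrying in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable so the retry schedule can be exercised in tests without real waiting. Only errors known to be transient should be retried; authentication or validation failures should fail immediately.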

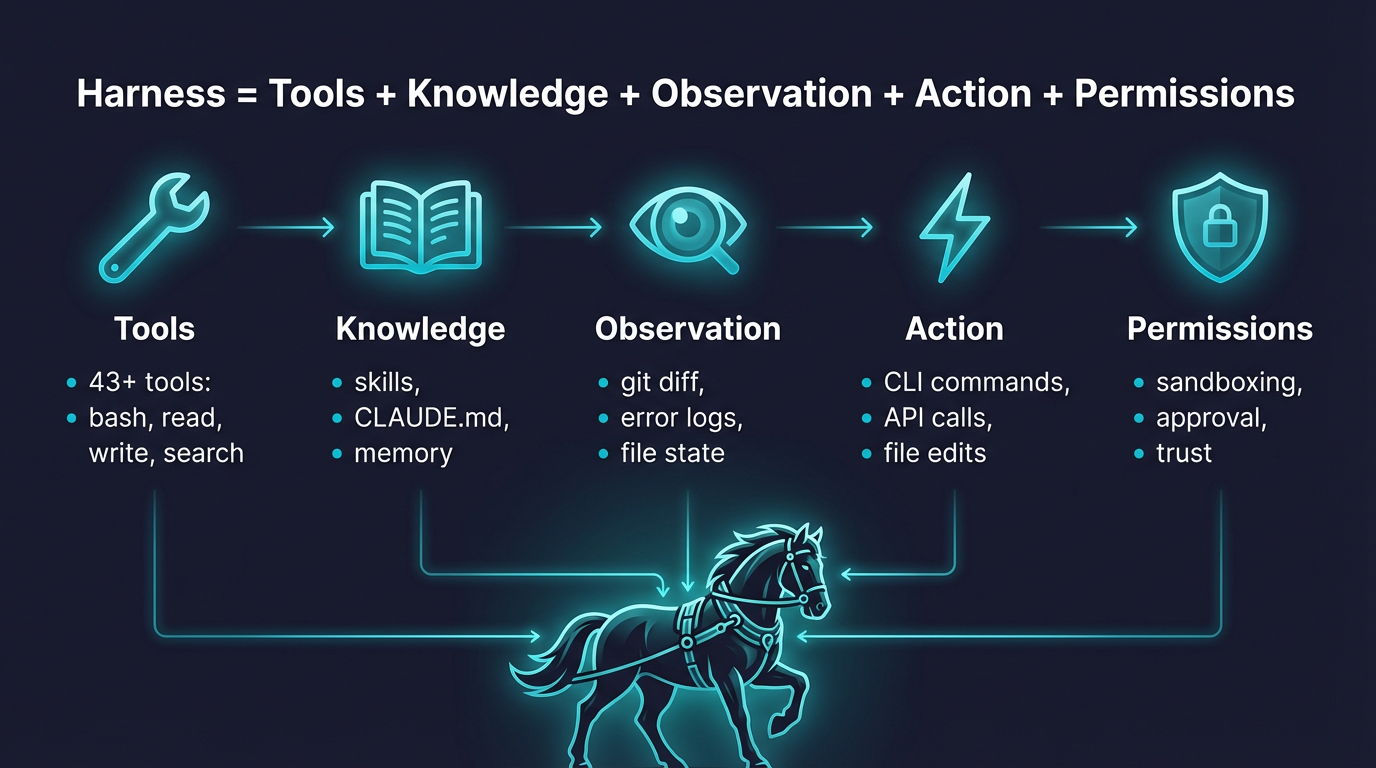

🔧 Harness Toolkit

- 43 Tools (File, Shell, Search, Web, MCP)
- On-Demand Skill Loading (.md)
- Plugin Ecosystem (Skills + Hooks + Agents)
- Compatible with anthropics/skills & plugins

🧠 Context & Memory

- CLAUDE.md Discovery & Injection
- Context Compression (Auto-Compact)
- MEMORY.md Persistent Memory
- Session Resume & History

🛡️ Governance

- Multi-Level Permission Modes
- Path-Level & Command Rules
- PreToolUse / PostToolUse Hooks
- Interactive Approval Dialogs

🤝 Swarm Coordination

- Subagent Spawning & Delegation
- Team Registry & Task Management
- Background Task Lifecycle
- ClawTeam Integration (Roadmap)

Start here: Quick Start · Provider Compatibility · Showcase · Contributing · Changelog

---

## 🚀 Quick Start

### 1. Install

#### Linux / macOS / WSL

```bash
# One-click install
curl -fsSL https://raw.githubusercontent.com/HKUDS/OpenHarness/main/scripts/install.sh | bash

# Or via pip
pip install openharness-ai
```

#### Windows (Native)

```powershell
# One-click install (PowerShell)
iex (Invoke-WebRequest -Uri 'https://raw.githubusercontent.com/HKUDS/OpenHarness/main/scripts/install.ps1')

# Or via pip
pip install openharness-ai
```

**Note**: Windows support is now native. In PowerShell, use `openh` instead of `oh` because `oh` can resolve to the built-in `Out-Host` alias.

### 2. Configure

```bash
oh setup  # interactive wizard: pick a provider, authenticate, done
# On Windows PowerShell, use: openh setup
```

Supports **Claude / OpenAI / Copilot / Codex / Moonshot (Kimi) / GLM / MiniMax** and any compatible endpoint.

### 3. Run

```bash
oh
# On Windows PowerShell, use: openh
```

Oh my Harness!

The model is the agent. The code is the harness.

Thanks for visiting ✨ OpenHarness!